Equal AI Responsible Governance Framework: A Path to Fairness

The Equal AI Responsible Governance Framework is more than just a catchy phrase; it’s a roadmap for building artificial intelligence systems that are fair, transparent, and accountable. This framework acknowledges the potential for bias in AI and seeks to create a world where AI benefits everyone, regardless of background or identity.

The framework outlines key components like data governance, model development, deployment, and monitoring. It emphasizes the importance of ethical review boards and independent audits to ensure AI systems adhere to ethical standards. The goal is to build trust in AI by making decision-making processes transparent and understandable to all stakeholders.

Defining Equal AI

The pursuit of equal AI is a crucial endeavor, striving to ensure that artificial intelligence systems are developed and deployed in a way that is fair, transparent, accountable, and inclusive. This involves addressing the potential for bias and discrimination that can arise from the design, training, and application of AI systems.

By embracing principles of equal AI, we can create a future where AI benefits all members of society equitably.

Principles of Equal AI

The core principles of equal AI guide the development and deployment of AI systems that promote fairness and equity. These principles provide a framework for ethical and responsible AI development.

- Fairness: AI systems should be designed and implemented in a way that avoids discrimination and promotes fairness for all individuals, regardless of their background, identity, or characteristics. This requires addressing biases in data, algorithms, and decision-making processes.

- Transparency: The workings of AI systems should be transparent and understandable to the public, allowing for scrutiny and accountability. This includes providing clear explanations of how decisions are made, the data used, and the potential for bias.

- Accountability: Mechanisms should be in place to ensure that those responsible for developing and deploying AI systems are accountable for their actions and the outcomes of their systems. This includes establishing clear lines of responsibility and mechanisms for redress.

- Inclusivity: AI systems should be designed and developed with the needs and perspectives of diverse groups in mind, ensuring that they are accessible and beneficial to all members of society. This requires engaging with diverse communities and stakeholders in the design and development process.

Examples of Bias in AI Systems

Bias can manifest in AI systems in various ways, leading to unfair and discriminatory outcomes. This bias can stem from the data used to train AI models, the algorithms employed, or the design choices made by developers.

- Data Bias: When training data reflects existing societal biases, AI systems can perpetuate and amplify these biases. For example, if a facial recognition system is trained primarily on images of individuals with lighter skin tones, it may perform poorly when identifying individuals with darker skin tones.

- Algorithmic Bias: Even when trained on unbiased data, algorithms themselves can exhibit bias. This can occur due to the way algorithms are designed, the choices made by developers, or the inherent limitations of the algorithms themselves. For example, an algorithm designed to predict recidivism may disproportionately flag individuals from certain racial or socioeconomic backgrounds as high risk.

- Design Bias: The design choices made by developers can also contribute to bias in AI systems. For example, if a chatbot is designed to respond in a specific language or dialect, it may exclude individuals who do not speak that language or dialect.

Consequences of Unequal AI

Unequal AI can have significant negative consequences for individuals and society as a whole. These consequences can range from individual discrimination to systemic inequalities.

- Discrimination in Employment: AI systems used for hiring or promotion decisions may perpetuate existing biases, leading to discrimination against individuals from certain groups.

- Bias in Criminal Justice: AI systems used in criminal justice, such as risk assessment tools, can perpetuate racial biases, leading to unfair sentencing and increased incarceration rates for individuals from marginalized groups.

- Exacerbation of Social Inequalities: Unequal AI can exacerbate existing social inequalities by amplifying existing biases and creating new forms of discrimination.

Responsible AI Governance

A robust framework for responsible AI governance is crucial to ensure that AI systems are developed, deployed, and used ethically and effectively. This framework encompasses various components, each playing a vital role in ensuring the responsible and beneficial use of AI.

Data Governance

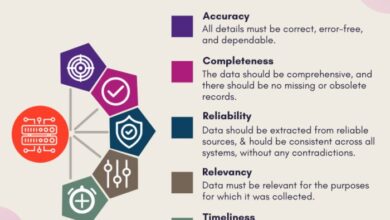

Data governance lays the foundation for responsible AI. It involves establishing clear policies and procedures for collecting, storing, using, and sharing data. This includes:

- Defining data quality standards and implementing data validation procedures to ensure data accuracy, completeness, and consistency.

- Implementing data privacy and security measures to protect sensitive information and comply with relevant regulations like GDPR and CCPA.

- Establishing clear data ownership and access controls to ensure data is used responsibly and ethically.

Model Development

Responsible AI governance extends to the development of AI models. This involves:

- Adopting ethical principles and guidelines throughout the model development process, including fairness, transparency, accountability, and non-discrimination.

- Employing robust model validation techniques to assess model performance, identify potential biases, and ensure the model meets predefined accuracy and reliability standards.

- Documenting the model development process, including data sources, algorithms used, and performance metrics, to ensure transparency and accountability.
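As a rough illustration of the documentation step, the sketch below records data sources, the algorithm, and performance metrics in a single structured record that can be stored alongside a model. The class name `ModelRecord`, its fields, and the example values are all hypothetical; a real project would adapt the fields to its own review process.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Hypothetical documentation record for one trained model."""
    name: str
    data_sources: list
    algorithm: str
    metrics: dict = field(default_factory=dict)
    known_limitations: list = field(default_factory=list)


# Illustrative values only; none of these come from a real system.
record = ModelRecord(
    name="example-screening-model",
    data_sources=["applications_2020_2023.csv"],
    algorithm="logistic regression",
    metrics={"accuracy": 0.87},
    known_limitations=["few training samples for applicants under 21"],
)

# Serialize to JSON so the record can be versioned and reviewed with the model.
record_json = json.dumps(asdict(record), indent=2, sort_keys=True)
print(record_json)
```

Keeping the record as plain data makes it easy for auditors and review boards to read without running any model code.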

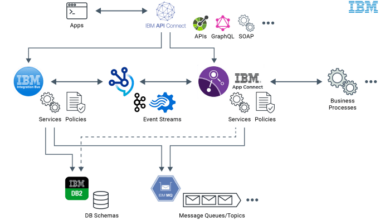

Deployment

Deployment of AI systems requires careful consideration to ensure their responsible and safe integration into existing systems and processes. This includes:

- Conducting thorough risk assessments to identify potential risks associated with the deployment of AI systems and develop mitigation strategies.

- Implementing monitoring and evaluation mechanisms to track the performance of deployed AI systems, identify potential issues, and ensure they continue to operate as intended.

- Developing clear communication strategies to inform stakeholders about the deployment of AI systems, their intended use, and any potential risks or limitations.

Monitoring

Continuous monitoring is essential for responsible AI governance. This involves:

- Tracking the performance of AI systems over time to identify any changes in performance or potential biases.

- Auditing AI systems regularly to ensure they comply with ethical guidelines and regulatory requirements.

- Implementing mechanisms for feedback and improvement, allowing for adjustments and updates to AI systems based on ongoing monitoring and evaluation.
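One simple way to track performance over time is to compare per-group accuracy in the current period against a stored baseline and flag any group that has degraded. The sketch below assumes accuracy has already been computed per group; the function name `flag_degradation`, the group labels, and the 0.05 tolerance are hypothetical choices, not part of the framework.

```python
def flag_degradation(baseline, current, tolerance=0.05):
    """Return the groups whose accuracy fell more than `tolerance` below baseline."""
    return sorted(
        group
        for group in baseline
        if group in current and baseline[group] - current[group] > tolerance
    )


# Hypothetical per-group accuracy at launch and in the latest monitoring window.
baseline = {"group_a": 0.92, "group_b": 0.90}
current = {"group_a": 0.91, "group_b": 0.80}

flagged = flag_degradation(baseline, current)
print(flagged)  # ['group_b']
```

A flagged group is a prompt for human review, such as an audit or retraining decision, rather than an automatic verdict that the system is biased.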

Ethical Review Boards

Ethical review boards play a critical role in ensuring the ethical development and deployment of AI systems. These boards consist of experts from various fields, including ethics, law, technology, and social sciences. They are responsible for:

- Reviewing proposed AI projects to assess their ethical implications and potential risks.

- Providing guidance and recommendations to developers to ensure AI systems are developed and deployed ethically.

- Monitoring the ongoing use of AI systems to identify any ethical concerns and ensure they are addressed promptly.

Independent Audits

Independent audits are crucial for ensuring the accountability and transparency of AI systems. These audits involve:

- Evaluating the development, deployment, and use of AI systems against ethical guidelines and regulatory requirements.

- Identifying potential biases, risks, and ethical concerns associated with AI systems.

- Providing recommendations for improvements to enhance the ethical and responsible use of AI.

Transparency

Transparency is paramount for responsible AI governance. It involves making AI decision-making processes understandable to stakeholders. This can be achieved through:

- Providing clear explanations of how AI systems work, including the data used, the algorithms employed, and the decision-making process.

- Making available documentation and reports related to AI systems, including model performance metrics, risk assessments, and ethical considerations.

- Creating opportunities for stakeholders to engage with AI developers and ask questions about the development and deployment of AI systems.

Implementing Equal AI

Now that we have established the foundational principles of Equal AI and Responsible AI Governance, let’s delve into the practical strategies and best practices for implementing these principles in real-world AI systems. The goal is to ensure that AI systems are developed and deployed in a way that promotes fairness, equity, and inclusivity for all.

Mitigating Bias in Data Collection and Model Training

Bias in AI systems often stems from biased data used for training. Addressing this requires a multifaceted approach, starting with data collection and extending to model training.

- Ensure Data Representativeness: Collect data from diverse populations to capture the full spectrum of human experiences and avoid over-representation of certain groups. This involves actively seeking out data from underrepresented communities and ensuring that the data reflects the real-world distribution of characteristics.

- Identify and Address Data Biases: Analyze the collected data for potential biases, such as gender, race, ethnicity, socioeconomic status, or other sensitive attributes. This can be done through statistical analysis, visualization techniques, and expert review. Once identified, take steps to correct or mitigate the biases, which may involve data augmentation, re-weighting, or removing biased samples.

- Implement Data Preprocessing Techniques: Utilize techniques like data normalization, feature engineering, and data balancing to reduce the impact of biased data on model training. These methods aim to create a more balanced and representative dataset for training, minimizing the influence of skewed or biased data points.
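The re-weighting idea mentioned above can be sketched in a few lines. This minimal example assigns each training sample a weight inversely proportional to the size of its group, so that every group contributes the same total weight. The function name `balancing_weights` and the group labels are hypothetical, and this is only one of several possible re-weighting schemes.

```python
from collections import Counter


def balancing_weights(groups):
    """Return one weight per sample so that each group has equal total weight."""
    counts = Counter(groups)
    n_samples = len(groups)
    n_groups = len(counts)
    # weight = n_samples / (n_groups * size of the sample's group)
    return [n_samples / (n_groups * counts[g]) for g in groups]


# Hypothetical group labels: group "a" is over-represented 4 to 2.
groups = ["a", "a", "a", "a", "b", "b"]
weights = balancing_weights(groups)
print(weights)  # [0.75, 0.75, 0.75, 0.75, 1.5, 1.5]
```

Many training libraries accept per-sample weights like these, but re-weighting only addresses imbalance in group size; it does not correct labels or features that are themselves biased.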

Best Practices for Fair and Equitable AI Systems

Developing and deploying fair and equitable AI systems requires a holistic approach that encompasses various aspects of the AI lifecycle.

- Establish Clear Ethical Guidelines: Define clear ethical principles and guidelines that guide the development and deployment of AI systems. These guidelines should address issues of fairness, accountability, transparency, and privacy, ensuring that ethical considerations are integrated into every stage of the AI lifecycle.

- Conduct Fairness Audits: Regularly assess the fairness of AI systems using appropriate metrics and methodologies. This involves analyzing the system’s performance across different demographic groups to identify potential disparities and biases. Fairness audits provide valuable insights for identifying and mitigating bias, ensuring that the system treats individuals fairly and equitably.

- Promote Transparency and Explainability: Design AI systems that are transparent and explainable, allowing users to understand how the system works and why it makes certain decisions. This helps build trust and accountability, allowing users to identify potential biases and challenge unfair outcomes. Transparency and explainability are crucial for ensuring that AI systems are used responsibly and ethically.

- Foster Collaboration and Inclusivity: Encourage collaboration and participation from diverse stakeholders, including experts in ethics, social sciences, and law. This ensures that AI systems are developed and deployed in a way that reflects the needs and values of the broader community, promoting inclusivity and fairness.
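A fairness audit of the kind described above often starts by comparing outcomes across demographic groups. The sketch below computes the rate of positive predictions per group and the gap between the highest and lowest rate, a measure commonly called the demographic parity difference. The function names, group labels, and predictions are hypothetical, and demographic parity is only one of several fairness metrics an audit might use.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(rates):
    """Difference between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical binary decisions (1 = approved) for two groups of four people.
predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_gap(rates)
print(rates)  # {'a': 0.75, 'b': 0.25}
print(gap)    # 0.5
```

A gap of zero means all groups receive positive decisions at the same rate. What size of gap is acceptable is a policy question for the review board, not something the code can decide.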

Types of AI Bias and Mitigation Techniques

Understanding different types of AI bias is crucial for effectively mitigating their impact. Here’s a table outlining common types of AI bias and corresponding mitigation techniques:

| Type of Bias | Description | Mitigation Techniques |
|---|---|---|
| Representation Bias | The training data does not adequately represent the real-world population, leading to biased predictions for certain groups. | Ensure data representativeness by collecting data from diverse populations. |
| Measurement Bias | The data collection process introduces systematic errors, leading to inaccurate or biased measurements. | Use standardized measurement tools and procedures. |
| Selection Bias | The selection of training data is not random, leading to biased predictions for certain groups. | Use random sampling techniques to ensure a representative sample. |
| Algorithmic Bias | The algorithm itself is biased, leading to unfair or discriminatory outcomes. | Use fairness-aware algorithms that explicitly incorporate fairness constraints. |

The Role of Stakeholders

Equal AI, with its focus on fairness and inclusivity, necessitates a collaborative effort from diverse stakeholders. This section explores the crucial roles of developers, policymakers, users, and the public in shaping a future where AI benefits everyone equitably.

Collaboration for Responsible AI

Effective collaboration is essential to ensure responsible AI development and deployment. This involves open communication, shared goals, and a commitment to ethical principles. By working together, stakeholders can address potential biases, mitigate risks, and maximize the positive impact of AI.

- Joint Research and Development: Collaboration between researchers, developers, and policymakers can lead to the creation of AI systems that are fairer and less biased by design. This can involve developing new algorithms, testing methodologies, and establishing ethical guidelines.

- Data Sharing and Standardization: Sharing data across stakeholders can help build more representative and inclusive datasets, reducing the risk of biased outcomes. Standardized data formats and sharing protocols can facilitate collaboration and ensure data quality.

- Public Engagement and Education: Engaging the public in discussions about AI ethics and governance is crucial. Educating users about the potential benefits and risks of AI can foster informed decision-making and promote responsible use.

Stakeholder Responsibilities

Each stakeholder group carries distinct responsibilities in promoting equal AI.

- Developers: Developers have a primary responsibility to design and build AI systems that are fair, transparent, and accountable. This includes:

  - Addressing Bias: Developers must actively identify and mitigate bias in datasets, algorithms, and training processes.

  - Transparency and Explainability: AI systems should be designed to be transparent, allowing users to understand how decisions are made. Explainability helps build trust and accountability.

  - User-Centered Design: AI systems should be designed with the needs and perspectives of diverse users in mind, ensuring accessibility and inclusivity.

- Policymakers: Policymakers play a vital role in establishing regulations and guidelines that promote responsible AI development and use. This includes:

  - Ethical Frameworks: Developing ethical frameworks for AI development and deployment that address issues of fairness, accountability, and transparency.

  - Data Privacy and Security: Implementing strong data privacy and security regulations to protect user data and prevent misuse.

  - Algorithmic Transparency and Auditing: Requiring transparency in algorithms and establishing mechanisms for auditing AI systems to ensure fairness and accountability.

- Users: Users have a responsibility to be informed consumers of AI and to advocate for responsible AI practices. This includes:

  - Understanding AI Systems: Users should be aware of the capabilities and limitations of AI systems, as well as the potential risks and benefits.

  - Data Privacy and Security: Users should be mindful of their data privacy and security and take steps to protect their information.

  - Feedback and Advocacy: Users should provide feedback to developers and policymakers about their experiences with AI systems and advocate for responsible AI practices.

- Public: The public has a responsibility to engage in discussions about AI ethics and governance and to hold stakeholders accountable for responsible AI practices. This includes:

  - Education and Awareness: Promoting public education and awareness about AI, its potential benefits and risks, and the importance of ethical development.

  - Advocacy and Participation: Participating in public debates and advocating for policies that promote responsible AI development and use.

  - Critical Thinking: Exercising critical thinking when interacting with AI systems and challenging biased or discriminatory outcomes.

Examples of Successful Collaborations

Several successful collaborations have advanced equal AI principles:

- The Partnership on AI: This consortium of industry leaders, academic institutions, and civil society organizations focuses on research and best practices for responsible AI development.

- The AI Now Institute: This research institute investigates the social implications of AI and advocates for policies that promote fairness and equity.

- The Algorithmic Justice League: This non-profit organization works to identify and address bias in AI systems and to promote algorithmic accountability.

Emerging Trends and Future Directions

The pursuit of equal AI is a dynamic and evolving field, constantly shaped by emerging technologies, evolving societal values, and the ongoing development of ethical frameworks. Understanding these trends is crucial for ensuring that AI remains a force for good, promoting fairness and inclusivity across all aspects of society.

The Rise of Explainable AI (XAI)

Explainable AI (XAI) is becoming increasingly important for building trust in AI systems. XAI aims to make AI decisions transparent and understandable to humans, enabling users to comprehend the reasoning behind AI outputs. This transparency is crucial for identifying and mitigating bias, fostering accountability, and ensuring that AI systems are fair and equitable.

“Explainable AI (XAI) is the process of making AI systems more transparent and understandable to humans.”

The Integration of AI into Diverse Domains

AI is rapidly being integrated into various sectors, from healthcare and education to finance and transportation. This widespread adoption presents both opportunities and challenges for ensuring equal AI. It is crucial to address potential biases and inequities that may arise as AI systems are deployed in diverse contexts.

The Importance of Data Governance

Data is the lifeblood of AI, and the quality and diversity of data are crucial for developing fair and unbiased AI systems. Emerging trends in data governance, such as data anonymization and differential privacy, aim to protect sensitive information while enabling responsible AI development.
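To make differential privacy slightly more concrete, the sketch below applies the Laplace mechanism to a counting query: noise drawn from a Laplace distribution with scale `sensitivity / epsilon` is added to the true count before it is released. The function names and the example values are hypothetical. The code uses Python's general-purpose `random` module for readability, which is an illustrative simplification; a production system would rely on a vetted differential privacy library.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    # expovariate takes a rate, so rate = 1 / scale gives draws with mean `scale`.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng, sensitivity=1.0):
    """Release a count with Laplace noise; smaller epsilon means more noise."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical query: how many records in a dataset have some attribute.
rng = random.Random(0)  # fixed seed only so the sketch is repeatable
noisy = private_count(100, epsilon=0.5, rng=rng)
print(noisy)
```

Adding or removing one person changes a count by at most 1, which is why the sensitivity defaults to 1. The choice of epsilon trades accuracy against privacy and is a governance decision as much as a technical one.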

The Growing Role of AI Ethics

Ethical considerations are becoming increasingly central to AI development and deployment. This includes addressing issues such as bias, fairness, accountability, and transparency. AI ethics frameworks are evolving to guide the development of AI systems that align with human values and promote societal well-being.