Generative AI UK Regulation: Navigating the Future of AI

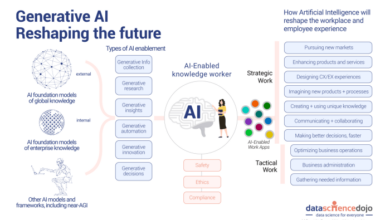

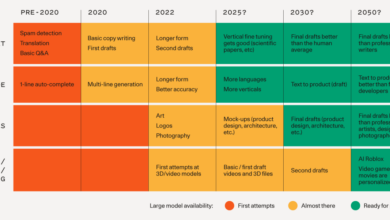

Generative AI is forcing the UK to rethink how it regulates technology. The UK, long a pioneer in AI innovation, is now grappling with the transformative power of generative AI: technologies such as large language models (LLMs) and image generators that can create text, images, and even code. These tools open up exciting possibilities but also pose complex challenges.

This blog delves into the evolving landscape of AI regulation in the UK, specifically focusing on the unique regulatory considerations surrounding generative AI. We’ll explore how the UK’s AI strategy is adapting to this new frontier, analyzing the potential impact on various sectors, and examining the ethical dilemmas that need to be addressed.

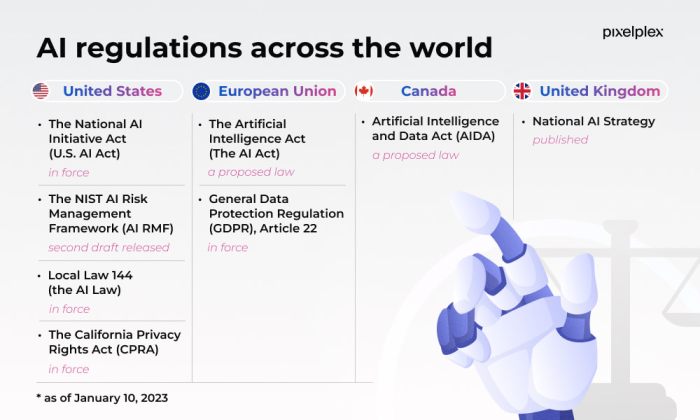

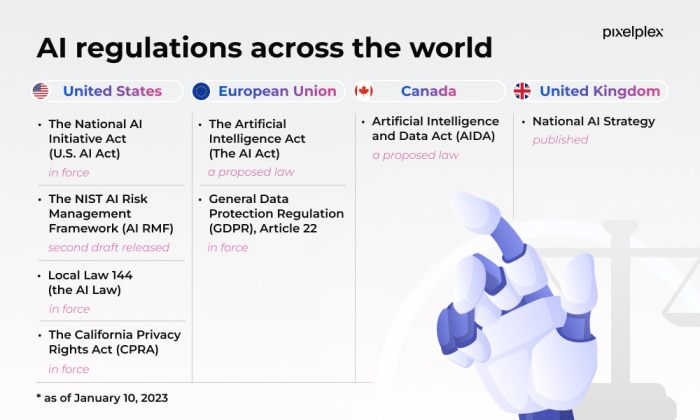

Current Landscape of AI Regulation in the UK

The UK is actively developing its regulatory framework for AI, aiming to balance innovation with responsible development and deployment. So far, rather than passing a single AI-specific law, the government has favored a proportionate, flexible approach that promotes ethical AI and addresses potential risks through existing regulators.

Key Legislation and Initiatives

The UK’s regulatory landscape for AI is evolving, with a combination of existing legislation and new initiatives.

- Data Protection Act 2018 (DPA 2018): This act, which sits alongside the UK GDPR, lays the foundation for data privacy and security, both crucial for responsible AI development. It sets out principles for the fair and lawful processing of personal data, including processing carried out by AI systems.

- The National AI Strategy (2021): This strategy outlines the UK government's ambition to become a global AI superpower. It sets out a series of initiatives to promote innovation, investment, and responsible AI development.

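- A pro-innovation approach to AI regulation (2023): This white paper proposes a principles-based, context-specific approach. Rather than creating a new AI regulator, it asks existing regulators to apply five cross-sectoral principles (safety, security and robustness; appropriate transparency and explainability; fairness; accountability and governance; contestability and redress) within their own sectors.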

- The AI Safety Summit (2023): The UK hosted this global summit at Bletchley Park in November 2023 to address the safety and security of frontier AI systems, bringing together governments, AI companies, and experts from around the world.

UK Government’s AI Strategy and Goals

The UK government’s AI strategy aims to achieve several key goals:

- Promote innovation and investment: The strategy encourages investment in AI research, development, and deployment, aiming to create a thriving AI ecosystem.

- Develop a skilled workforce: The government is investing in education and training programs to develop a skilled workforce in AI, ensuring the UK has the talent needed to compete in the global AI landscape.

- Ensure responsible AI development: The strategy emphasizes the importance of responsible AI development, addressing ethical considerations and mitigating potential risks.

- Promote international collaboration: The UK is actively engaging with other countries to develop global standards for AI regulation and governance.

Comparison with Other Countries

The UK's approach to AI regulation differs from the EU's more prescriptive approach, embodied in the AI Act. The UK's proportionate, flexible approach is intended to leave more room for innovation and adaptability while still addressing key ethical and safety concerns.

- EU AI Act: This regulation categorizes AI systems by risk level, banning certain unacceptable-risk practices and imposing the strictest requirements on high-risk systems.

- UK's Approach: Rather than a single horizontal law, the UK relies on existing sector regulators (such as the ICO, FCA, CMA, and Ofcom) to apply shared principles to AI used in their domains, regulating by context and outcome rather than by classifying the technology itself.

Focus on Generative AI

Generative AI, particularly large language models (LLMs) such as ChatGPT, presents unique regulatory challenges in the UK. These models can produce highly realistic and convincing content, raising concerns about potential misuse and about how well existing regulations apply to them.

The UK is still working out how to regulate generative AI, balancing innovation with ethical considerations. The interplay between regulatory frameworks and technological advancements will be crucial for harnessing the potential of generative AI while safeguarding societal values.

Regulatory Challenges Posed by Generative AI

Generative AI models pose several challenges for existing regulations. Much of the UK's current regulatory framework predates generative AI and may not be sufficiently equipped to address its complexities.

- Content Authenticity and Misinformation: Generative models can produce realistic-looking text, images, audio, and video, making it hard to distinguish genuine content from AI-generated content. This raises concerns about the spread of misinformation and manipulation.

- Copyright and Intellectual Property: Generative AI models can be trained on massive datasets, including copyrighted materials. Determining ownership and licensing rights for AI-generated content can be complex, potentially leading to copyright infringement disputes.

- Bias and Discrimination: LLMs are trained on vast amounts of data, which may contain biases and prejudices. These biases can be reflected in the outputs generated by the models, potentially perpetuating discrimination and harmful stereotypes.

- Privacy and Data Protection: Generative AI models often rely on personal data for training. This raises concerns about data privacy and the potential for misuse of sensitive information.

- Transparency and Explainability: The inner workings of LLMs are often opaque, making it difficult to understand how they arrive at their outputs. This lack of transparency raises concerns about accountability and the potential for unintended consequences.
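To make the content-authenticity concern more concrete, here is a minimal sketch of one possible mitigation: attaching a signed provenance label to AI-generated content so that a downstream platform can check that the content was declared as AI-generated and has not been altered since. This is a simplified, hypothetical scheme (the names `label_output`, `verify_label`, and `SIGNING_KEY` are illustrative), not an implementation of any standard such as C2PA. Real deployments would use public-key signatures and would still have to cope with labels simply being stripped.

```python
import hashlib
import hmac
import json

# Hypothetical provider-held secret. A real scheme would keep keys in a
# key-management service and use public-key signatures so that anyone
# can verify a label without holding the secret.
SIGNING_KEY = b"example-provider-secret"


def _sign(record: dict) -> str:
    # Canonical JSON (sorted keys) so field order cannot change the signature.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def label_output(content: str, model_name: str) -> dict:
    """Build a signed record declaring `content` as AI-generated."""
    record = {
        "ai_generated": True,
        "model": model_name,
        "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
    }
    record["signature"] = _sign(record)
    return record


def verify_label(content: str, record: dict) -> bool:
    """True only if the record is untampered and matches this exact content."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    signature_ok = hmac.compare_digest(_sign(unsigned), record.get("signature", ""))
    content_hash = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return signature_ok and unsigned.get("content_sha256") == content_hash


text = "A short AI-written product description."
label = label_output(text, model_name="example-llm")
print(verify_label(text, label))        # True
print(verify_label(text + "!", label))  # False: the content was edited
```

Signing a hash of the content, rather than the content itself, keeps the label small while still binding it to one exact piece of text.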

Key Regulatory Considerations for Generative AI

Generative AI, with its ability to create novel content, presents unique challenges for regulators. Balancing innovation with responsible development and deployment requires careful consideration of several key regulatory considerations. These considerations aim to ensure that generative AI is used ethically, safely, and for the benefit of society.

Transparency and Explainability

Transparency and explainability are crucial for understanding how generative AI systems arrive at their outputs. This is particularly important for systems that generate text, images, or other content that could be misconstrued or misused. For instance, a generative AI system used for generating news articles should be transparent about its sources and methods to avoid spreading misinformation.

- Clear Documentation: Developers should provide clear documentation detailing the training data, algorithms, and parameters used in generative AI systems. This documentation should be readily accessible to users, regulators, and researchers.

- Auditable Models: Generative AI models should be designed with auditability in mind. This means that the decision-making process should be traceable and verifiable, allowing for independent assessments of the model's fairness, bias, and accuracy.

- User-Friendly Explanations: Generative AI systems should be able to provide understandable explanations of their outputs in a way that is accessible to users. This could involve providing summaries of the reasoning behind the generated content or highlighting key factors that influenced the output.
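As an illustration of the auditability point, the sketch below keeps a hash-chained, append-only log of generation events, so that later edits to recorded entries can be detected during an independent review. The `AuditLog` class and the event fields are hypothetical; this shows a general tamper-evidence technique, not a record format required by any UK regulator.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _entry_hash(event, prev_hash)
        self.entries.append({"event": event, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered, or removed (non-final) entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or _entry_hash(entry["event"], prev_hash) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"model": "example-llm", "prompt_id": "p-001", "flagged": False})
log.append({"model": "example-llm", "prompt_id": "p-002", "flagged": True})
print(log.verify())  # True
log.entries[0]["event"]["flagged"] = True  # simulate tampering with a stored record
print(log.verify())  # False
```

Because each entry commits to the previous hash, rewriting any recorded event invalidates the chain from that point on. Truncating the newest entries is not caught by this check alone, so a real system would also store the latest hash somewhere independent of the log.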

Risk Assessment and Mitigation

A robust framework for assessing and mitigating risks associated with generative AI models is essential. This framework should encompass various aspects, including potential biases, ethical concerns, and unintended consequences.

- Bias Detection and Mitigation: Generative AI models can inherit biases from the training data. Therefore, developers need to implement mechanisms to identify and mitigate potential biases in the model's outputs. This could involve using diverse and representative datasets, incorporating fairness metrics into model training, and providing mechanisms for users to report and address biases.

- Safety and Security: Generative AI systems should be designed with security and safety in mind to prevent malicious use. This includes measures to prevent the generation of harmful content, such as hate speech or misinformation, and to protect the system from unauthorized access or manipulation.

- Impact Assessment: Before deploying generative AI systems, developers should conduct comprehensive impact assessments to understand the potential social, economic, and environmental consequences. This assessment should consider the potential risks and benefits of the system and identify strategies for mitigating negative impacts.
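One common way to check outputs for bias is to compare how often each group receives a favorable outcome. The sketch below computes the demographic parity difference, which is only one fairness metric among several (others, such as equalized odds, can point in a different direction). It assumes you already have binary outcomes (1 = favorable) from an evaluation set labeled by group; the function names are illustrative and the 0.1 tolerance is a hypothetical figure, not a UK regulatory threshold.

```python
from collections import defaultdict

TOLERANCE = 0.1  # hypothetical acceptable gap; no UK rule prescribes this figure


def selection_rates(outcomes, groups):
    """Share of favorable outcomes (1) for each group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        favorable[group] += outcome
    return {group: favorable[group] / totals[group] for group in totals}


def demographic_parity_difference(outcomes, groups):
    """Largest gap in selection rate between any two groups (0.0 means parity)."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


# Toy evaluation set: 1 = the system's output was judged favorable for that person.
outcomes = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
gap = demographic_parity_difference(outcomes, groups)
print(gap)  # 0.5 (group A: 0.75, group B: 0.25)
if gap > TOLERANCE:
    print("Potential bias: review training data and outputs")
```

A large gap does not prove discrimination on its own, but it is a cheap signal for deciding where a deeper review of data and outputs is needed.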

Data Governance and Privacy

Generative AI systems rely heavily on large datasets for training. Data governance and privacy regulations play a crucial role in addressing concerns related to the use of personal data in generative AI.

- Data Anonymization and Privacy Preservation: Data used to train generative AI models should be anonymized or pseudonymized where possible to protect individual privacy. This involves removing or masking personally identifiable information while preserving the data's utility for model training. Pseudonymized data still counts as personal data under UK data protection law; only truly anonymized data falls outside it.

- Data Access and Control: Individuals should have control over their data and be informed about how it is used in generative AI systems. This includes the right to access, correct, or delete their data. Data access should be restricted to authorized users and purposes.

- Compliance with Data Protection Laws: Developers and users of generative AI systems must comply with relevant data protection laws, namely the UK GDPR and the Data Protection Act 2018 (and the EU GDPR where they offer services to, or monitor, people in the EU). This includes ensuring that data is processed lawfully, fairly, and transparently.
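The pseudonymization step described above can be sketched as follows: direct identifiers are replaced with a keyed hash (HMAC-SHA256), and email addresses in free text are masked before the data is used for training. The key, function names, and email pattern are hypothetical, and this is nowhere near complete PII detection. Because anyone holding the key can re-identify people by hashing candidate identifiers, the output is pseudonymized rather than anonymized data and remains personal data.

```python
import hashlib
import hmac
import re

# Hypothetical secret key. Store it separately from the dataset: anyone holding it
# can re-identify people by hashing candidate identifiers and matching pseudonyms.
PSEUDONYM_KEY = b"store-me-in-a-separate-key-vault"

# Simple email pattern for illustration only; real PII detection needs far more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def pseudonymize_id(identifier: str) -> str:
    """Replace a direct identifier with a stable keyed hash (HMAC-SHA256)."""
    digest = hmac.new(PSEUDONYM_KEY, identifier.encode("utf-8"), hashlib.sha256)
    return "pid_" + digest.hexdigest()[:16]


def mask_emails(text: str) -> str:
    """Redact email addresses found in free text."""
    return EMAIL_RE.sub("[EMAIL]", text)


record = {"user_id": "customer-42", "note": "Contact jane.doe@example.com about the refund."}
clean = {
    "user_id": pseudonymize_id(record["user_id"]),
    "note": mask_emails(record["note"]),
}
print(clean["note"])  # Contact [EMAIL] about the refund.
```

Using a keyed hash rather than a plain SHA-256 matters: without the key, an attacker could hash a list of likely customer IDs and match them against the pseudonyms.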

Impact on Different Sectors

Generative AI, with its ability to create new content, has the potential to revolutionize various sectors in the UK. This technology presents both opportunities and challenges, requiring careful consideration of its regulatory landscape.

Healthcare

Generative AI can transform healthcare by assisting in drug discovery, personalized medicine, and medical imaging analysis. It can analyze large datasets to identify patterns and predict potential drug targets, accelerating drug development. Personalized medicine can benefit from AI-powered algorithms that tailor treatment plans based on individual patient characteristics.

However, concerns exist about data privacy, algorithmic bias, and the potential for misuse of AI-generated medical information.

Regulatory Challenges and Opportunities

- Data Privacy: Regulations such as the UK GDPR, which treats health data as a special category requiring extra safeguards, need to be considered when training AI models on sensitive patient data. Ensuring data anonymization and appropriate consent mechanisms is crucial.

- Algorithmic Bias: AI models trained on biased data can perpetuate existing health disparities. Regulatory frameworks need to address bias detection and mitigation strategies.

- Transparency and Accountability: Clear guidelines are needed to ensure transparency in AI-powered medical decisions, allowing healthcare professionals to understand the reasoning behind AI recommendations.

- Liability: Determining liability in case of AI-related medical errors is a complex issue that requires careful consideration.

Finance

Generative AI can be used in finance for fraud detection, risk assessment, and personalized financial advice. AI models can analyze vast amounts of financial data to identify patterns indicative of fraudulent activity, enhancing fraud prevention. Generative AI can also create synthetic financial data for testing and training purposes, reducing the reliance on real-world data.
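The fraud-detection idea can be illustrated with a deliberately simple statistical stand-in rather than a generative model: flag transactions whose amount is far from the typical value, using a modified z-score built on the median and the median absolute deviation (MAD) so that the outliers themselves do not distort the baseline. The function name `flag_unusual_amounts`, the 3.5 cut-off (a commonly cited rule of thumb), and the sample amounts are illustrative assumptions; production fraud models use many more features than the amount alone.

```python
from statistics import median


def flag_unusual_amounts(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds `threshold`."""
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)  # median absolute deviation
    if mad == 0:
        # MAD is zero when most amounts equal the median, leaving no scale to
        # measure against; a real system would need a fallback rule here.
        return []
    return [
        i for i, a in enumerate(amounts)
        if abs(0.6745 * (a - med) / mad) > threshold
    ]


transactions = [12.50, 9.99, 15.00, 11.20, 13.80, 10.40, 950.00, 14.10]
print(flag_unusual_amounts(transactions))  # [6]: the 950.00 payment
```

The median and MAD are used instead of the mean and standard deviation because a single very large payment would inflate both of those and could hide itself.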

Regulatory Challenges and Opportunities

- Financial Stability: AI-powered financial systems need to be robust and resilient to prevent disruptions to financial markets. Regulators must ensure that AI models are not susceptible to manipulation or malicious attacks.

- Market Manipulation: The use of generative AI to create synthetic financial data could potentially be used for market manipulation. Regulatory frameworks need to address the potential for misuse.

- Transparency and Explainability: Financial institutions need to be able to explain the reasoning behind AI-driven decisions to regulators and customers, fostering trust and accountability.

- Cybersecurity: AI systems in finance are vulnerable to cyberattacks. Regulatory frameworks need to address cybersecurity measures to protect financial data and systems.

Education

Generative AI can revolutionize education by providing personalized learning experiences, creating adaptive learning platforms, and automating administrative tasks. AI-powered tutors can adapt to individual student needs, providing personalized feedback and support. Generative AI can also create educational content, such as interactive simulations and personalized learning materials.

Regulatory Challenges and Opportunities

- Educational Equity: AI-powered educational systems need to be designed to ensure equitable access and outcomes for all students, regardless of their background or socioeconomic status.

- Data Privacy: Student data collected for AI-powered education systems must be protected in accordance with data privacy regulations.

- Teacher Training: Teachers need to be equipped with the skills and knowledge to effectively integrate AI into their classrooms.

- Ethical Considerations: It is crucial to consider the ethical implications of using AI in education, such as potential bias in AI-powered assessments and the potential for AI to replace human interaction.

Regulatory Landscape Comparison

| Sector | Key Regulatory Considerations |
|---|---|
| Healthcare | Data privacy (GDPR), algorithmic bias, transparency, liability |
| Finance | Financial stability, market manipulation, transparency, cybersecurity |
| Education | Educational equity, data privacy, teacher training, ethical considerations |

Future Directions

The UK’s approach to regulating generative AI is still evolving, with the government actively exploring various avenues to ensure responsible innovation and mitigate potential risks. The UK’s AI strategy is expected to be further developed to address the unique challenges posed by generative AI.

This section will explore potential future regulatory frameworks, government strategies, and recommendations for fostering responsible development in this field.

Potential Regulatory Frameworks

The UK government might consider various approaches to regulate generative AI, including:

- Expanding Existing Frameworks: Existing frameworks and guidance, such as data protection law and the principles set out in the government's AI strategy and white paper, could be extended to cover generative AI. This approach would involve adapting existing principles and guidelines to address the specific characteristics of generative AI. For example, existing guidance on data governance and bias could be strengthened to ensure transparency and fairness in the development and deployment of generative AI systems.

- Sector-Specific Regulations: Tailored regulations could be introduced for specific sectors where generative AI is likely to have a significant impact. For instance, regulations could be developed for the financial sector to address risks associated with AI-generated financial reports, or for the healthcare sector to ensure the responsible use of AI in medical diagnostics.

- Sandboxes and Experimental Regulations: The UK government might consider creating regulatory sandboxes for generative AI, building on existing models such as the Financial Conduct Authority's regulatory sandbox, to allow experimentation and innovation within a controlled environment. This approach could facilitate the development and deployment of generative AI while minimizing potential risks. It would also provide valuable insights into the effectiveness of different regulatory approaches.

Further Development of AI Strategy

To address the challenges posed by generative AI, the UK government might consider the following steps:

- Promoting Research and Development: The government could invest in research and development to advance understanding of the ethical and societal implications of generative AI. This would include funding research on techniques to mitigate risks such as bias, misinformation, and misuse.

- Enhancing Public Awareness: Public awareness campaigns could be launched to educate the public about generative AI, its capabilities, and its potential risks. This would help foster informed discussions about the responsible use of these technologies.

- Strengthening International Cooperation: Collaboration with international partners is crucial for developing effective regulatory frameworks for generative AI. This would involve sharing best practices, coordinating research, and working together to address global challenges associated with these technologies.

Recommendations for Responsible Innovation

To foster responsible innovation and ethical development of generative AI, the following recommendations are crucial:

- Transparency and Explainability: Developers of generative AI systems should be transparent about the data used to train their models and the processes involved in generating outputs. This would help users understand the limitations and potential biases of these systems.

- Accountability and Oversight: Clear mechanisms for accountability and oversight should be established to ensure the responsible use of generative AI. This could involve developing ethical guidelines, establishing independent review boards, or implementing mechanisms for reporting and redress.

- Human-Centered Design: Generative AI systems should be designed with human users in mind, ensuring that they are safe, accessible, and beneficial. This would involve considering the potential impact of these systems on various user groups and incorporating user feedback into the design process.