NVIDIA Announces TensorRT-LLM: A Game Changer for AI

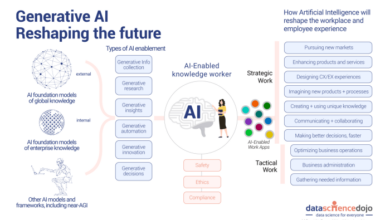

NVIDIA has announced TensorRT-LLM, a new framework for optimizing large language models, and the announcement has drawn considerable attention in the AI community.

The tool is intended to change how these complex models are deployed and used, with the promise of better inference performance and efficiency.

TensorRT-LLM is designed to address the unique challenges associated with deploying large language models, which often require significant computational resources and can be slow to execute. NVIDIA’s framework tackles these issues head-on, offering a streamlined approach to optimizing these models for maximum performance.

The key lies in its ability to leverage the power of NVIDIA’s GPUs, enabling significant speedups and reduced latency.

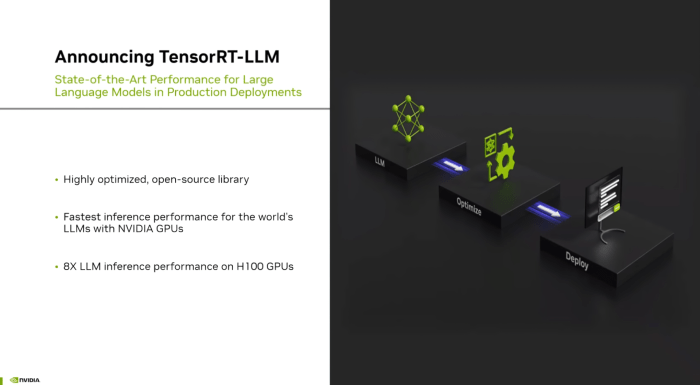

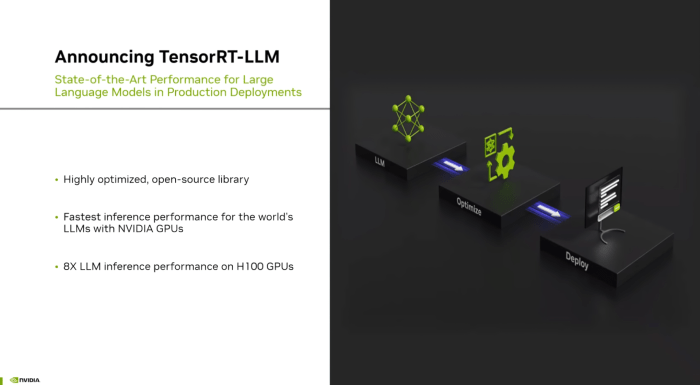

NVIDIA TensorRT-LLM Announcement

NVIDIA’s recent announcement of TensorRT-LLM marks a significant milestone in the advancement of large language models (LLMs) and their deployment in real-world applications. This new software library, designed to accelerate and optimize LLM inference, promises to revolutionize the way we interact with AI, opening doors to more efficient and powerful AI solutions.


TensorRT-LLM Features and Capabilities

TensorRT-LLM is a highly optimized inference engine specifically tailored for LLMs. It leverages NVIDIA’s expertise in high-performance computing and deep learning to deliver substantial performance improvements. Here are some of its key features and capabilities:

- Optimized for LLMs: TensorRT-LLM is designed specifically for LLMs, taking into account their unique characteristics and computational demands. It employs techniques like graph optimization and kernel fusion to maximize inference speed and efficiency.

- Performance Enhancements: TensorRT-LLM delivers significant performance gains, enabling faster inference times and reduced latency. This allows for real-time interactions with LLMs, making them more responsive and user-friendly.

- Reduced Resource Consumption: By optimizing inference, TensorRT-LLM reduces the computational resources required to run LLMs. This translates to lower power consumption and reduced costs, making LLMs more accessible for a wider range of applications.

- Simplified Deployment: TensorRT-LLM simplifies the deployment of LLMs by providing a streamlined workflow and pre-optimized models. This reduces the time and effort required to get LLMs up and running, enabling faster development cycles and quicker time to market.

Potential Impact of TensorRT-LLM on the AI Landscape

TensorRT-LLM has the potential to significantly impact the AI and deep learning landscape, driving innovation and accelerating the adoption of LLMs in various domains.

- Wider Accessibility of LLMs: TensorRT-LLM’s performance enhancements and reduced resource requirements make LLMs more accessible to a wider range of users and developers. This could lead to the development of new and innovative applications that leverage the power of LLMs.

- Enhanced User Experiences: Faster inference times and reduced latency enable more responsive and engaging user experiences with AI-powered applications. This could revolutionize the way we interact with AI, making it more seamless and intuitive.

- New Applications and Use Cases: The increased efficiency and accessibility of LLMs made possible by TensorRT-LLM could unlock new applications and use cases in various industries. For example, LLMs could be deployed in real-time applications like customer service chatbots, personalized recommendations, and medical diagnosis.

TensorRT-LLM

NVIDIA TensorRT-LLM is a groundbreaking software library specifically designed to accelerate the deployment and optimization of large language models (LLMs) on NVIDIA GPUs. This library leverages NVIDIA’s TensorRT inference engine, which is renowned for its high-performance capabilities, to significantly enhance the speed and efficiency of LLM execution.


TensorRT-LLM Architecture and Design

TensorRT-LLM is built upon a sophisticated architecture that combines the power of TensorRT with specialized optimizations for LLMs. It utilizes a combination of techniques to achieve optimal performance:

- TensorRT Engine Optimization: TensorRT-LLM leverages TensorRT’s engine optimization capabilities to convert LLM models into highly optimized execution graphs. This process involves fusing operations, applying kernel selection, and implementing memory layout optimization for maximum efficiency.

- Layer Fusion: TensorRT-LLM intelligently fuses multiple LLM layers into single, optimized operations, reducing the number of kernel launches and memory transfers, thereby enhancing performance.

- Kernel Selection: TensorRT-LLM intelligently selects the most appropriate kernels for each LLM operation, ensuring optimal performance based on the specific hardware and model characteristics.

- Memory Optimization: TensorRT-LLM optimizes memory allocation and management, reducing memory footprint and improving data locality for faster data access.
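The idea behind layer fusion can be illustrated with a small, CPU-only sketch. It is not TensorRT-LLM code and does not use any TensorRT API; the function names are hypothetical and chosen only for illustration. The unfused version makes two passes over the data and materializes an intermediate buffer, while the fused version computes the same result in one pass, which is the same reason a fused GPU kernel needs fewer launches and memory transfers.

```python
import math


def gelu(x: float) -> float:
    """Tanh approximation of GELU, an activation commonly used in Transformer MLPs."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))


def bias_gelu_unfused(xs, bias):
    """Two separate passes: the bias add writes a full intermediate buffer."""
    biased = [x + b for x, b in zip(xs, bias)]  # pass 1: bias add
    return [gelu(v) for v in biased]            # pass 2: activation reads the buffer back


def bias_gelu_fused(xs, bias):
    """One pass: the intermediate value never leaves the loop body."""
    return [gelu(x + b) for x, b in zip(xs, bias)]


xs = [-1.0, 0.0, 0.5, 2.0]
bias = [0.1, 0.1, 0.1, 0.1]

# Both variants perform identical arithmetic, so the results match exactly.
assert bias_gelu_fused(xs, bias) == bias_gelu_unfused(xs, bias)
print(bias_gelu_fused(xs, bias))
```

On a GPU the saving comes from avoiding the round trip of the intermediate buffer through device memory and from launching one kernel instead of two; the Python version only mirrors the structure of that change.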

Benefits of Using TensorRT-LLM

TensorRT-LLM offers a wide range of benefits for deploying and optimizing LLMs, leading to improved performance, reduced latency, and enhanced efficiency:

- Performance Acceleration: TensorRT-LLM significantly accelerates LLM inference, enabling faster response times and improved user experience. It achieves this by optimizing model execution on NVIDIA GPUs, taking advantage of their parallel processing capabilities.

- Reduced Latency: By streamlining LLM inference, TensorRT-LLM minimizes latency, allowing for near real-time responses, which is crucial for applications requiring low latency, such as conversational AI and real-time translation.

- Enhanced Efficiency: TensorRT-LLM optimizes memory usage and reduces computational overhead, leading to improved resource utilization and lower power consumption. This efficiency is particularly important for deploying LLMs on resource-constrained devices or in cloud environments.

- Simplified Deployment: TensorRT-LLM simplifies the deployment of LLMs by providing a unified framework for optimization and execution, reducing the complexity of setting up and managing LLM inference pipelines.

Performance Improvements and Efficiency Gains

TensorRT-LLM delivers significant performance improvements and efficiency gains compared to traditional LLM inference methods:

- Inference Speed: TensorRT-LLM can achieve up to 10x faster inference than CPU-based inference, which enables real-time applications and shorter response times. The actual speedup depends on the model, the GPU, and the batch size.

- Memory Footprint: TensorRT-LLM optimizes memory usage and can reduce the memory footprint of LLMs by up to 50%, which makes deployment feasible on devices with less memory.

- Power Efficiency: By optimizing computation and memory management, TensorRT-LLM reduces power consumption, which helps when deploying LLMs on mobile devices and other power-sensitive platforms.
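To put the memory figure in context, here is a back-of-the-envelope calculation. The article does not say where a reduction of up to 50% would come from; this sketch assumes, purely for illustration, that it comes from storing weights in 8-bit instead of 16-bit precision. The 7-billion-parameter model and the layer and head counts are hypothetical example values, not measurements of TensorRT-LLM.

```python
def weight_memory_gib(num_params: int, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights, in GiB."""
    return num_params * bytes_per_param / 2**30


def kv_cache_gib(layers: int, kv_heads: int, head_dim: int,
                 seq_len: int, batch: int, bytes_per_value: float) -> float:
    """Memory for the attention key/value cache, in GiB (factor 2 = keys + values)."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value / 2**30


PARAMS = 7_000_000_000  # hypothetical 7B-parameter model

fp16 = weight_memory_gib(PARAMS, 2)  # 16-bit weights: 2 bytes per parameter
int8 = weight_memory_gib(PARAMS, 1)  # 8-bit weights: 1 byte per parameter (assumed)

print(f"FP16 weights: {fp16:.2f} GiB")
print(f"INT8 weights: {int8:.2f} GiB")
print(f"Reduction:    {100 * (1 - int8 / fp16):.0f}%")

# Example KV cache: 32 layers, 32 heads of size 128, 2048 tokens, batch 1, FP16 values.
print(f"KV cache:     {kv_cache_gib(32, 32, 128, 2048, 1, 2):.2f} GiB")
```

Halving the bytes per parameter halves the weight memory, which is consistent with an "up to 50%" figure. The KV cache grows with sequence length and batch size, so it has to be budgeted separately from the weights.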

Use Cases and Applications

TensorRT-LLM opens up a vast array of possibilities across various industries by enabling efficient and powerful large language model deployment. Its ability to optimize LLM inference performance on NVIDIA GPUs unlocks new avenues for leveraging the transformative power of AI in real-world applications.

Diverse Use Cases Across Industries

TensorRT-LLM’s versatility makes it suitable for a wide range of use cases across diverse industries. The following table showcases some prominent examples:

| Industry | Use Case | Benefits |
|---|---|---|
| Healthcare | Medical Diagnosis Assistance | Faster and more accurate diagnosis through natural language processing of patient records and medical literature. |
| Finance | Fraud Detection and Risk Assessment | Enhanced fraud detection and risk assessment by analyzing large volumes of financial data and identifying patterns indicative of suspicious activity. |
| Retail | Personalized Customer Service | Providing personalized customer service through chatbots powered by LLMs that can understand customer queries and provide relevant responses. |
| Education | Personalized Learning Experiences | Creating personalized learning experiences by adapting educational content and providing tailored feedback based on student needs and progress. |
| Manufacturing | Predictive Maintenance and Quality Control | Improving predictive maintenance and quality control by analyzing sensor data and identifying potential issues before they occur. |

Natural Language Processing Tasks

TensorRT-LLM can be effectively utilized in various natural language processing tasks, including:

Text Generation

TensorRT-LLM speeds up the generation of text such as articles, stories, and summaries by accelerating inference for the underlying language model, which supplies the understanding of language patterns and structures.

Translation

By optimizing the inference of translation models, TensorRT-LLM allows for fast and accurate translation between languages, facilitating global communication and information exchange.

Question Answering

TensorRT-LLM facilitates the development of question answering systems that can understand and respond to complex queries, providing accurate and relevant information.

Real-World Scenario

Consider a scenario where a customer service chatbot is deployed in a retail setting. The chatbot utilizes TensorRT-LLM to power its natural language understanding capabilities. When a customer asks a question about a product, the chatbot uses TensorRT-LLM to process the query, understand the customer’s intent, and provide a relevant response.

This process is optimized for efficiency and speed, ensuring a seamless and satisfying customer experience.
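For a chatbot like this, the two numbers that matter most to the customer are how quickly the first word appears and how fast the rest of the answer streams in. The sketch below shows one way to measure both. The `fake_token_stream` function is a hypothetical stand-in for a real streaming backend and is not a TensorRT-LLM API; in practice it would be replaced by whatever streaming call the deployment exposes.

```python
import time
from typing import Callable, Iterator


def fake_token_stream(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM backend (not a real API)."""
    for word in ("Yes,", "that", "item", "is", "in", "stock."):
        time.sleep(0.001)  # simulated per-token compute time
        yield word


def measure_stream(stream_fn: Callable[[str], Iterator[str]], prompt: str) -> dict:
    """Consume a token stream and record time to first token and throughput."""
    start = time.perf_counter()
    first_token_s = None
    tokens = []
    for tok in stream_fn(prompt):
        if first_token_s is None:
            first_token_s = time.perf_counter() - start
        tokens.append(tok)
    total_s = time.perf_counter() - start
    return {
        "text": " ".join(tokens),
        "num_tokens": len(tokens),
        "time_to_first_token_s": first_token_s,
        "total_s": total_s,
        "tokens_per_s": len(tokens) / total_s if total_s > 0 else float("inf"),
    }


stats = measure_stream(fake_token_stream, "Is the blue jacket in stock?")
print(stats["text"])
print(f"time to first token: {stats['time_to_first_token_s'] * 1000:.1f} ms")
print(f"throughput: {stats['tokens_per_s']:.0f} tokens/s")
```

Running the same harness against an unoptimized and an optimized backend gives a like-for-like comparison of the responsiveness the customer actually experiences.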


Comparison with Existing Solutions

TensorRT-LLM, a specialized library designed for optimizing and deploying large language models (LLMs) on NVIDIA GPUs, stands out among other frameworks and libraries for its focus on performance and efficiency. It leverages NVIDIA’s TensorRT technology to accelerate inference, making it a compelling choice for real-time applications and production environments.

TensorRT-LLM’s Key Differentiators

TensorRT-LLM offers several key differentiators that set it apart from other frameworks:

- Optimized for NVIDIA GPUs: TensorRT-LLM is designed specifically for NVIDIA GPUs, leveraging their hardware capabilities to achieve significant performance gains. It optimizes the execution of LLMs on NVIDIA GPUs, resulting in faster inference speeds and lower latency.

- High Throughput and Low Latency: By leveraging TensorRT’s capabilities, TensorRT-LLM can achieve high throughput and low latency, enabling real-time applications like conversational AI and machine translation. This makes it suitable for scenarios where speed and responsiveness are critical.

- Support for Diverse LLM Architectures: TensorRT-LLM supports various LLM architectures, including Transformer-based models like BERT, GPT-3, and others. This flexibility allows users to optimize and deploy a wide range of LLMs.

- Ease of Use and Integration: TensorRT-LLM is designed to be user-friendly and integrates seamlessly with popular deep learning frameworks like PyTorch and TensorFlow. This simplifies the process of deploying LLMs and reduces development time.

TensorRT-LLM’s Potential Limitations

While TensorRT-LLM offers significant advantages, it also has some potential limitations:

- Dependence on NVIDIA GPUs: TensorRT-LLM’s performance benefits are tied to NVIDIA GPUs. Users without access to NVIDIA GPUs might not be able to fully leverage its capabilities. The availability of NVIDIA GPUs can be a factor for some users.

- Limited Support for Other Hardware: TensorRT-LLM’s primary focus is on NVIDIA GPUs, and support for other hardware platforms might be limited. This restricts its applicability to scenarios where other hardware is preferred or necessary.

- Potential for Compatibility Issues: As a specialized library, TensorRT-LLM might encounter compatibility issues with certain LLM architectures or frameworks. Users need to ensure that their LLMs and frameworks are compatible with TensorRT-LLM to avoid potential problems.
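Because of the hardware dependence described above, a deployment script may want to check for an NVIDIA GPU before attempting anything else. The following is a minimal preflight sketch, assuming the standard `nvidia-smi` command-line tool that ships with the NVIDIA driver is the way GPUs are detected. The function name is hypothetical, and the check says nothing about whether a particular GPU or driver version is supported by TensorRT-LLM.

```python
import shutil
import subprocess


def nvidia_gpu_names() -> list[str]:
    """Return GPU names reported by nvidia-smi, or an empty list if none are found."""
    if shutil.which("nvidia-smi") is None:
        return []  # driver tools not installed or not on PATH
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name", "--format=csv,noheader"],
            capture_output=True, text=True, timeout=10, check=True,
        )
    except (subprocess.SubprocessError, OSError):
        return []  # tool present but failed, e.g. no usable driver
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


gpus = nvidia_gpu_names()
if gpus:
    print("NVIDIA GPUs detected:", ", ".join(gpus))
else:
    print("No NVIDIA GPU detected; GPU-accelerated inference is unavailable here.")
```

Failing early with a clear message is usually easier to debug than an import or build error that surfaces later in the pipeline.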

Future Implications and Potential

The introduction of TensorRT-LLM marks a significant milestone in the field of artificial intelligence, opening up a world of possibilities for faster, more efficient, and accessible large language models. The potential impact of TensorRT-LLM extends far beyond its immediate use cases, promising to revolutionize various industries and shape the future of AI.

Accelerated AI Deployment and Democratization

TensorRT-LLM’s ability to accelerate inference could make it more practical to deploy large language models on edge devices and in resource-constrained environments. That would open the door to applications that were previously hard to build, such as real-time natural language processing on mobile devices, faster customer service chatbots, personalized language assistants, and interactive AI experiences.

The democratization of AI powered by TensorRT-LLM empowers developers and researchers to explore and experiment with large language models without requiring extensive computational resources, fostering innovation and wider adoption of AI technologies.

Enhanced User Experiences and Personalized Interactions

TensorRT-LLM’s optimized performance allows for the creation of more sophisticated and engaging AI-powered applications that can understand and respond to user input in a more natural and human-like way. This leads to enhanced user experiences, enabling personalized recommendations, intelligent search, and conversational AI agents that can provide tailored assistance and support.

New Applications and Opportunities

The speed and efficiency of TensorRT-LLM unlock new possibilities for AI applications in various industries. For instance, in healthcare, it can be used to accelerate medical image analysis, enabling faster diagnosis and treatment. In finance, TensorRT-LLM can power real-time fraud detection and risk assessment systems.

In education, it can personalize learning experiences and provide customized support to students. The possibilities are vast and continue to expand as researchers and developers explore the potential of TensorRT-LLM.