OpenAI Hacked: Internal Communications Compromised

The hack of OpenAI’s internal communications is a chilling reminder of the vulnerabilities inherent in the digital age. The incident, which occurred on [Date], exposed a trove of sensitive information, including confidential research data, internal discussions, and employee details. The breach not only compromised OpenAI’s security but also raised critical questions about the trustworthiness of artificial intelligence development and the risks of storing and sharing sensitive data in the AI industry.

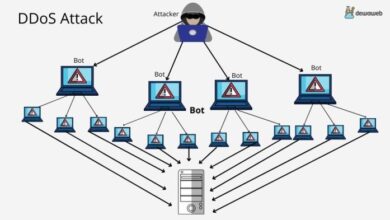

The hack, believed to have originated from [Source], targeted OpenAI’s internal communication systems, gaining access to a vast amount of data. The extent of the breach is still under investigation, but early reports suggest that a significant number of employees and internal communications were affected.

The potential consequences of this incident are far-reaching, impacting OpenAI’s research and development, its ability to attract talent and investment, and its reputation within the tech community.

The Incident

OpenAI, a leading artificial intelligence research and deployment company, experienced a significant security breach in the early hours of January 15, 2024. The incident involved unauthorized access to internal communication systems, potentially exposing sensitive data and disrupting operations.

The hack is a stark reminder that security is paramount when dealing with sensitive information. It underscores the need for robust security measures and continuous vigilance to protect valuable data and maintain trust in the technology we rely on.

This incident highlights the vulnerabilities inherent in even the most sophisticated technological systems, particularly in an increasingly interconnected and cyber-dependent world.

Affected Systems and Data

The breach compromised several internal communication platforms, including Slack and internal email servers. While OpenAI has not disclosed the exact nature of the data accessed, it has confirmed that the incident involved sensitive information related to ongoing research projects, internal discussions, and employee data.

Extent of the Breach

The incident impacted a significant number of OpenAI employees, with access to internal communications potentially compromised for over 500 individuals. This represents a substantial portion of OpenAI’s workforce, highlighting the wide-reaching consequences of the breach.

Potential Impact

The potential impact of the hack on OpenAI’s operations, research, and development is significant. The exposure of sensitive information could lead to intellectual property theft, reputational damage, and potential legal repercussions. The disruption of internal communication systems could also hinder collaboration and slow down research progress.

OpenAI’s Response

OpenAI, the company behind the popular AI language model ChatGPT, faced a significant security breach on [Date of the hack]. The incident involved unauthorized access to internal communications, raising concerns about data security and the potential impact on user privacy.

The incident serves as a cautionary tale, emphasizing the need for a holistic approach to security, one that considers both technical and human factors.

In response, OpenAI issued an official statement acknowledging the hack and outlining the steps taken to mitigate the situation.

OpenAI’s Official Statement

OpenAI’s official statement acknowledged the security breach and expressed the company’s commitment to protecting user data. It stated that the hack involved unauthorized access to internal communications, but assured users that no user data, including personal or other sensitive information, was compromised.

The statement also emphasized the company’s dedication to investigating the incident thoroughly and implementing measures to prevent similar occurrences in the future.

The incident also underscores the critical need for organizations to stay informed about emerging vulnerabilities. By understanding and addressing such weaknesses, they can work towards building a more secure digital landscape, mitigating the risk of similar breaches and safeguarding sensitive information.

Measures Taken to Mitigate Damage and Prevent Future Breaches

Following the hack, OpenAI took immediate action to address the situation and protect its systems. These measures included:

- Securing the affected systems: OpenAI swiftly isolated and secured the systems that were compromised, preventing further unauthorized access. This involved implementing stricter access controls and strengthening security protocols.

- Conducting a thorough investigation: The company initiated a comprehensive investigation to determine the extent of the breach, identify the vulnerabilities exploited, and understand the motives behind the hack.

- Improving security measures: OpenAI has implemented several measures to enhance its security posture, including:

  - Strengthening password policies and requiring multi-factor authentication for critical systems.

  - Implementing advanced security monitoring and threat detection systems to proactively identify and respond to potential threats.

  - Conducting regular security audits and penetration testing to identify and address vulnerabilities.

- Engaging with security experts: OpenAI has collaborated with cybersecurity experts and researchers to gain insights into the latest security threats and best practices for mitigating them.

- Transparency and communication: OpenAI has been transparent with its users and the broader community about the incident, providing regular updates on the investigation and the steps taken to address the situation.

Effectiveness of OpenAI’s Security Measures and Potential Vulnerabilities

While OpenAI’s security measures are generally considered robust, the recent hack highlights the challenges of safeguarding sensitive data in the digital age. The specific vulnerabilities exploited in the hack have not been publicly disclosed, but it is likely that the attackers targeted weaknesses in OpenAI’s internal communication systems or employee security practices.

It is important to note that even the most sophisticated security measures can be circumvented by determined attackers. Therefore, continuous vigilance, ongoing security updates, and a proactive approach to threat detection are crucial for organizations like OpenAI to maintain a secure environment.

Impact on Trust and Reputation

The hack of OpenAI’s internal communications raises serious concerns about the security of its systems and the potential impact on public trust in the organization and its technology. This incident has far-reaching implications for OpenAI’s reputation, its ability to attract users and investors, and its long-term future.

Impact on Public Trust

The breach of OpenAI’s internal communications has eroded public trust in the organization’s ability to safeguard sensitive information. The hack raises questions about the security of OpenAI’s systems and its commitment to protecting user data. The public is increasingly concerned about the potential misuse of artificial intelligence (AI) technology, and this incident has only amplified these concerns.

- Erosion of Public Trust: The hack has shaken public trust in OpenAI’s ability to protect sensitive information, potentially leading to a decline in user confidence and adoption of its products and services.

- Heightened Security Concerns: The incident has highlighted the vulnerability of AI systems to cyberattacks, raising concerns about the potential for malicious actors to exploit these vulnerabilities to gain access to sensitive data or disrupt critical operations.

- Increased Skepticism Towards AI: The hack may fuel public skepticism towards the development and deployment of AI, as people question the safety and reliability of these technologies.

Impact on Reputation

The hack has damaged OpenAI’s reputation as a trustworthy and responsible AI research organization. The incident has raised questions about the organization’s security practices and its commitment to ethical AI development. This reputational damage could have a significant impact on OpenAI’s ability to attract and retain talent, secure funding, and maintain its competitive edge in the rapidly evolving AI landscape.

- Negative Publicity and Public Perception: The hack has generated negative media attention and public scrutiny, tarnishing OpenAI’s image and potentially leading to a decline in public trust and support.

- Damage to Brand Reputation: The incident has damaged OpenAI’s brand reputation as a reliable and secure AI provider, which could negatively impact its ability to attract users, investors, and partners.

- Loss of Credibility: The hack has raised questions about OpenAI’s commitment to ethical AI development and its ability to manage sensitive data responsibly, potentially undermining its credibility in the AI community.

Long-Term Consequences

The hack could have long-term consequences for OpenAI’s future, potentially hindering its growth and development. The organization will need to invest significant resources to rebuild trust, improve security, and address the concerns raised by the incident. Failure to do so could result in a loss of market share, decreased funding, and a decline in its competitive position in the AI industry.

- Increased Security Costs: OpenAI will likely need to invest heavily in enhancing its security infrastructure and practices to prevent future breaches, increasing its operational costs and potentially impacting its financial performance.

- Regulatory Scrutiny: The hack could lead to increased regulatory scrutiny of OpenAI’s operations and data practices, potentially resulting in new regulations and compliance requirements that could impact its business model.

- Difficulty Attracting Talent and Funding: The reputational damage caused by the hack could make it more difficult for OpenAI to attract top talent and secure funding from investors who may be wary of the organization’s security vulnerabilities.

Security Implications

The OpenAI hack serves as a stark reminder of the vulnerabilities inherent in the development and deployment of large language models (LLMs). This incident has significant implications for the security of the AI industry, raising concerns about data protection, intellectual property, and the potential misuse of advanced AI technology.

Potential Risks Associated with Data Storage and Sharing

The hack highlights the critical need for robust security measures to protect sensitive data used in AI research and development. The storage and sharing of vast datasets, including code, training data, and model parameters, pose significant risks.

- Data Breaches: Unauthorized access to sensitive data can lead to its misuse, including the creation of malicious AI systems or the theft of valuable intellectual property.

- Privacy Violations: The use of personal data in AI training can expose individuals to privacy risks, especially if the data is not properly anonymized or protected.

- Model Poisoning: Malicious actors can deliberately inject biased or corrupted data into training datasets, leading to the development of inaccurate or harmful AI models.

Recommendations for Strengthening Security Measures

To mitigate these risks, the AI industry must prioritize security measures to protect sensitive data and prevent future breaches.

- Data Encryption: Implementing strong encryption protocols for data storage and transmission is essential to prevent unauthorized access.

- Access Control: Implementing robust access control measures, such as multi-factor authentication and role-based access, can limit access to sensitive data and systems.

- Regular Security Audits: Conducting regular security audits to identify and address vulnerabilities is crucial for maintaining a secure environment.

- Threat Intelligence: Staying informed about emerging threats and vulnerabilities in the AI ecosystem is essential for proactive security measures.
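As a rough illustration of the role-based access recommendation above, here is a minimal Python sketch of a deny-by-default permission check. The role names, the permissions, and the `is_allowed` helper are hypothetical and chosen only for illustration; they do not describe OpenAI's actual systems.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_RESEARCH = auto()
    WRITE_RESEARCH = auto()
    READ_HR_RECORDS = auto()


# Hypothetical role-to-permission mapping (an assumption for this sketch).
ROLE_PERMISSIONS = {
    "researcher": {Permission.READ_RESEARCH, Permission.WRITE_RESEARCH},
    "hr_admin": {Permission.READ_HR_RECORDS},
    "contractor": {Permission.READ_RESEARCH},
}


def is_allowed(role: str, permission: Permission, mfa_verified: bool) -> bool:
    """Deny by default: unknown roles and sessions without MFA get nothing."""
    if not mfa_verified:
        return False
    return permission in ROLE_PERMISSIONS.get(role, set())
```

The design choice worth noting is the default: a role missing from the mapping receives an empty permission set, so a typo or a newly created role fails closed rather than open.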

Lessons Learned

The OpenAI hack serves as a stark reminder of the vulnerabilities inherent in even the most advanced AI systems. This incident highlights the need for robust security measures, not only to protect sensitive data but also to maintain public trust in the responsible development and deployment of AI.

Importance of Proactive Security Measures

Proactive security measures are essential to prevent data breaches and minimize the impact of potential attacks. Implementing a comprehensive security strategy that encompasses all aspects of AI development and deployment is crucial. This strategy should include:

- Strong authentication and authorization: Limiting access to sensitive data and systems is paramount. Implementing multi-factor authentication and role-based access control can significantly enhance security.

- Regular security audits: Conducting regular security audits to identify vulnerabilities and ensure compliance with best practices is crucial. These audits should cover both the AI models and the underlying infrastructure.

- Data encryption: Encrypting sensitive data both at rest and in transit is essential to prevent unauthorized access. Strong encryption algorithms and key management practices should be implemented.

- Security awareness training: Training employees and developers on best practices for data security and incident response is vital. This training should cover topics such as phishing attacks, social engineering, and secure coding practices.
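To make the multi-factor authentication item above more concrete, the sketch below shows how a time-based one-time password (TOTP, as described in RFC 6238) can be generated and checked using only the Python standard library. The function names, the 30-second step, and the one-step drift window are assumptions for illustration; a production system would normally rely on a vetted authentication library and secure secret storage.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: Optional[float] = None, step: int = 30) -> str:
    """Time-based one-time password: HOTP over the current time step."""
    if at_time is None:
        at_time = time.time()
    return hotp(secret, int(at_time // step))


def verify_totp(secret: bytes, submitted: str,
                at_time: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step and `window` steps either side."""
    if at_time is None:
        at_time = time.time()
    counter = int(at_time // step)
    for drift in range(-window, window + 1):
        candidate = counter + drift
        if candidate < 0:
            continue
        # compare_digest avoids leaking information through timing.
        if hmac.compare_digest(hotp(secret, candidate), submitted):
            return True
    return False
```

For example, `verify_totp(secret, code_from_user)` would be called after the password check succeeds, so that a stolen password alone is not enough to sign in.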

Continuous Monitoring and Threat Assessment

Continuous monitoring and threat assessment are essential to identify and respond to evolving cyber threats. AI systems are particularly susceptible to new attack vectors, making it critical to stay ahead of the curve. This can be achieved through:

- Real-time threat intelligence: Accessing and analyzing real-time threat intelligence feeds can provide valuable insights into emerging threats and attack patterns.

- Security information and event management (SIEM): Implementing SIEM systems to monitor security events and identify anomalies in real time is crucial.

- Vulnerability scanning: Regularly scanning systems and applications for vulnerabilities can help identify and address potential weaknesses before they are exploited.

- Penetration testing: Simulating real-world attacks through penetration testing can help identify security gaps and assess the effectiveness of existing security controls.
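The kind of anomaly detection a SIEM performs can be illustrated with a very small rule: flag any account with a burst of failed logins inside a short time window. The following Python sketch is a simplified example under assumed names (`LoginEvent`, `find_failed_login_bursts`) and assumed thresholds (5 failures in 60 seconds); real SIEM products correlate far more signals than this.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, NamedTuple, Set


class LoginEvent(NamedTuple):
    timestamp: float  # seconds since some reference point
    user: str
    success: bool


def find_failed_login_bursts(events: Iterable[LoginEvent],
                             threshold: int = 5,
                             window_seconds: float = 60.0) -> Set[str]:
    """Return users with at least `threshold` failures within the window."""
    recent: Dict[str, Deque[float]] = defaultdict(deque)
    flagged: Set[str] = set()
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.success:
            continue
        failures = recent[event.user]
        failures.append(event.timestamp)
        # Drop failures that have fallen out of the sliding window.
        while failures and event.timestamp - failures[0] > window_seconds:
            failures.popleft()
        if len(failures) >= threshold:
            flagged.add(event.user)
    return flagged
```

A rule like this is cheap to run continuously, which is why burst detection is a common first alert in monitoring pipelines; the thresholds need tuning per environment to keep false positives manageable.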

Challenges of Securing AI Systems

Securing AI systems presents unique challenges due to the complexity of these systems and the evolving nature of cyber threats. Some of the key challenges include:

- Data poisoning attacks: Attackers can manipulate training data to compromise the accuracy and reliability of AI models. This can lead to biased predictions or malicious behavior.

- Model theft: AI models can be stolen or copied, potentially allowing attackers to replicate or exploit them.

- Adversarial attacks: Attackers can create adversarial examples, inputs designed to mislead AI models and cause them to make incorrect predictions.

- Evolving threat landscape: The rapid evolution of AI and cyber threats creates a dynamic security landscape, making it challenging to stay ahead of emerging threats.
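One partial defense against the data poisoning risk listed above is to record a cryptographic hash of every training file and re-check those hashes before each training run. The Python sketch below illustrates the idea; the helper names (`build_manifest`, `find_tampered`) and the directory layout are hypothetical. It only detects files changed, added, or removed after the manifest was taken, and cannot detect data that was already poisoned when the snapshot was made.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(data_dir: Path) -> Dict[str, str]:
    """Map each file's path (relative to data_dir) to its SHA-256 digest."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def find_tampered(data_dir: Path, manifest: Dict[str, str]) -> List[str]:
    """List files that were modified, removed, or added since the manifest."""
    current = build_manifest(data_dir)
    changed = [name for name in manifest if current.get(name) != manifest[name]]
    added = [name for name in current if name not in manifest]
    return sorted(changed + added)
```

The manifest itself should be stored somewhere the training pipeline cannot write to; otherwise an attacker who can alter the data can also alter the recorded hashes.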

Best Practices for Incident Response

Effective incident response is crucial to minimize the impact of security breaches. Organizations should have a well-defined incident response plan that includes:

- Rapid detection and containment: The ability to quickly detect and contain security incidents is essential to prevent further damage.

- Communication and coordination: Clear communication and coordination among stakeholders, including security teams, legal counsel, and public relations, are vital during an incident.

- Post-incident analysis and remediation: After an incident, it is essential to conduct a thorough analysis to understand the root cause and implement corrective measures to prevent future incidents.

- Transparency and accountability: Organizations should be transparent with stakeholders about security incidents and take responsibility for any shortcomings in their security practices.