Nvidia Equinix: Powering Generative AI in Data Centers

Data centers that combine Nvidia’s generative AI stack with Equinix’s infrastructure provide a platform for developing and deploying generative AI models. The collaboration pairs Nvidia’s GPUs and AI software with Equinix’s data center and interconnection infrastructure, giving high-performance AI workloads a single environment in which to run.

Nvidia’s hardware and software, including the CUDA platform and the cuDNN library of deep learning primitives, accelerate AI training and inference. Equinix’s global network of data centers contributes connectivity, bandwidth, and physical security, so generative AI applications can be reached reliably and scaled as demand grows.

Together, they offer a comprehensive solution for businesses seeking to harness the power of generative AI to drive innovation and growth.

Nvidia’s Role in Generative AI

Nvidia has emerged as a pivotal player in the rapid advancement of generative AI, providing the essential computational muscle and software tools that power the development and deployment of these transformative technologies.

Nvidia’s GPUs: The Powerhouse of Generative AI

Nvidia’s Graphics Processing Units (GPUs) are specifically designed to handle massive parallel computations, making them ideally suited for the complex and demanding tasks involved in training and running generative AI models. The architecture of these GPUs, with their thousands of cores, allows them to process vast amounts of data simultaneously, accelerating the training process significantly.

This parallel processing capability is crucial for generative AI models, which often require weeks or even months of training on massive datasets.
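"Weeks or even months" can be put in numbers. A common rule of thumb estimates the training compute of a dense transformer at roughly 6 × parameters × training tokens floating-point operations. The sketch below applies that rule to a hypothetical run. The model size, token count, GPU count, assumed per-GPU peak throughput, and utilization are all illustrative inputs. `training_days` is a helper written for this example and is not part of any Nvidia tool.

```python
SECONDS_PER_DAY = 86_400


def training_days(params: float, tokens: float, num_gpus: int,
                  peak_flops_per_gpu: float, utilization: float) -> float:
    """Estimate wall-clock training time in days using the ~6 * N * D FLOPs rule of thumb."""
    total_flops = 6 * params * tokens  # approximate forward + backward cost for a dense transformer
    sustained_flops = num_gpus * peak_flops_per_gpu * utilization  # cluster FLOP/s actually achieved
    return total_flops / sustained_flops / SECONDS_PER_DAY


# Hypothetical run: 70B parameters, 2T tokens, 1,024 GPUs,
# an assumed 1e15 FLOP/s peak per GPU and 40% sustained utilization.
print(f"{training_days(70e9, 2e12, 1024, 1e15, 0.40):.1f} days")  # → 23.7 days
```

Training time scales inversely with GPU count and utilization. That is why parallel hardware and an efficient interconnect between GPUs matter so much.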

Equinix’s Data Center Infrastructure

Equinix is a leading global provider of data center and interconnection services. Its data centers are strategically located in major business hubs around the world, offering a robust infrastructure for hosting demanding workloads like generative AI. For AI applications, Equinix’s data centers combine space and power for high-performance computing, advanced connectivity, and global reach.

Equinix Data Center Features for Generative AI

Equinix’s data centers offer several key features that make them suitable for hosting generative AI workloads:

- High-Performance Computing (HPC): Equinix data centers provide access to a wide range of high-performance computing resources, including powerful servers, GPUs, and specialized AI accelerators. These resources are essential for training and deploying large-scale generative AI models, which require significant computational power.

- Scalability and Flexibility: Equinix’s data centers are designed to be scalable and flexible, allowing organizations to easily adjust their computing resources based on their needs. This is crucial for generative AI workloads, which can vary significantly in terms of computational demands.

- Data Security and Reliability: Equinix data centers prioritize data security and reliability, employing robust physical security measures, advanced access control systems, and comprehensive monitoring to protect sensitive AI data. These measures are critical for ensuring the integrity and confidentiality of AI models and their training data.

- Energy Efficiency: Equinix data centers are designed with energy efficiency in mind, utilizing advanced cooling technologies and power management systems to minimize energy consumption. This is essential for reducing operational costs and minimizing environmental impact, especially for energy-intensive generative AI workloads.
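The industry-standard metric for data center energy efficiency is Power Usage Effectiveness (PUE). It is defined as total facility energy divided by the energy delivered to IT equipment, so a value closer to 1.0 means less overhead for cooling and power conversion. The sketch below computes it. The power figures are hypothetical and do not describe any specific Equinix facility.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment load must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Non-IT power (cooling, power conversion, lighting) implied by a given PUE."""
    return it_equipment_kw * (pue_value - 1.0)


# Hypothetical GPU hall: 1,000 kW of IT load, 1,300 kW measured at the utility meter.
print(pue(1300, 1000))                           # → 1.3
print(f"{overhead_kw(1000, 1.3):.0f} kW overhead")  # → 300 kW overhead
```

For dense GPU deployments, every 0.1 of PUE translates directly into extra power that must be bought and cooled. This is why cooling efficiency matters for energy-intensive generative AI workloads.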

Network Connectivity and Bandwidth

Equinix’s data centers are renowned for their exceptional network connectivity and bandwidth capabilities, which are crucial for AI workloads.

- High-Speed Interconnections: Equinix offers high-speed interconnections between its data centers, allowing for seamless data transfer and collaboration between AI models and other systems. This is essential for enabling distributed AI training and inference, where models can be trained and deployed across multiple data centers.

- Diverse Network Providers: Equinix has partnerships with numerous network providers, offering a wide range of connectivity options to meet the specific needs of AI applications. This diversity ensures high availability and redundancy, minimizing the risk of network outages.

- Low Latency: Equinix’s data centers are strategically located in major business hubs, minimizing latency for data transmission and processing. This is critical for real-time AI applications, such as chatbots and recommendation systems, where low latency is essential for delivering a seamless user experience.
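Two back-of-envelope numbers show why location and bandwidth matter for distributed AI work: the minimum round-trip time imposed by distance, and the time needed to move a dataset over a link. The sketch below assumes that light travels through optical fiber at roughly 200,000 km/s, about two-thirds of its speed in vacuum. Real fiber routes are longer than straight-line distance and add switching delay, so the latency figure is a lower bound. The distance, dataset size, link speed, and 80% link efficiency are hypothetical.

```python
FIBER_KM_PER_MS = 200.0  # ~200,000 km/s in fiber, expressed per millisecond


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip propagation delay over fiber (ignores routing and queuing)."""
    return 2 * distance_km / FIBER_KM_PER_MS


def transfer_hours(size_tb: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Hours to move a dataset, assuming a fixed fraction of the link's line rate is achieved."""
    bits = size_tb * 8e12  # decimal terabytes to bits
    return bits / (link_gbps * 1e9 * efficiency) / 3600


# Hypothetical: two metros ~1,000 km apart; 100 TB of training data over a 100 Gbps link.
print(f"{min_rtt_ms(1000):.1f} ms minimum RTT")  # → 10.0 ms minimum RTT
print(f"{transfer_hours(100, 100):.2f} hours")   # → 2.78 hours
```

No amount of bandwidth removes the propagation delay. Latency-sensitive inference therefore benefits from running near users, while bulk data movement benefits mostly from faster links.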

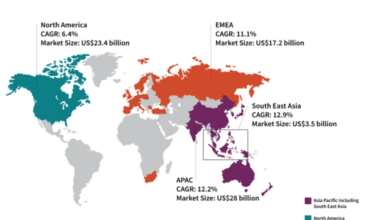

Global Presence for AI Deployment and Access

Equinix’s global presence is a significant advantage for deploying and accessing AI applications.

- Strategic Locations: Equinix has data centers in over 60 cities across the globe, strategically located in major business hubs and technology centers. This global footprint enables organizations to deploy AI applications closer to their users, reducing latency and improving performance.

- Data Sovereignty: Because Equinix operates facilities in many jurisdictions, organizations can keep data and models within a given country or region. This helps them comply with local data sovereignty regulations and regional data privacy laws and avoid potential legal issues.

- Interconnection Ecosystem: Equinix’s data centers are home to a vast interconnection ecosystem, connecting businesses, cloud providers, and other technology partners. This ecosystem facilitates collaboration and data sharing, enabling organizations to access and leverage AI resources and expertise from around the world.
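Choosing where to deploy combines two of the points above: first filter sites by where the data is allowed to live, then pick the one closest to users. The sketch below does this with great-circle (haversine) distance as a stand-in for latency. The site catalogue, its approximate coordinates, and the jurisdiction labels are hypothetical. This is not an Equinix API or inventory, and `pick_site` is an illustrative helper.

```python
import math

# Hypothetical site catalogue: (latitude, longitude, jurisdiction). Not real inventory data.
SITES = {
    "London": (51.51, -0.13, "UK"),
    "Frankfurt": (50.11, 8.68, "EU"),
    "New York": (40.71, -74.01, "US"),
    "Singapore": (1.35, 103.82, "SG"),
}
EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def pick_site(user_lat: float, user_lon: float, allowed_jurisdictions: set) -> str:
    """Closest site whose jurisdiction satisfies the data-residency constraint."""
    candidates = [
        (haversine_km(user_lat, user_lon, lat, lon), name)
        for name, (lat, lon, region) in SITES.items()
        if region in allowed_jurisdictions  # residency filter applied before distance
    ]
    if not candidates:
        raise LookupError("no site satisfies the residency constraint")
    return min(candidates)[1]


# A user in Paris whose data must stay in the EU, and the same user if the UK is also allowed.
print(pick_site(48.86, 2.35, {"EU"}))        # → Frankfurt
print(pick_site(48.86, 2.35, {"EU", "UK"}))  # → London
```

Applying the residency filter before ranking by distance means a nearer site in the wrong jurisdiction is never chosen. In practice, measured round-trip times would replace straight-line distance.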

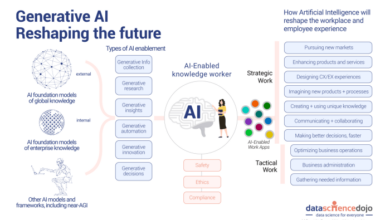

Generative AI Stack

Generative AI is a powerful technology with the potential to revolutionize many industries. To understand how generative AI works, it’s important to understand the components that make up a typical generative AI stack. The generative AI stack consists of hardware, software, and data.

These components work together to enable the creation of new content, including text, images, audio, and video.

Hardware

The hardware component of a generative AI stack includes the computing resources necessary to train and run generative AI models. These resources can range from high-performance computing clusters to specialized AI accelerators, such as GPUs and TPUs.

The choice of hardware depends on the size and complexity of the generative AI model being used, as well as the volume of data being processed.
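One concrete way model size drives the hardware choice is memory. The sketch below estimates how many GPUs are needed just to hold a model's state. It uses two common rules of thumb, both assumptions here. Serving 16-bit weights needs about 2 bytes per parameter. Mixed-precision training with the Adam optimizer needs about 16 bytes per parameter: 16-bit weights and gradients plus 32-bit master weights and two optimizer moments. Activations, KV caches, and framework overhead are ignored, so real requirements are higher. The 80 GB figure matches the 80 GB variants of the A100 and H100.

```python
import math

# Rough bytes of model state per parameter (rules of thumb; excludes activations and caches).
BYTES_PER_PARAM = {"inference_fp16": 2, "training_mixed_adam": 16}


def gpus_needed(params: float, mode: str, gpu_mem_gb: float = 80.0) -> int:
    """Minimum number of GPUs whose combined memory can hold the model state."""
    total_gb = params * BYTES_PER_PARAM[mode] / 1e9
    return math.ceil(total_gb / gpu_mem_gb)


# Hypothetical 70-billion-parameter model on 80 GB GPUs.
print(gpus_needed(70e9, "inference_fp16"))       # 140 GB of weights -> 2
print(gpus_needed(70e9, "training_mixed_adam"))  # 1,120 GB of state -> 14
```

Training the same model needs roughly eight times the memory of serving it. This is one reason training typically runs on multi-GPU systems while inference can often use smaller configurations.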

Software

The software component of a generative AI stack includes the tools and frameworks used to develop, train, and deploy generative AI models. These tools include:

- Deep learning frameworks: These frameworks provide the building blocks for creating and training deep learning models, which are the foundation of most generative AI models. Popular deep learning frameworks include TensorFlow, PyTorch, and Keras.

- Generative model architectures: Libraries built on these frameworks implement several families of generative models, including:

  - Generative Adversarial Networks (GANs): GANs train a generator network against a discriminator network to produce realistic data, most notably images. Examples of GANs include:

    - StyleGAN: This GAN is used to generate high-quality images, such as photorealistic portraits.

    - BigGAN: This GAN is known for generating images with high resolution and diversity.

  - Variational Autoencoders (VAEs): VAEs are another type of generative model that learn the underlying distribution of the data. VAEs are often used for tasks such as image generation and anomaly detection.

  - Diffusion models: Diffusion models are a newer type of generative model that learn to reverse a gradual noising process, and they have produced high-quality images. Examples of diffusion models include:

    - DALL-E 2: This model can generate images from text descriptions.

    - Stable Diffusion: This open-source diffusion model can generate images from text prompts.

  - Transformer models: Transformer models are a type of neural network particularly well suited to processing sequential data, such as text. They are used in many generative AI applications, including text generation, machine translation, and chatbot development.

- Model training tools: These tools help with training generative AI models, including tools for data preprocessing, model optimization, and performance evaluation.

- Model deployment tools: These tools help with deploying trained generative AI models into production environments, allowing them to be used for real-world applications.
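Diffusion models learn to undo a fixed noising process. That forward process has a closed form. With $\bar\alpha_t = \prod_{s \le t}(1-\beta_s)$, a noisy sample is $x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$, where $\epsilon$ is standard Gaussian noise. The sketch below implements only this forward step on a toy list of values, using the linear $\beta$ schedule (1e-4 to 0.02 over 1,000 steps) from the original DDPM paper. It omits the learned denoising network entirely and is not how DALL-E 2 or Stable Diffusion are implemented in detail.

```python
import itertools
import math
import operator
import random

T = 1000
# Linear beta (noise variance) schedule from 1e-4 to 0.02, as in the original DDPM paper.
BETAS = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
# alpha_bar_t = prod_{s<=t} (1 - beta_s): how much of the original signal survives at step t.
ALPHA_BARS = list(itertools.accumulate((1.0 - b for b in BETAS), operator.mul))


def noisy_sample(x0, t, rng):
    """Draw x_t ~ q(x_t | x_0) in closed form instead of applying t separate noising steps."""
    a_bar = ALPHA_BARS[t]
    return [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * rng.gauss(0.0, 1.0) for x in x0]


rng = random.Random(0)
x0 = [1.0, -1.0, 0.5, 0.0]  # hypothetical normalized pixel values
print(noisy_sample(x0, 10, rng))   # still close to x0
print(noisy_sample(x0, 999, rng))  # close to pure Gaussian noise
```

During training, a network is taught to predict the added noise $\epsilon$ from $x_t$ and $t$. Generation then runs the process in reverse, starting from pure noise.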

Data

Data is the fuel for generative AI models. The quality and quantity of data used to train a generative AI model significantly impact the model’s performance and capabilities. Generative AI models require large amounts of data to learn the underlying patterns and relationships in the data.

The type of data used depends on the specific application. For example, a generative AI model used for image generation would require a large dataset of images, while a model used for text generation would require a large dataset of text.
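Data quality work often starts with removing duplicates, since repeated documents waste compute and can encourage memorization. The sketch below shows a minimal exact-deduplication pass for a text corpus. It normalizes each document (Unicode NFKC, lowercase, collapsed whitespace), hashes it with SHA-256, and keeps the first occurrence. Production pipelines typically add near-duplicate detection and quality filtering. The normalization rules and sample corpus here are illustrative choices.

```python
import hashlib
import unicodedata


def normalize(text: str) -> str:
    """Unicode-normalize, lowercase, and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", text).lower()
    return " ".join(text.split())


def dedupe(docs):
    """Return docs with exact duplicates (after normalization) removed, keeping first occurrences."""
    seen = set()
    kept = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            kept.append(doc)
    return kept


corpus = ["GPUs train models.", "gpus   train models.", "Data is the fuel."]
print(dedupe(corpus))  # → ['GPUs train models.', 'Data is the fuel.']
```

Hashing rather than storing full normalized texts keeps memory use proportional to the number of unique documents, not their total size.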

Integration of Nvidia and Equinix

The partnership between Nvidia and Equinix is a powerful force in the generative AI landscape. It brings together Nvidia’s leading-edge AI hardware and software with Equinix’s global data center infrastructure, creating a robust ecosystem for developing and deploying generative AI applications.

This collaboration empowers businesses to access the computational power and connectivity they need to harness the potential of generative AI.

Examples of Nvidia Technology Deployment in Equinix Data Centers

Nvidia’s technology is widely deployed within Equinix data centers, enabling businesses to leverage the power of generative AI. Here are some key examples:

- Nvidia DGX Systems:Equinix offers access to Nvidia DGX systems, powerful AI supercomputers designed for training and deploying large language models. These systems provide the necessary computational horsepower to handle the demanding workloads associated with generative AI.

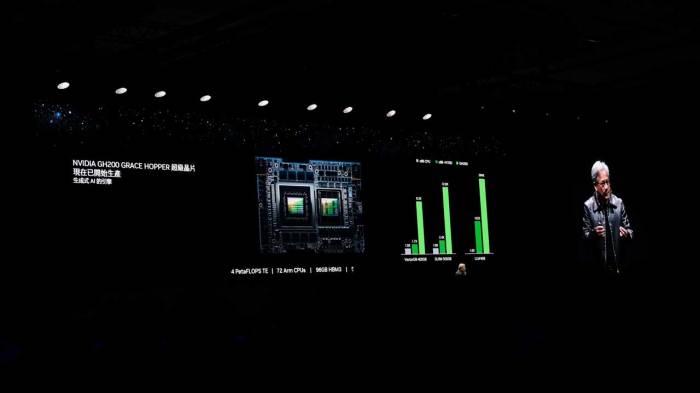

- Nvidia GPUs:Equinix data centers are equipped with a wide range of Nvidia GPUs, including the A100 and H100, which are optimized for AI workloads. These GPUs provide the parallel processing capabilities required for training and inference in generative AI applications.

- Nvidia AI Enterprise:Equinix offers access to Nvidia AI Enterprise, a comprehensive software suite that provides tools for building, deploying, and managing generative AI applications. This suite includes frameworks, libraries, and tools for optimizing AI performance and scalability.

Benefits of a Combined Nvidia-Equinix Solution

The combination of Nvidia’s AI technology and Equinix’s data center infrastructure offers significant benefits for businesses seeking to develop and deploy generative AI solutions.

- High Performance Computing: Nvidia’s DGX systems and GPUs provide the necessary computational power to train and deploy large language models, enabling businesses to achieve high performance and efficiency in their generative AI applications.

- Global Reach and Connectivity: Equinix’s global data center network provides businesses with access to a vast ecosystem of partners, customers, and cloud providers. This enables businesses to connect their generative AI applications to the data and resources they need, regardless of location.

- Scalability and Flexibility: Equinix’s data centers offer a range of deployment options, from bare metal to cloud-based solutions, allowing businesses to scale their generative AI infrastructure as needed. This flexibility ensures that businesses can adapt to changing demands and workloads.

- Security and Reliability: Equinix data centers are designed with security and reliability in mind, providing businesses with a safe and secure environment for their generative AI applications. This is crucial for protecting sensitive data and ensuring business continuity.
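Scaling an inference service usually comes down to a capacity calculation: how many model replicas are needed for peak load, with headroom for spikes and a floor for redundancy. The sketch below captures that rule. The request rates, per-replica throughput, 30% headroom, and two-replica minimum are all hypothetical planning inputs, not benchmarks of Nvidia hardware or an Equinix service. `replicas_needed` is an illustrative helper.

```python
import math


def replicas_needed(peak_requests_per_s: float,
                    requests_per_s_per_replica: float,
                    headroom: float = 0.3,
                    min_replicas: int = 2) -> int:
    """Replicas to serve peak load plus headroom, never dropping below a redundancy floor."""
    target = peak_requests_per_s * (1.0 + headroom)  # plan for spikes above the observed peak
    return max(min_replicas, math.ceil(target / requests_per_s_per_replica))


print(replicas_needed(120, 15))  # 120 * 1.3 = 156 req/s at 15 req/s each -> 11
print(replicas_needed(5, 15))    # low traffic still keeps 2 replicas for redundancy
```

The redundancy floor reflects the reliability point above: even an idle service should survive the loss of one replica or one server.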

Use Cases and Applications

The Nvidia-Equinix generative AI stack is aimed at real-world applications across diverse industries, where generative AI is already changing business processes.

Examples of Generative AI Applications

Generative AI workloads of the kind the Nvidia-Equinix stack is designed to host are already appearing in many industries, changing how businesses operate and interact with their customers.

- Healthcare: Generative AI can create synthetic medical images for training AI models, improving diagnostic accuracy. It can also personalize treatment plans based on patient data, enhancing care delivery.

- Finance: Generative AI is used in fraud detection by identifying patterns in financial transactions. It can also create personalized financial advice and generate reports based on complex financial data.

- Marketing: Generative AI can create personalized marketing campaigns, write engaging content, and generate high-quality images and videos for social media, leading to increased brand engagement.

- Manufacturing: Generative AI can optimize manufacturing processes by predicting equipment failures and designing new products based on customer preferences, leading to increased efficiency and reduced costs.

Industries and Their Specific Use Cases

The Nvidia-Equinix stack can support generative AI use cases across many industries, including the following:

| Industry | Use Cases |
|---|---|
| Healthcare | Drug discovery, personalized medicine, medical imaging analysis |
| Finance | Fraud detection, risk assessment, personalized financial advice |
| Retail | Personalized product recommendations, chatbot development, visual search |
| Education | Personalized learning experiences, automated content creation, AI-powered tutors |
| Manufacturing | Product design optimization, predictive maintenance, supply chain management |

Enhancing Business Processes, Creating New Products, and Driving Innovation

Generative AI is not just about automating tasks; it’s about transforming business processes, creating new products, and driving innovation.

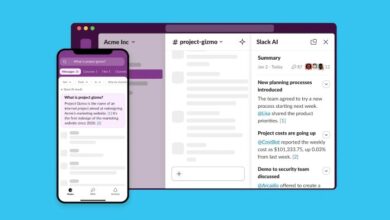

- Enhanced Business Processes: Generative AI can automate repetitive tasks, allowing employees to focus on more strategic initiatives. It can also analyze large datasets to identify trends and insights, improving decision-making.

- New Product Creation: Generative AI can create new products and services based on customer preferences and market trends. It can also design prototypes and test them virtually, reducing time and cost associated with traditional product development.

- Driving Innovation: Generative AI can help businesses explore new ideas and solutions by generating creative content, designing new products, and simulating different scenarios. It can also identify emerging trends and opportunities, fostering innovation and growth.

Challenges and Considerations

Deploying and scaling generative AI models in data centers present significant challenges, and ethical considerations are paramount in leveraging this technology. Understanding these complexities and implementing mitigation strategies is crucial for responsible and successful AI implementation.

Deployment and Scaling Challenges

Deploying and scaling generative AI models in data centers require careful planning and consideration of various factors.

- Computational Resources: Generative AI models are computationally intensive, requiring substantial processing power, memory, and storage. The need for specialized hardware, such as GPUs, and the cost of maintaining these resources can be a major obstacle for organizations.

- Data Requirements: These models are data-hungry and require large datasets for training and fine-tuning. Acquiring, cleaning, and managing such datasets can be time-consuming and expensive.

- Model Complexity: The intricate architectures of generative AI models can be challenging to design, train, and optimize. This requires specialized expertise and the availability of skilled personnel.

- Infrastructure Optimization: Integrating generative AI models into existing data center infrastructure requires careful planning and optimization to ensure seamless operation and performance.

Ethical Considerations and Risks

The use of generative AI raises ethical concerns and potential risks that need to be carefully addressed.

- Bias and Discrimination: Generative AI models can perpetuate biases present in the training data, leading to discriminatory outputs. It is crucial to ensure that the training data is diverse and representative to mitigate this risk.

- Misinformation and Deepfakes: Generative AI can be used to create realistic synthetic content, including images, videos, and audio, that can be used for malicious purposes, such as spreading misinformation or creating deepfakes.

- Privacy Concerns: Generative AI models can memorize and reproduce personal data from their training sets, or be used to generate realistic personal information, raising concerns about privacy and data protection. It is essential to implement robust privacy-preserving techniques to safeguard user data.

- Job Displacement: The automation capabilities of generative AI may lead to job displacement in certain sectors, requiring careful consideration of workforce retraining and economic impact.
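A first, simple check for the bias risk is to measure how well each group is represented in the training data. The sketch below reports groups whose share of a labeled dataset falls below a threshold. The attribute name, the 10% threshold, and the sample records are hypothetical. A low share is a signal to investigate, not proof of bias, and balanced counts alone do not guarantee fair model outputs.

```python
from collections import Counter


def underrepresented(records, attribute, min_share=0.10):
    """Return {group: share} for groups whose share of the records falls below min_share."""
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items() if n / total < min_share}


# Hypothetical dataset with a "region" attribute on each record.
data = [{"region": "north"}] * 60 + [{"region": "south"}] * 35 + [{"region": "east"}] * 5
print(underrepresented(data, "region"))  # → {'east': 0.05}
```

Flagged groups can then be addressed by collecting more data, reweighting, or evaluating model outputs separately for each group.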

Strategies for Addressing Challenges and Mitigating Risks

Addressing the challenges and mitigating the risks associated with generative AI requires a multi-pronged approach.

- Optimize Infrastructure: Investing in specialized hardware, such as GPUs, and optimizing data center infrastructure can significantly enhance performance and efficiency.

- Leverage Cloud Services: Cloud-based AI platforms can provide access to computing resources and data storage on demand, reducing the need for significant upfront investment.

- Data Quality and Diversity: Prioritizing data quality and diversity during training is crucial to mitigate bias and improve model accuracy.

- Transparency and Explainability: Developing transparent and explainable AI models can help understand their decision-making processes and address concerns about bias and fairness.

- Ethical Guidelines and Regulations: Establishing ethical guidelines and regulations for the development and deployment of generative AI is essential to ensure responsible use.

Future Trends and Innovations

The generative AI landscape is rapidly evolving, fueled by advancements in hardware, algorithms, and data availability. This dynamic environment promises a future where AI will play an increasingly significant role in various industries.

Advancements in Hardware and Infrastructure

The continuous development of more powerful and efficient hardware, particularly GPUs, is crucial for training and deploying large language models. NVIDIA’s advancements in GPU architecture, memory bandwidth, and parallel processing capabilities will continue to drive the performance and scalability of generative AI.

Equinix’s data center infrastructure will evolve to meet the growing demand for high-performance computing resources, providing the necessary connectivity, power, and cooling for these demanding workloads.

Emerging Technologies and Trends

- Multimodal Generative AI: Generative AI models will increasingly incorporate multiple modalities, such as text, images, audio, and video, enabling them to create more comprehensive and engaging content. For example, AI systems could generate realistic 3D models from text descriptions or create videos from a sequence of images.

- Explainable AI (XAI): Transparency and interpretability are becoming increasingly important in generative AI, as users demand understanding of how models arrive at their outputs. XAI techniques will enable developers to explain the reasoning behind model decisions, fostering trust and accountability.

- Federated Learning: This approach allows AI models to be trained on decentralized datasets, improving privacy and security while preserving data ownership. This is particularly relevant for generative AI, where data privacy is a major concern.
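The core of federated learning is an aggregation step. In Federated Averaging (FedAvg), each participant trains on its own data and sends only model parameters and a sample count. The server then averages the parameters, weighted by sample count, so raw data never leaves the participants. The sketch below implements just that server-side step, with parameters as flat lists of floats for illustration. Local training, secure transport, and any added privacy protections such as secure aggregation are out of scope.

```python
def fed_avg(client_updates):
    """Weighted average of client parameters; client_updates is a list of (params, num_samples)."""
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no training samples reported")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for params, n in client_updates:
        if len(params) != dim:
            raise ValueError("all clients must share the same parameter shape")
        weight = n / total  # clients with more data contribute proportionally more
        for i, p in enumerate(params):
            averaged[i] += weight * p
    return averaged


# Two hypothetical sites (e.g. hospitals) holding different amounts of local data.
print(fed_avg([([1.0, 2.0], 100), ([3.0, 4.0], 300)]))  # → [2.5, 3.5]
```

Weighting by sample count makes the result match what averaging per-example contributions would give. Sites with little data therefore cannot pull the global model disproportionately.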

Evolution of the NVIDIA-Equinix Partnership

The partnership between NVIDIA and Equinix will continue to evolve, focusing on addressing the unique challenges and opportunities presented by generative AI. This includes:

- Optimized Infrastructure: The partnership will focus on developing and deploying optimized infrastructure solutions, such as dedicated AI-optimized data centers, that cater to the specific needs of generative AI workloads.

- Enhanced Connectivity: The integration of NVIDIA’s technologies with Equinix’s global data center network will ensure high-bandwidth, low-latency connectivity for AI applications, facilitating real-time data processing and model training.

- Ecosystem Development: NVIDIA and Equinix will work together to foster a thriving ecosystem of AI developers, researchers, and businesses, providing access to resources, tools, and expertise to accelerate the adoption of generative AI.