NVIDIA and Equinix Partner to Unleash Generative AI at Scale: The Future of Intelligent Infrastructure

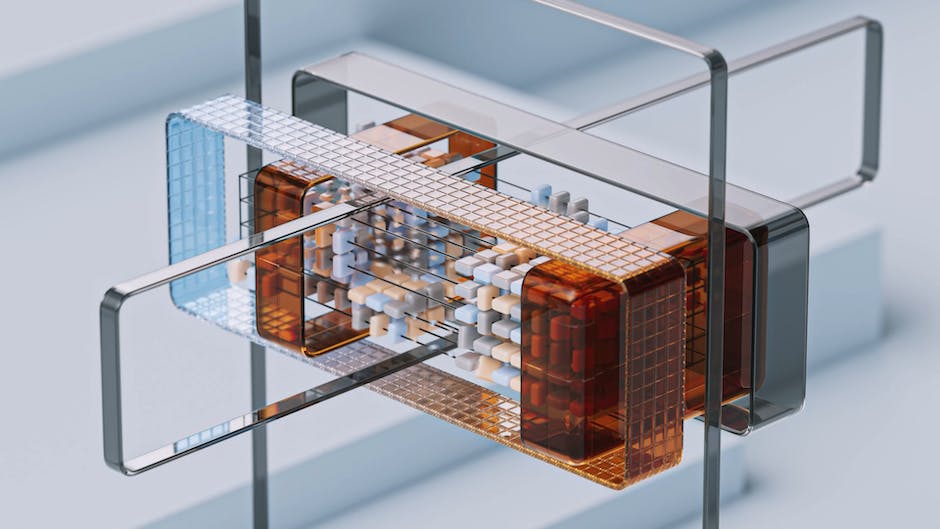

The convergence of NVIDIA’s AI hardware and Equinix’s global, interconnected data center footprint is reshaping how Generative AI is deployed. The alliance focuses on building specialized, high-performance infrastructure for the heavy computational demands of training large AI models and running inference on them. At its core are optimized data center environments that house NVIDIA AI accelerators, such as NVIDIA H100 Tensor Core GPUs, together with the high-speed networking and dense power delivery these power-hungry workloads require. These "Generative AI stacks" are more than racks of hardware in a building; they represent a holistic approach to developing and deploying AI quickly, efficiently, and at scale. Equinix, with its extensive network of International Business Exchange™ (IBX®) data centers in major digital metros worldwide, provides the foundational infrastructure. NVIDIA, in turn, supplies the specialized compute, networking, and software needed to power complex AI workloads. The collaboration matters because Generative AI, whether it is producing text, images, or code or assisting scientific discovery, requires enormous processing power and low-latency access to data. Traditional IT infrastructure often struggles to meet these requirements, leading to bottlenecks and longer development cycles. The NVIDIA-Equinix partnership addresses these challenges with purpose-built infrastructure.

At the heart of this initiative is NVIDIA AI Enterprise, NVIDIA’s software suite for AI, which is being optimized and deployed within Equinix’s IBX data centers. This software layer provides a framework for developing, deploying, and scaling AI applications, with tools, libraries, and frameworks that streamline the AI lifecycle from data preparation and model training to deployment and monitoring. By pairing NVIDIA AI Enterprise with Equinix’s physical infrastructure, customers gain a fully optimized environment: less complexity in setting up and managing AI infrastructure, faster time-to-market for AI-powered applications, and the ability to scale AI workloads as needed without significant upfront capital investment. The synergy between hardware and software is critical. NVIDIA’s GPUs are designed for massively parallel processing, which makes them well suited to the matrix multiplications and tensor operations at the core of deep learning: the many independent multiply-add operations in a matrix product can run simultaneously across thousands of GPU cores. Equinix’s role is to provide the secure, resilient, and interconnected environment in which these GPUs can operate at peak performance. That includes sufficient power and cooling, low latency through direct peering and interconnection options, and a flexible footprint that can accommodate the evolving needs of AI workloads. The NVIDIA-Equinix Generative AI stack therefore bridges the gap between raw compute power and practical, deployable AI applications.
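Why matrix multiplication suits parallel hardware can be made concrete with a small sketch. The plain-Python code below (no GPU involved; the function names are illustrative and not part of any NVIDIA library) shows that every output cell of a matrix product is an independent dot product, which is what allows a GPU to spread the work across many cores, and counts the roughly 2·m·k·n floating-point operations such a product requires. The 4096-sized layer in the usage section is a hypothetical example.

```python
# Sketch: why matrix multiplication maps well onto massively parallel hardware.
# Multiplying an (m x k) matrix by a (k x n) matrix produces m*n outputs, and
# each output is an independent dot product of length k (k multiplies, k adds).


def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Naive matrix multiply; every (i, j) entry is computed independently."""
    m, k = len(a), len(a[0])
    if len(b) != k:
        raise ValueError("inner dimensions must match")
    n = len(b[0])
    # Each output cell depends only on row i of `a` and column j of `b`,
    # so all m*n cells could in principle be computed at the same time.
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)] for i in range(m)]


def matmul_flops(m: int, k: int, n: int) -> int:
    """Approximate floating-point operations: one multiply and one add per term."""
    return 2 * m * k * n


if __name__ == "__main__":
    print(matmul([[1.0, 2.0], [3.0, 4.0]], [[5.0, 6.0], [7.0, 8.0]]))
    # Hypothetical layer: 4096 tokens multiplied by a 4096 x 4096 weight matrix.
    print(f"{matmul_flops(4096, 4096, 4096):.3e} FLOPs")
```

Even this single hypothetical layer needs on the order of 10^11 operations, and a model repeats such products across many layers and training steps, which is why hardware that executes them in parallel dominates deep learning.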

The scale of Generative AI workloads demands a new approach to data center design and operation. Large language models (LLMs) such as GPT-3, which has 175 billion parameters, and image generation models such as DALL-E have billions to hundreds of billions of parameters and are trained on datasets that often run to terabytes. Training them requires not only immense computational power but also high-bandwidth, low-latency networking so that thousands of GPUs can communicate efficiently. Equinix’s global network of IBX data centers is well suited to this. The data centers sit near major population centers and business hubs, so AI models can be trained and deployed close to end users and data sources, minimizing latency. Equinix’s ecosystem of network providers, cloud on-ramps, and enterprise customers also allows seamless integration with existing IT environments and simplifies data exchange. The partnership uses Equinix’s metro footprints to host clusters of NVIDIA DGX systems and other AI-optimized servers, interconnected with NVIDIA’s high-speed networking technologies, such as InfiniBand and Ethernet, to provide high-bandwidth, low-latency communication between GPUs. This is vital for distributed training, in which a single AI model is trained across many servers simultaneously and those servers must repeatedly exchange gradients or activations. Without high-speed interconnects, distributed training would be severely hampered by communication bottlenecks, significantly increasing training times.
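The effect of interconnect speed on distributed training can be estimated with the standard bandwidth model for a ring all-reduce, a collective commonly used to average gradients across GPUs in data-parallel training: each of N GPUs sends and receives about 2(N-1)/N times the gradient size. The sketch below is illustrative only. The 7-billion-parameter model, the 64 GPUs, fp16 gradients, and the 25/100/400 Gbit/s link speeds are hypothetical inputs, and per-message latency, overlap with computation, and the algorithm choices a real library such as NCCL makes are all ignored.

```python
# Sketch: bandwidth-only estimate of a ring all-reduce for gradient averaging.
# Assumptions (illustrative, not vendor figures): gradients are fp16 (2 bytes
# per parameter), per-GPU link bandwidth is the only bottleneck, and latency
# and compute/communication overlap are ignored.

BYTES_PER_PARAM_FP16 = 2


def ring_allreduce_bytes_per_gpu(num_gpus: int, payload_bytes: float) -> float:
    """Bytes each GPU sends (and receives) in a ring all-reduce: 2*(N-1)/N * S."""
    if num_gpus < 1:
        raise ValueError("need at least one GPU")
    return 2 * (num_gpus - 1) / num_gpus * payload_bytes


def ring_allreduce_seconds(num_gpus: int, num_params: float, link_gbit_per_s: float) -> float:
    """Estimated time (bandwidth term only) for one gradient synchronization."""
    payload = num_params * BYTES_PER_PARAM_FP16
    link_bytes_per_s = link_gbit_per_s * 1e9 / 8  # Gbit/s -> bytes/s
    return ring_allreduce_bytes_per_gpu(num_gpus, payload) / link_bytes_per_s


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for gbit in (25, 100, 400):  # hypothetical per-GPU link speeds in Gbit/s
        t = ring_allreduce_seconds(64, params, gbit)
        print(f"{gbit:>4} Gbit/s link: ~{t:.2f} s per gradient sync")
```

Because the modeled time scales inversely with link bandwidth, moving from a 25 Gbit/s to a 400 Gbit/s link cuts it by a factor of 16, and that saving recurs at every training step.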

A key differentiator of the NVIDIA-Equinix Generative AI stack is its emphasis on accessibility and scalability. Rather than building and maintaining their own specialized AI infrastructure, which can be prohibitively expensive and complex, organizations can use this jointly offered solution. This broadens access to advanced AI capabilities, allowing startups, enterprises, and research institutions to use Generative AI without the burden of infrastructure management. Platform Equinix® lets customers deploy AI workloads in a colocation model, either by bringing their own NVIDIA hardware or by using pre-configured NVIDIA solutions offered through Equinix. This flexibility matters in a fast-moving AI landscape where hardware requirements can change quickly. The ability to scale compute resources up or down with demand is another significant advantage: pay-as-you-go or subscription-based pricing, supported by Equinix’s flexible colocation and interconnection services, lets businesses align AI spending with actual usage and avoid over-provisioning. The partnership is therefore not only about providing hardware and space; it is about offering a complete, managed solution that simplifies adopting and scaling Generative AI.

The operational efficiency and cost-effectiveness of this integrated approach are paramount. Building and managing the complex infrastructure required for large-scale Generative AI is a significant undertaking. Equinix’s expertise in data center operations, including power management, cooling, security, and network connectivity, ensures that the NVIDIA AI stacks are running optimally and reliably. This allows customers to focus on developing and deploying their AI models rather than worrying about the underlying infrastructure. Furthermore, by pooling resources and leveraging economies of scale within Equinix’s global data center network, organizations can achieve cost savings compared to building and operating their own dedicated AI facilities. The proximity of these AI stacks to major network exchange points also reduces data transit costs and improves application performance. The ability to securely connect to these AI resources from anywhere in the world via Equinix’s extensive interconnection fabric is a critical enabler for distributed teams and global operations. The optimization extends to the software side as well, with NVIDIA AI Enterprise providing tools for efficient model deployment and management, further reducing operational overhead.
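Physics sets a floor on how much proximity can help. The sketch below computes the minimum round-trip time over optical fiber, assuming light travels at roughly c/1.47 in glass (a typical approximation for the refractive index) and ignoring routing detours, switching, queuing, and serialization, so real-world round trips will be longer. The distances in the usage loop are hypothetical examples.

```python
# Sketch: lower bound on network round-trip time from fiber propagation alone.
# Assumption: light in optical fiber travels at about c / 1.47; routing detours,
# switching, queuing, and serialization delays are ignored, so real RTTs are higher.

SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47  # approximate, varies by fiber type


def min_rtt_ms(distance_km: float) -> float:
    """Minimum round-trip time in milliseconds over a straight fiber path."""
    fiber_speed_km_per_s = SPEED_OF_LIGHT_KM_PER_S / FIBER_REFRACTIVE_INDEX
    one_way_s = distance_km / fiber_speed_km_per_s
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    for km in (10, 100, 1_000, 5_000):  # hypothetical user-to-data-center distances
        print(f"{km:>5} km: >= {min_rtt_ms(km):.2f} ms round trip")
```

At roughly 1 ms of round trip per 100 km, serving AI applications from a data center in the same metro as their users keeps this floor small, while a cross-continent path adds tens of milliseconds before any processing begins.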

The security and compliance aspects of Generative AI are also addressed by this partnership. Equinix’s IBX data centers adhere to stringent security standards and compliance certifications, providing a secure environment for sensitive AI data and models. This is particularly important for industries such as finance, healthcare, and government, where data privacy and regulatory compliance are critical. The ability to deploy AI workloads in a secure, trusted environment within Equinix’s data centers offers peace of mind to organizations handling proprietary data or developing AI applications that require strict regulatory adherence. The controlled access and physical security measures at Equinix facilities, combined with NVIDIA’s security features within its AI Enterprise software, create a robust security posture for Generative AI deployments. This is essential as AI models become more integrated into critical business processes and handle increasingly sensitive information.

The future of Generative AI is closely tied to the availability of scalable, high-performance, interconnected infrastructure, and the NVIDIA-Equinix partnership reflects that. By combining NVIDIA’s leadership in AI hardware and software with Equinix’s global data center footprint and interconnection capabilities, the two companies are building foundational elements for the next wave of AI innovation. The collaboration helps enterprises accelerate their AI initiatives, pursue new business opportunities, and drive change across industries. Purpose-built AI data centers, optimized for the unique demands of Generative AI, are no longer a distant prospect but a present reality. As Generative AI models continue to grow in size and sophistication, the need for robust, scalable infrastructure will only increase. The NVIDIA-Equinix Generative AI stack offers organizations a clear path to the computational power, network connectivity, and operational support they need to put AI to work, and the partnership’s continued evolution is likely to keep shaping AI infrastructure and expanding what Generative AI can achieve.