The Evolution of Retrieval-Augmented Generation Strategies for Navigating the Era of Long-Context Large Language Models

The landscape of artificial intelligence and machine learning is currently undergoing a fundamental transformation as large language models (LLMs) transition from restricted context windows to expansive, million-token capacities. For the better part of the last three years, the industry standard for Retrieval-Augmented Generation (RAG) was dictated by technical limitations; developers were forced to fragment documents into minute "chunks," convert them into vector embeddings, and retrieve only the most relevant snippets to fit within narrow windows of 4,000 to 32,000 tokens. However, with the emergence of next-generation models such as Google’s Gemini 1.5 Pro and Anthropic’s Claude 3 series, the paradigm has shifted toward long-context windows capable of processing entire libraries of information in a single prompt. While these advancements promise to revolutionize how enterprises interact with their data, they have simultaneously introduced new complexities regarding computational costs, latency, and a phenomenon known as "attention degradation."

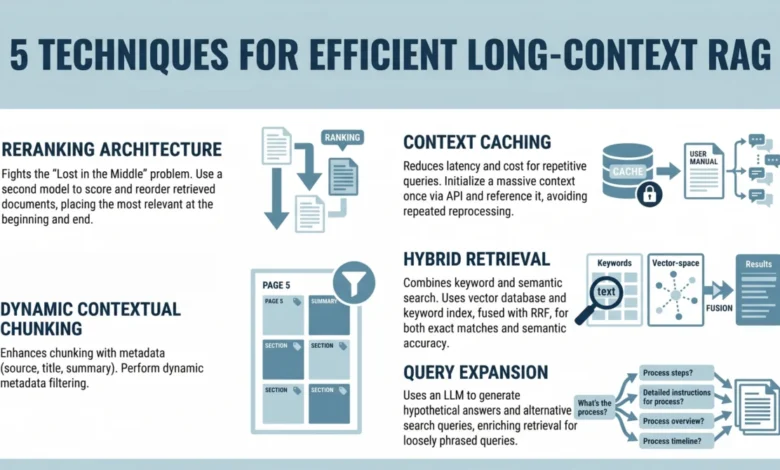

To maintain the accuracy and efficiency of these systems, a new set of architectural strategies has emerged. These techniques move beyond simple data partitioning, focusing instead on optimizing how models prioritize information and how developers manage the financial overhead of high-token processing. The shift from "short-context RAG" to "long-context RAG" requires a sophisticated understanding of reranking, context caching, metadata filtering, hybrid retrieval, and query expansion.

The Chronology of Context Evolution and the "Lost in the Middle" Crisis

The history of LLM development can be categorized by the rapid expansion of the context window. In 2022, OpenAI’s GPT-3.5 offered a 4,000-token window, necessitating aggressive RAG pipelines to provide models with external knowledge. By mid-2023, context windows expanded to 100,000 tokens with the release of Claude 2. By early 2024, Google DeepMind shattered previous records by introducing a 1-million-token window, later expanding to 2 million for specific developer previews.

Despite this expansion, researchers from Stanford University and UC Berkeley identified a critical flaw in LLM performance during a landmark 2023 study. The study showed that a model’s ability to use a specific piece of information depends on where that information sits in the prompt, and that accuracy across positions follows a "U-shaped" curve. Performance was highest when relevant data was placed at the very beginning or the very end of the prompt. However, when the "needle" of information was placed in the "middle" of the "haystack," performance dropped sharply. This "Lost in the Middle" phenomenon showed that simply increasing context size does not guarantee that the model uses the added context well; without strategic intervention, long-context models often fail to synthesize information buried in the center of a large prompt.

1. Strategic Reranking Architectures

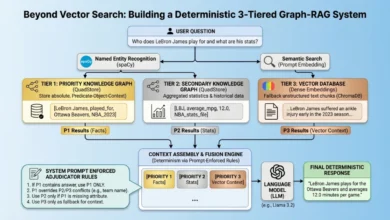

To combat attention loss, the first essential technique in modern RAG is the implementation of a reranking step. In a traditional vector search, a system retrieves the top "K" documents based on mathematical similarity (distance in vector space). While efficient, vector similarity does not always correlate with semantic relevance or the specific nuances of a user’s query.
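The first-stage retrieval described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production retriever: the three-dimensional vectors are made up, and the function names `cosine_similarity` and `top_k` are assumptions introduced here. A real system would use an embedding model and a vector index.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, doc_vecs, k=3):
    """Return the ids of the k documents most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), doc_id)
              for doc_id, vec in doc_vecs.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:k]]

# Toy embeddings, invented for illustration only.
docs = {
    "cats": [0.9, 0.1, 0.0],
    "dogs": [0.7, 0.3, 0.1],
    "taxes": [0.0, 0.2, 0.9],
}
print(top_k([1.0, 0.0, 0.0], docs, k=2))
```

The ranking reflects only geometric closeness in the embedding space, which is why a second relevance check is often layered on top.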

A reranking architecture introduces a second, more powerful model, often a Cross-Encoder, that evaluates the initial list of retrieved documents. Because this model reads the query and each document together rather than comparing precomputed embeddings, it can perform a deeper analysis of the relationship between them. Once the documents are reranked by relevance, the developer can strategically place the highest-scoring documents at the beginning and end of the LLM prompt. By ensuring that the most critical data occupies the "high-attention zones" of the model’s context window, developers can mitigate the "Lost in the Middle" limitation. This two-stage process (retrieval followed by reranking) balances the speed of vector search with the precision of deep semantic analysis.
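The rerank-then-place step might look like the sketch below. It rests on loud assumptions: `overlap_score` is a crude word-overlap stand-in for a real Cross-Encoder, and `rerank` and `place_at_edges` are hypothetical helper names, not part of any library. The point is the ordering logic, which alternates documents between the front and the back so the weakest ones land in the middle.

```python
def overlap_score(query, doc):
    """Stand-in scorer (NOT a Cross-Encoder): share of query words in the doc."""
    q = set(query.lower().split())
    d = set(doc.lower().split())
    return len(q & d) / len(q) if q else 0.0

def rerank(query, docs, score_fn):
    """Sort documents best-first according to score_fn(query, doc)."""
    return sorted(docs, key=lambda doc: score_fn(query, doc), reverse=True)

def place_at_edges(ranked_docs):
    """Reorder best-first documents so the strongest sit at both ends.

    Ranks 0, 2, 4, ... fill the front in order; ranks 1, 3, 5, ... fill
    the back in reverse, pushing the weakest documents to the middle.
    """
    front = ranked_docs[0::2]
    back = ranked_docs[1::2]
    return front + back[::-1]

candidates = [
    "billing and invoices",
    "password policy overview",
    "how to reset your password",
]
ranked = rerank("reset password", candidates, overlap_score)
prompt_order = place_at_edges(ranked)
print(prompt_order)
```

With five ranked documents `d1` to `d5`, `place_at_edges` yields `d1, d3, d5, d4, d2`: the best document opens the prompt, the second best closes it, and the lowest-scoring one sits in the center.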

2. The Economic Necessity of Context Caching

As context windows expand to 1 million tokens and beyond, the financial implications for enterprises become significant. Processing a million tokens for every single user query is not only slow—often resulting in latencies of 30 to 60 seconds—but also prohibitively expensive. For a high-traffic application, the cost of repeatedly "reading" the same massive knowledge base can quickly escalate into thousands of dollars per day.

Context caching has emerged as a vital solution for reducing both latency and cost. This technique allows developers to "prefix" or initialize a large block of information—such as a company’s entire legal archive or a technical documentation library—and store its processed state on the provider’s servers. When a user asks a question, the model does not re-process the entire archive; it simply refers to the cached state and processes only the new query.

Industry data suggests that context caching can reduce costs by up to 90% for repetitive queries and decrease time-to-first-token (TTFT) by over 80%. Major providers like Anthropic and Google already offer discounted pricing for cached tokens, signaling that this will be a standard feature for enterprise-grade AI deployments in the coming years.
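To make the economics concrete, here is a back-of-the-envelope estimate. Every number in it is hypothetical: the price of $1.00 per million input tokens, the 1,000 queries per day, and the flat 90% discount on cached tokens are illustrative assumptions, not any provider's actual rates. The sketch also ignores cache-write and cache-storage fees, which real pricing schemes may add.

```python
def daily_cost(queries_per_day, context_tokens, query_tokens,
               price_per_million, cached_discount=0.0):
    """Estimate daily input-token spend in dollars.

    cached_discount is the fraction taken off the price of the shared
    context tokens (0.0 = no caching, 0.9 = 90% cheaper).
    """
    context_price = price_per_million * (1.0 - cached_discount)
    per_query = (context_tokens * context_price
                 + query_tokens * price_per_million) / 1_000_000
    return queries_per_day * per_query

# Hypothetical workload: 1M-token knowledge base, 200-token questions.
uncached = daily_cost(1_000, 1_000_000, 200, 1.00)
cached = daily_cost(1_000, 1_000_000, 200, 1.00, cached_discount=0.9)
print(f"uncached: ${uncached:,.2f}/day, cached: ${cached:,.2f}/day")
```

Under these assumptions the daily bill falls from roughly $1,000 to roughly $100, because the shared context dominates the token count and the per-query question is negligible by comparison.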

3. Precision Through Dynamic Contextual Chunking and Metadata

While long-context models can "see" more data, they are still susceptible to "noise." If a prompt is filled with 500,000 tokens of irrelevant information, the model’s reasoning capabilities can be diluted. To address this, developers are moving toward dynamic contextual chunking.

Unlike traditional chunking, which splits text at arbitrary character counts (e.g., every 500 characters), contextual chunking uses structural markers—such as headers, chapters, or semantic shifts—to ensure that each piece of data retains its meaning. Furthermore, by enriching these chunks with metadata filters (such as timestamps, author tags, or document categories), developers can use structured SQL-like queries to pre-filter the data before it even reaches the LLM. For example, a financial analyst might filter a 10-year archive to only include "Q4 earnings reports from 2021-2023," drastically reducing the amount of irrelevant data the model must navigate.
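A minimal sketch of both ideas, under stated assumptions: headers are taken to be markdown-style lines beginning with `#`, the metadata fields (`doc_type`, `quarter`, `year`) are invented to mirror the earnings-report example, and `chunk_by_headers` and `filter_chunks` are hypothetical helper names. A real pipeline would likely push the filter down into the database or vector store.

```python
import re

def chunk_by_headers(text, metadata):
    """Start a new chunk at every header line ('# Title') and tag it."""
    chunks, title, lines = [], "Preamble", []

    def flush():
        body = "\n".join(lines).strip()
        if body:  # skip empty sections
            chunks.append({"title": title, "text": body, **metadata})

    for line in text.splitlines():
        match = re.match(r"^#+\s+(.*)", line)
        if match:
            flush()
            title, lines = match.group(1).strip(), []
        else:
            lines.append(line)
    flush()
    return chunks

def filter_chunks(chunks, doc_type, quarter, first_year, last_year):
    """Keep only chunks whose metadata matches the requested slice."""
    return [c for c in chunks
            if c["doc_type"] == doc_type
            and c["quarter"] == quarter
            and first_year <= c["year"] <= last_year]

report_2022 = "# Revenue\nRevenue grew year over year.\n# Outlook\nGuidance was raised."
memo_2019 = "# Summary\nOffice relocation plan."

archive = (
    chunk_by_headers(report_2022, {"doc_type": "earnings", "quarter": "Q4", "year": 2022})
    + chunk_by_headers(memo_2019, {"doc_type": "memo", "quarter": "Q2", "year": 2019})
)
selected = filter_chunks(archive, "earnings", "Q4", 2021, 2023)
print([c["title"] for c in selected])
```

Filtering on metadata before retrieval means the memo never competes for space in the prompt, regardless of how similar its embedding might be to the query.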

4. Hybrid Retrieval: Merging Semantic and Lexical Search

One of the most persistent challenges in RAG is the "exact match" problem. Vector search is excellent at understanding that "feline" is related to "cat," but it can struggle with highly specific technical terms, product IDs, or rare medical codes. In these instances, traditional keyword-based search (BM25) remains superior.

Modern RAG systems now employ hybrid retrieval, which combines the strengths of both worlds. By running a semantic vector search and a keyword-based search in parallel, and then merging the results using algorithms like Reciprocal Rank Fusion (RRF), systems can achieve a higher degree of accuracy. This ensures that the system is both "smart" enough to understand intent and "precise" enough to find a specific serial number buried in a 5,000-page manual.
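Reciprocal Rank Fusion is simple enough to sketch directly. Each document earns `1 / (k + rank)` from every ranked list it appears in, and the totals decide the merged order. The constant `k = 60` is the value commonly used with RRF; the document ids and the two input rankings below are invented for illustration, and ties are broken alphabetically as an arbitrary choice to keep the output deterministic.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Merge several best-first rankings into one list of document ids."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

vector_hits = ["a", "b", "c"]   # hypothetical semantic search results
keyword_hits = ["c", "a", "d"]  # hypothetical BM25 results
print(reciprocal_rank_fusion([vector_hits, keyword_hits]))
```

Because RRF works on ranks rather than raw scores, it avoids having to normalize cosine similarities against BM25 scores, which live on different scales.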

5. Query Expansion and the Summarize-Then-Retrieve Framework

The final frontier in optimizing long-context systems is addressing the "vocabulary gap" between users and documents. Users often phrase questions poorly or use informal language that does not match the formal tone of professional documentation.

Query expansion techniques use a lightweight, inexpensive LLM to generate multiple versions of a user’s query or even a "hypothetical" answer whose embedding is then used for the search (a technique known as HyDE, short for Hypothetical Document Embeddings). For instance, if a user asks, "What do I do if the fire alarm goes off?", the system might generate expanded queries like "Emergency evacuation procedures" or "Fire safety protocols." By searching for these expanded terms, the system is much more likely to find the relevant section in a dense corporate handbook. This "Summarize-Then-Retrieve" approach acts as a bridge, translating human intent into the specific terminology used in the underlying data.
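The multi-query variant of this idea can be sketched as follows. Note the assumptions: `fake_expand` is a hard-coded stand-in for the lightweight LLM call, `keyword_search` is a toy all-words matcher over an invented three-section handbook, and `expand_and_retrieve` is a hypothetical helper name. This shows query rewriting only, not HyDE, which would embed a generated answer instead.

```python
def expand_and_retrieve(query, expand_fn, search_fn, top_n=3):
    """Search with the original query plus its expansions, then merge.

    Results are de-duplicated while preserving first-seen order.
    """
    queries = [query] + list(expand_fn(query))
    seen, merged = set(), []
    for q in queries:
        for doc_id in search_fn(q):
            if doc_id not in seen:
                seen.add(doc_id)
                merged.append(doc_id)
    return merged[:top_n]

# Invented handbook sections for illustration.
handbook = {
    "sec-4.2": "Emergency evacuation procedures for all floors",
    "sec-7.1": "Fire safety protocols and extinguisher locations",
    "sec-9.3": "Expense reimbursement policy",
}

def keyword_search(q):
    """Toy search: a section matches only if it contains every query word."""
    words = q.lower().split()
    return [doc_id for doc_id, text in handbook.items()
            if all(w in text.lower().split() for w in words)]

def fake_expand(query):
    """Stand-in for an LLM call; returns fixed rewrites of the query."""
    return ["Emergency evacuation procedures", "Fire safety protocols"]

question = "What do I do if the fire alarm goes off?"
print(keyword_search(question))
print(expand_and_retrieve(question, fake_expand, keyword_search))
```

In this toy setup the original question matches nothing on its own, while the expanded queries surface both relevant sections, which is the vocabulary gap in miniature.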

Implications and Future Outlook

The transition to long-context RAG represents more than just a technical upgrade; it is a shift in how organizations think about their "institutional memory." We are moving away from a world where AI systems have "short-term memory loss" and toward a world where an AI can maintain a persistent, deep understanding of an entire organization’s history.

However, the "brute force" approach of simply stuffing 1 million tokens into a prompt is rarely the optimal solution. The most successful implementations in 2024 and beyond will be those that use RAG as a sophisticated filtering layer. By combining the precision of reranking and hybrid search with the efficiency of context caching, developers can create AI agents that are not only knowledgeable but also economically viable and responsive.

As LLM providers continue to push the boundaries of context length, the focus of the developer community will likely shift from "how much data can we fit?" to "how can we ensure the model focuses on exactly what matters?" The integration of these five techniques—reranking, caching, metadata filtering, hybrid search, and query expansion—marks the beginning of a more mature, refined era of artificial intelligence where context is no longer a limitation, but a carefully managed resource.