
The Great Code Purge: Why Leaders Are Banning AI-Generated Code

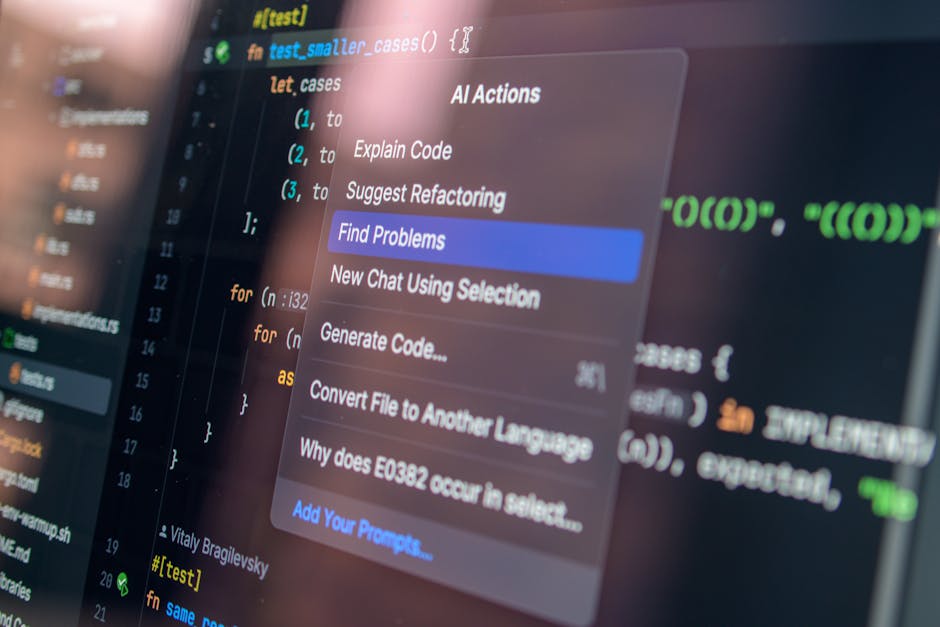

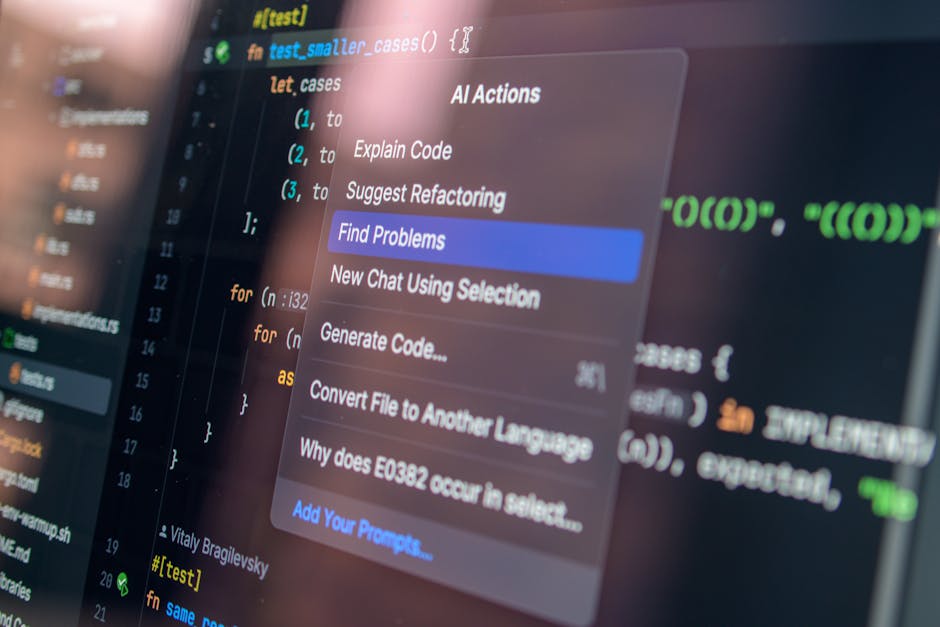

Artificial intelligence has changed many industries, and software development is no exception. AI-powered coding assistants can now generate snippets, functions, and even entire programs quickly, and they are becoming increasingly sophisticated. However, a counter-movement is emerging: leaders across various organizations are implementing explicit bans on the use of AI-generated code. This decision is not a Luddite rejection of progress, but a calculated response to a set of risks that these leaders judge to outweigh the immediate perceived benefits. Understanding the motivations behind these bans requires a look at security vulnerabilities, intellectual property concerns, the erosion of critical thinking, the challenge of maintaining code quality and maintainability, and the ethical implications of relying on opaque AI systems for core development tasks.

One of the most significant drivers behind these bans is the security risk associated with AI-generated code. AI models are trained on vast datasets of existing code, and this training data is not always sanitized for vulnerabilities. As a result, AI can, and sometimes does, generate code that contains security flaws or other exploitable weaknesses that are not immediately apparent. Developers, often under pressure to deliver quickly, might integrate AI-generated code without performing the rigorous security reviews that would be standard for human-written code. Because the output usually looks polished and plausible, it can create a false sense of security, leaving systems susceptible to breaches and data loss. The consequences of such vulnerabilities can be severe: financial losses, reputational damage, and legal liabilities. Furthermore, the "black box" nature of many AI models makes it difficult to trace the origin of a vulnerability. If a security issue is discovered, identifying the specific AI-generated component responsible and rectifying it can be a complex and time-consuming process, especially if the original model’s output is no longer readily available or reproducible. This lack of transparency and traceability makes proactive prevention and rapid incident response significantly harder. Consequently, many organizations are opting for the more predictable and controllable environment of human-written and reviewed code to mitigate these risks.
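To make the security concern concrete, here is a minimal sketch of one well-known class of flaw that is easy to miss in review: SQL built by string interpolation. The table, function names, and payload are hypothetical and chosen only for illustration. The example does not claim that any particular assistant produces this code, only that plausible-looking code like the first function needs the same scrutiny as anything else.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with a small, hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn


def find_user_unsafe(conn, username):
    # Vulnerable: the input is interpolated directly into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Parameterized query: the driver passes the input as data, not as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    payload = "nobody' OR '1'='1"  # hypothetical malicious input
    print(len(find_user_unsafe(conn, payload)))  # every row is returned
    print(len(find_user_safe(conn, payload)))    # no rows match
```

Both functions look reasonable at a glance and behave identically for ordinary input, which is why this kind of flaw tends to survive a hurried review.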

Intellectual property (IP) rights represent another hurdle for the widespread adoption of AI-generated code. The legal frameworks surrounding AI-generated content are still in their early stages, and questions of ownership, licensing, and copyright are far from settled. When an AI model generates code, who owns that code? Is it the company that developed the AI, the user who prompted it, or is the code in the public domain? This ambiguity creates significant legal and commercial risks. Companies investing heavily in proprietary software may find themselves inadvertently incorporating code that infringes on existing copyrights or that they cannot legally claim as their own. The implications for software licensing, patent applications, and competitive advantage are profound. For instance, if a competitor uses an AI model trained on a company’s proprietary code to generate new features, the result could be a complex web of IP disputes. The use of AI models trained on open-source code introduces its own licensing complexities. Without clear attribution and adherence to the original licenses, organizations risk violating open-source terms, which could lead to legal action and, in the worst case, an obligation to release their own code under the same license. To avoid these legal battles and to maintain clear ownership and control over their software assets, many leaders are drawing a hard line against the integration of AI-generated code.

Beyond security and IP, a more fundamental concern is the potential erosion of critical thinking and problem-solving skills within development teams. The allure of AI is its ability to automate complex tasks. However, in software development, the process of writing code is intrinsically linked to understanding the underlying logic, debugging issues, and designing elegant solutions. Relying heavily on AI to generate code can lead to a generation of developers who are proficient at prompting an AI but lack the deep conceptual understanding and analytical abilities required for truly innovative and robust software engineering. This can manifest as an inability to effectively debug non-trivial issues, a lack of creativity in designing novel algorithms, and a diminished capacity for understanding the broader system architecture. When developers don’t grapple with the intricacies of code creation themselves, their ability to identify subtle bugs, optimize performance, or even effectively challenge and improve upon AI-generated suggestions diminishes. This dependency can stunt professional growth and ultimately limit the long-term innovation potential of a company. Leaders recognize that while AI can be a powerful tool, it should augment, not replace, the essential human cognitive processes that drive true software engineering excellence. The intellectual muscle developed through manual coding is a critical asset that is at risk of atrophy.

Maintaining code quality and ensuring long-term maintainability are also significant concerns that contribute to the bans. AI-generated code, while often syntactically correct, can suffer from a lack of contextual understanding, architectural coherence, and adherence to established coding standards and best practices. AI models may generate code that is overly verbose, inefficient, difficult to read, or that introduces technical debt. Unlike human developers, who can reason about the long-term implications of their code, AI typically focuses on generating a functional output based on its training data. This can lead to code that is a patchwork of different styles and approaches, making it hard for future developers to understand, refactor, or extend. The cost of maintaining such code can escalate significantly over time, reducing development velocity and increasing the risk of introducing new errors. Furthermore, AI might not adopt the specific architectural patterns or design principles that are crucial for a particular project’s scalability and stability. This lack of a holistic, architectural perspective can lead to systems that are brittle and difficult to evolve. Leaders prioritizing the longevity and cost-effectiveness of their software investments are thus wary of introducing code that is likely to increase maintenance burdens.

The ethical considerations surrounding the use of AI-generated code are also coming under increased scrutiny. The opacity of AI decision-making processes raises questions about accountability and bias. If an AI generates biased or discriminatory code, who is responsible? The developers who used it, the creators of the AI, or the AI itself? The potential for AI to perpetuate and even amplify existing societal biases embedded in its training data is a serious ethical concern, particularly in applications that impact user data, decision-making, or access to services. Furthermore, the increasing reliance on AI for core development tasks can lead to job displacement or a shift in the required skillsets for software engineers, raising broader societal and economic questions. Leaders are increasingly aware of their ethical obligations to their employees, customers, and society at large. The potential for AI-generated code to introduce unforeseen ethical dilemmas, coupled with the difficulty of assigning blame and ensuring fairness, is a compelling reason for many to enforce strict limitations on its use. The principle of human oversight and accountability is paramount when dealing with code that has real-world consequences.

The decision by many leaders to ban AI-generated code is a pragmatic and forward-thinking response to a multifaceted set of challenges. While the productivity gains offered by AI coding assistants are tempting, the long-term risks to security, intellectual property, developer skills, code quality, and ethical integrity are too substantial to ignore. This trend signifies a mature understanding that technology, particularly nascent AI, requires careful integration and rigorous human oversight. The focus is shifting from simply generating code quickly to ensuring that the code generated is secure, legally sound, maintainable, and ethically responsible. This “great code purge” is not an end to AI in development, but rather a call for a more discerning and responsible approach, prioritizing human expertise and control in the critical task of software creation. The emphasis on human-centric development practices, augmented by AI tools used judiciously and with stringent validation, is emerging as the more sustainable and trustworthy path forward. Organizations that embrace this philosophy are better positioned to build robust, secure, and ethically sound software systems in the long run. The future of coding lies not in blind automation, but in a harmonious and carefully managed partnership between human ingenuity and artificial intelligence.