Meta Introduces Enhanced Age Verification Amidst New Mexico Legal Showdown and Threat of Platform Withdrawal

Amidst the escalating second phase of a high-profile child safety trial in New Mexico, technology giant Meta has announced a series of new measures aimed at strengthening age-related protections for teenagers across its sprawling platforms. These initiatives, detailed in a company blog post, arrive at a critical juncture, as Meta faces significant legal and financial penalties and has even threatened to withdraw its services from New Mexico in response to the state’s stringent demands. The ongoing legal battle underscores the intensifying scrutiny social media companies face regarding their impact on young users and the efficacy of their safety protocols.

Meta’s Proactive Steps: Bolstering Age Protections

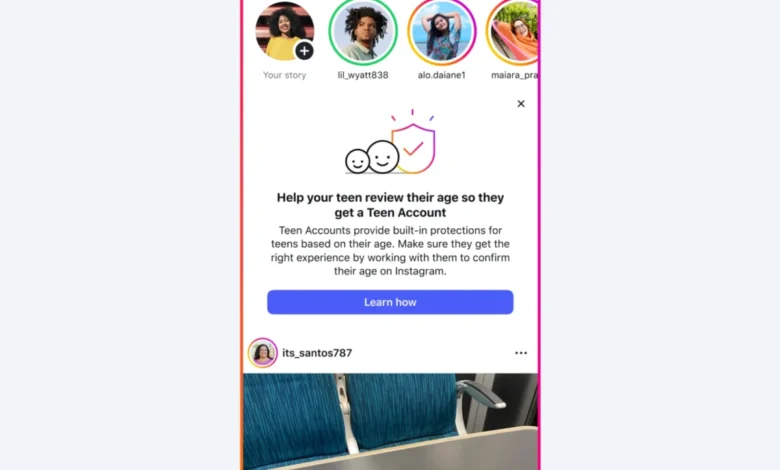

On Tuesday, Meta unveiled a multi-pronged approach designed to enhance age verification and parental oversight on its platforms, specifically Facebook and Instagram. A key component of this strategy is the rollout of a notification system for parents in the United States. All users identified by Meta as parents – extending beyond just adults supervising a Teen Account – will receive detailed information on how to verify and confirm their teens’ ages within the company’s applications. This notification will include a direct link to a blog post Meta published approximately a year prior, which offers guidance on how to engage with teenagers about the critical importance of providing accurate age information online. The company aims to leverage these notifications to elevate parental awareness and encourage active participation in ensuring their children’s declared ages align with reality on its platforms.

In parallel with these parental outreach efforts, Meta is significantly expanding the reach of its advanced age-detection technology. This AI-driven system, which initially saw a limited rollout, will now be deployed in 27 European Union countries, in Brazil, and, for the first time, for Facebook users in the United States. This expansion marks a substantial step in Meta’s commitment to utilizing artificial intelligence to enforce age-appropriate experiences globally. The technology, which in April of the previous year began identifying teen users who had listed an adult age on their accounts, is designed to reassign these users to Meta’s "Teen Account" product. The company asserts that these specialized accounts incorporate more stringent safety protections tailored for younger demographics.

Further refinements to this AI technology were also announced. Meta stated that its AI would begin to analyze user profiles for "contextual clues" about users’ ages. This approach moves beyond self-declared ages, attempting to infer a user’s true age from various data points and behavioral patterns on the platform. Additionally, Meta is simplifying the process for users to report suspected underage accounts, empowering the community to contribute to safety enforcement. The company is also strengthening its capabilities to prevent underage users from successfully opening new accounts, a perennial challenge for platforms striving to comply with age restrictions.

The Evolving Landscape of Age Verification and Its Challenges

The deployment and enhancement of age-detection technology by Meta reflect a broader industry trend and a response to persistent calls from regulators and child safety advocates. For years, the accuracy of age verification on social media platforms has been a contentious issue. Critics argue that self-declaration of age is easily circumvented by minors, leaving them exposed to content and interactions intended for adults. Meta, in its blog post, reiterated its long-standing position that lawmakers should mandate app stores to verify user ages and subsequently provide that verified information to apps and developers. This proposal shifts a significant portion of the age-verification burden from individual platforms to the foundational distribution channels, potentially creating a more robust and standardized system across the digital ecosystem.

However, the effectiveness of Meta’s existing "Teen Account" product and its AI age-detection technology has not been without controversy. In the fall, independent experts who conducted tests on Teen Accounts published a critical report, alleging that the product did not function as advertised. Among their concerning findings, researchers documented multiple instances where the intended safety guardrails failed to prevent inappropriate contact between minors and strangers. This report highlighted the complex challenges inherent in designing and implementing truly effective online safety mechanisms, especially when dealing with determined users and the sophisticated methods employed by those seeking to exploit vulnerabilities. The latest enhancements to Meta’s AI and reporting mechanisms appear to be a direct response to such criticisms, attempting to plug documented gaps and improve overall system integrity.

The New Mexico Legal Battle: A High-Stakes Confrontation

Meta’s latest safety announcements are inextricably linked to the intense legal pressures it faces, particularly the ongoing child safety trial in New Mexico. This case represents a significant challenge to the company’s operational practices and its public image. The first phase of the New Mexico trial concluded in March, with a jury finding Meta liable for misleading consumers about the safety of its platforms and, critically, for endangering children. The lawsuit, initiated by the state’s attorney general, resulted in a substantial financial penalty: Meta was ordered to pay the maximum penalty for each violation of New Mexico’s consumer protection laws, a total of $375 million. The company has publicly stated its intention to appeal this decision, signaling its firm belief in its legal standing and the robustness of its existing safety measures.

The current phase is a bench trial in which New Mexico’s Department of Justice is seeking injunctive relief. Beyond pursuing additional damages, the state is demanding specific, court-ordered changes to Meta’s platforms. It is seeking an additional $3.75 billion in damages, pushing the potential financial burden to an unprecedented level. More consequentially, the policies proposed by the New Mexico Department of Justice include several sweeping requirements:

- The implementation of effective age verification systems.

- The complete blocking of children under 13 from accessing the platforms.

- Limits on end-to-end messaging encryption for minors.

- Permanent bans for adult users who engage in or facilitate child exploitation.

These demands, if granted, would necessitate significant technological and operational overhauls for Meta, potentially setting a far-reaching precedent for how social media companies operate in the United States.

Meta’s "Nuclear Option": Threatening to Withdraw Services

In a dramatic development last week, Meta escalated its defense by threatening to shut down its platforms in New Mexico in response to the state’s demands. In a court filing, the company argued, "Many of the requests are technologically or practically infeasible and would essentially force Meta to build entirely separate apps for use only in New Mexico." The filing, reported by The Guardian, concluded with a stark warning: "Therefore, granting onerous relief could compel Meta to entirely withdraw Facebook, Instagram and WhatsApp from the state as the only feasible means of compliance."

This "nuclear option" was reiterated in court on Monday by Meta’s counsel, Alex Parkinson, who asserted that granting the state’s injunctive relief in full would "genuinely make it untenable to continue offering Meta’s products" in New Mexico. The company’s argument hinges on the impracticality of creating state-specific versions of its globally integrated platforms, citing the immense technical challenges and potential costs involved. Such a move would be unprecedented in the U.S. and would represent a significant disruption for millions of users in New Mexico who rely on these platforms for communication, commerce, and social connection.

New Mexico Attorney General Raul Torrez swiftly countered Meta’s threat, accusing the company of prioritizing advertising revenue and profit over the fundamental safety of children. In a pointed statement, Torrez declared, "We know Meta has the ability to make these changes. This is not about technological capability." His remarks suggest that the state views Meta’s resistance as a corporate choice rather than a genuine technical limitation, implying that the company has the resources and expertise to implement the requested changes if it chose to do so. This stark disagreement highlights the fundamental divide between the tech giant and state regulators.

Broader Context: Industry Scrutiny and Regulatory Pressure

The New Mexico trial is not an isolated incident but rather a microcosm of a much larger, global trend of increasing governmental and public scrutiny over social media’s impact on youth. Across the United States and internationally, lawmakers are grappling with how to regulate digital platforms to protect minors from online harms, including cyberbullying, exploitation, and mental health issues. Legislation such as the Kids Online Safety Act (KOSA) in the U.S., the Digital Services Act (DSA) in the European Union, and the Online Safety Act in the UK all aim to impose greater responsibilities on tech companies to safeguard young users.

Numerous studies and reports have highlighted the pervasive nature of online risks for minors. Organizations like Common Sense Media and the Pew Research Center have consistently documented the high rates of social media usage among teenagers and the corresponding exposure to potentially harmful content or interactions. For instance, data frequently shows that a significant percentage of teens encounter hate speech, harassment, or explicit material online. These findings fuel the legislative drive for stronger protections and stricter enforcement.

The legal challenges faced by Meta extend beyond New Mexico. The company is involved in multiple lawsuits across various states and jurisdictions, often facing accusations of designing addictive platforms, failing to protect children, and contributing to a youth mental health crisis. The outcome of the New Mexico case could set a powerful precedent, potentially emboldening other states or federal bodies to pursue similar legal avenues and demand more stringent platform modifications.

Implications and Future Outlook

The New Mexico child safety trial and Meta’s response carry profound implications for the future of online regulation and the operation of social media platforms. The $375 million verdict in Phase 1 already sends a strong message about corporate accountability. If the state succeeds in securing the $3.75 billion in additional damages and the sweeping injunctive relief sought in Phase 2, it would represent an unprecedented victory for child safety advocates and a significant legal and financial blow to Meta.

Meta’s threat to withdraw its services from New Mexico, while potentially a negotiation tactic, is a serious proposition. Should Meta follow through, it would create a digital void for millions of users and could prompt a heated debate about the balance between state regulatory power and corporate operational autonomy. Such a move could also open the door for competing platforms to gain market share in the state, or it could simply underscore the critical reliance modern society has on these digital infrastructures.

The ongoing tension between technological capability and corporate willingness, as articulated by AG Torrez, lies at the heart of this dispute. While Meta argues for the infeasibility of state-specific apps, critics point to the company’s vast resources and engineering prowess, suggesting that a solution is attainable if the priority is shifted from profit margins to child safety. The debate also highlights the inherent difficulties in enforcing age verification in an open internet environment, where determined individuals can always find ways around restrictions.

Ultimately, the New Mexico trial is a landmark case that will likely influence future legislative and judicial actions concerning child online safety. It forces a critical examination of how social media companies balance innovation and user engagement with their ethical and legal responsibilities to protect their most vulnerable users. The outcome will not only shape Meta’s operational strategies but also contribute significantly to the evolving framework of digital governance in an increasingly interconnected world.