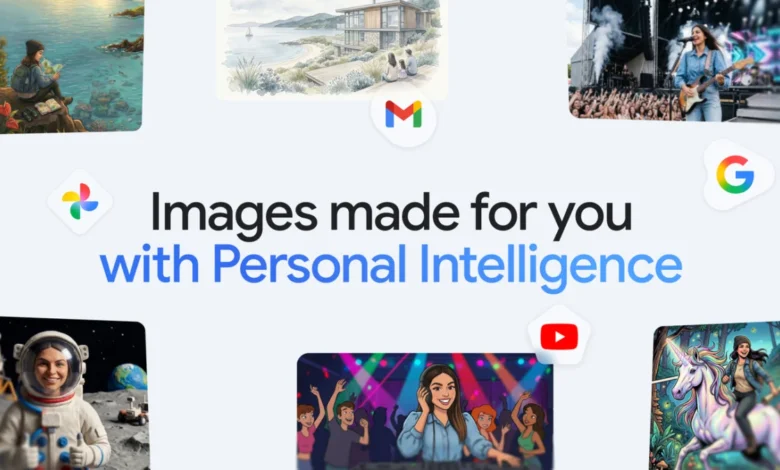

New ways to create personalized images in the Gemini app

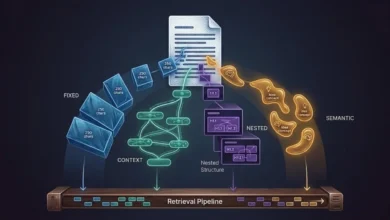

The core of this update is the ability for Gemini to understand personal context without the need for manual uploads or exhaustive descriptions. Traditionally, users seeking to generate an image of themselves or their families in specific scenarios had to provide detailed physical descriptions or upload reference photos to guide the AI. With the new integration, Gemini can now access labeled groups in Google Photos—such as "Me," "Mom," or "Spot"—to automatically populate AI-generated scenes with the correct likenesses. This streamlined workflow is designed to reduce the "prompt friction" that has often hindered mainstream adoption of generative AI tools.

The Evolution of Personal Intelligence and Nano Banana 2

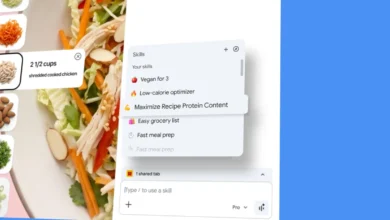

At the heart of these new capabilities is "Personal Intelligence," a framework Google is deploying to make AI interactions feel tailored to the individual. Unlike generic large language models (LLMs) that draw exclusively from broad datasets of public information, Personal Intelligence layers a user’s specific interests and digital history onto the generative process. This is facilitated by the Nano Banana 2 model, which appears to be a specialized multimodal generation engine optimized for speed and personal context integration.

By connecting to Google apps like Workspace and Photos, Gemini gains a "grounded" understanding of the user. For instance, if a user asks Gemini to "design my dream house," the AI no longer produces a random architectural render. Instead, it analyzes the user’s past interactions, saved preferences, and even visual cues from their photo history to generate a result that aligns with their specific aesthetic tastes and lifestyle requirements. This automation of context allows for much shorter prompts, such as "create a picture of my desert island essentials," where the AI fills in the blanks based on the user’s actual habits and favorite items.

Integrating the Google Photos Ecosystem

The integration with Google Photos is perhaps the most transformative aspect of this rollout. Google Photos, which currently hosts billions of images for over one billion users worldwide, already features sophisticated facial recognition and organization tools. Users who have already used the "People & Pets" feature to label individuals in their library will find that Gemini can carry those labels directly into generative prompts.

For example, a user can now request, "create a claymation image of me and my family enjoying our favorite activity." Gemini will identify the individuals labeled as "family" in the user’s Photos library, recognize the "favorite activity" from contextual data or previous prompts, and render a stylized image featuring recognizable likenesses. This capability extends to various artistic styles, including watercolors, charcoal sketches, and oil paintings, allowing users to transform their real-life memories into diverse artistic interpretations without the technical hurdle of manual photo editing or complex prompt engineering.
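The label-to-likeness step described above can be pictured with a small sketch. It is purely illustrative and does not reflect Google's implementation or any real Gemini or Google Photos API: the names `LabeledGroup`, `GenerationRequest`, `resolve_references`, and the `aliases` mapping (which stands in for whatever tells the system that "family" covers certain labeled people) are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LabeledGroup:
    """Hypothetical stand-in for a labeled person or pet in a photo library."""
    label: str            # e.g. "Me", "Mom", "Spot"
    photo_ids: list[str]  # photos in which this person or pet was labeled


@dataclass
class GenerationRequest:
    """Hypothetical request handed to an image model."""
    prompt: str
    style: str
    # label -> reference photo chosen for that label
    references: dict[str, str] = field(default_factory=dict)


def resolve_references(prompt, style, groups, aliases):
    """Attach one reference photo for each label the prompt mentions.

    `aliases` maps a label to extra words that should also match it,
    e.g. {"Mom": ["family"]}. This is an assumption made for the sketch.
    """
    request = GenerationRequest(prompt=prompt, style=style)
    # Lowercased prompt words with surrounding punctuation stripped.
    words = {w.strip(".,!?\"'").lower() for w in prompt.split()}
    for group in groups:
        names = {group.label.lower()}
        names |= {a.lower() for a in aliases.get(group.label, [])}
        if names & words and group.photo_ids:
            # Naive choice: take the first labeled photo as the reference.
            request.references[group.label] = group.photo_ids[0]
    return request


groups = [
    LabeledGroup("Me", ["p1", "p2"]),
    LabeledGroup("Mom", ["p3"]),
    LabeledGroup("Spot", ["p4"]),
]
request = resolve_references(
    "create a claymation image of me and my family",
    style="claymation",
    groups=groups,
    aliases={"Mom": ["family"]},
)
```

In this sketch, "Me" matches directly and "Mom" matches through the assumed "family" alias, while "Spot" is left out because nothing in the prompt refers to it. A real system would presumably choose reference photos far more carefully than taking the first one.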

User Control, Transparency, and the Refinement Process

Recognizing that AI-generated content often requires iterative adjustments, Google has introduced several tools to keep the creative control in the hands of the user. If the initial image generated by Gemini does not perfectly match the user’s vision, they can provide conversational feedback to correct specific details. Additionally, a new "+" icon allows users to manually select a different reference photo from their Google Photos library to provide a new perspective or a more accurate likeness for the AI to follow.

Transparency is also a focal point of this update. A new "Sources" button has been integrated into the image generation interface. When clicked, this tool reveals which specific images from the user’s Google Photos library were auto-selected to guide the creation. Users can even ask Gemini directly for information regarding the attribution and the specific data sources used for a particular render, ensuring a clearer understanding of how the AI is interpreting their personal data.

Chronology of Google’s Generative AI Milestones

The introduction of personalized image generation is the latest step in a rapid timeline of AI development at Google. To understand the significance of this update, it is essential to look at the trajectory of the Gemini project over the past two years:

- February 2023: Google introduces "Bard," its initial conversational AI, in response to the rise of ChatGPT.

- December 2023: Google announces the Gemini era, introducing Gemini Pro and Ultra models, signaling a shift toward true multimodality.

- February 2024: Google rebrands Bard to Gemini and launches a dedicated mobile app. Image generation is briefly introduced, but the generation of images of people is then paused following controversies regarding historical inaccuracies in generated depictions.

- May 2024: At Google I/O, the company emphasizes "AI Agents" and the integration of Gemini across the entire Google ecosystem, including Workspace and Photos.

- Late 2024: The rollout of the personalized image experience begins, marking the return and evolution of Gemini’s visual capabilities with a focus on personal relevance and accuracy.

Data and Market Context

The move toward personalized AI comes at a time when the generative AI market is experiencing explosive growth. According to data from Bloomberg Intelligence, the generative AI market is poised to grow to $1.3 trillion by 2032. Google’s strategy involves leveraging its massive install base—specifically the 100 million-plus subscribers to Google One—to create a "moat" that generic AI providers cannot easily replicate.

By tying AI capabilities to the user’s existing digital life (emails, photos, and documents), Google creates a high-utility environment. Industry analysts suggest that "Personal Intelligence" is the key to moving AI from a novelty to a daily productivity tool. While competitors like OpenAI and Midjourney offer powerful generic generation, Google’s advantage lies in its "contextual graph," which allows the AI to know who the user is and what they care about.

Privacy and Security Frameworks

The integration of private photo libraries into an AI model naturally raises significant privacy concerns. In response, Google has reiterated its core privacy commitments. The company has explicitly stated that the Gemini app does not directly train its core foundational models on a user’s private Google Photos library. Instead, the system uses a limited set of information—such as specific prompts and model responses—to improve functionality without exposing private imagery to the broader training set.

Furthermore, the connection between Google Photos and Gemini is an opt-in experience. Users must manually authorize the link between their library and the AI, and this access can be revoked at any time through the app’s settings. This "privacy-by-design" approach is intended to reassure users that their personal memories remain secure while still benefiting from the convenience of AI-assisted creativity.
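The opt-in and revocation behavior described here amounts to a simple gate in front of the photo library. The sketch below is a hypothetical illustration, not Google's code: the `PhotosLink` class, its methods, and the dictionary used as a stand-in library are invented for this example.

```python
class PhotosLink:
    """Hypothetical consent gate between an assistant and a photo library."""

    def __init__(self):
        # Access is off until the user explicitly opts in.
        self._authorized = False

    def authorize(self):
        self._authorized = True

    def revoke(self):
        self._authorized = False

    def select_references(self, library, labels):
        """Return one reference photo id per requested label.

        Returns an empty list when the user has not authorized access,
        so no photo is ever read without consent. The returned ids are
        also what a "Sources"-style view could display to the user.
        """
        if not self._authorized:
            return []
        return [library[label][0] for label in labels if library.get(label)]


library = {"Me": ["p1", "p2"], "Mom": ["p3"]}
link = PhotosLink()

before_opt_in = link.select_references(library, ["Me", "Mom"])
link.authorize()
after_opt_in = link.select_references(library, ["Me", "Mom"])
link.revoke()
after_revoke = link.select_references(library, ["Me", "Mom"])
```

Keeping the check inside the single function that reads the library means revoking access takes effect on the very next request, which is the property the opt-in design is meant to guarantee.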

Broader Implications for the AI Industry

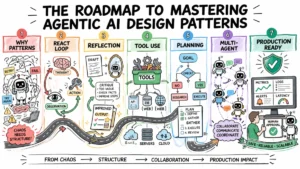

The rollout of these features has significant implications for the broader technology landscape. First, it signals the arrival of the "AI Agent" era, where software no longer just executes commands but understands context and anticipates needs. Second, it sets a new standard for image generation, moving the industry toward "identity-consistent" AI, where the software can maintain the same face or style across multiple generations.

The initial rollout is limited to Google AI Plus, Pro, and Ultra subscribers in the United States, suggesting a tiered monetization strategy where the most advanced "personal" features are reserved for paying members. However, Google has confirmed plans to bring these capabilities to Gemini in Chrome on desktop and to a wider global audience in the near future.

As AI becomes more integrated into personal data, the boundary between a search tool and a personal assistant continues to blur. Google’s latest update to Gemini suggests a future where AI is not just a window into the world’s information, but a mirror reflecting the user’s own life and creativity. The success of this initiative will likely depend on how well Google balances the undeniable convenience of Personal Intelligence with the stringent privacy demands of its global user base.