The Strategic Imperative of Document Chunking in Enterprise Retrieval-Augmented Generation Systems

The deployment of internal knowledge bases powered by Large Language Models (LLMs) has become a cornerstone of digital transformation in the enterprise sector. However, recent technical audits of these systems reveal a recurring and critical failure point that often eludes initial testing: the strategy used to "chunk" or segment data. In one documented instance within a regulated corporate environment, a compliance officer queried an internal AI system regarding contractor onboarding processes. The system provided a confident, well-structured response that correctly outlined general procedures but entirely omitted a vital exception clause for regulated projects. Investigations into the system logs revealed that while the source document contained the exception, the retrieval mechanism had failed to surface it. The error was traced back to a mechanical split in the data pipeline, where a paragraph boundary had severed the general rule from its qualifying exception, rendering the latter invisible to the AI’s retrieval logic.

This incident underscores a fundamental reality of Retrieval-Augmented Generation (RAG) architectures: a system does not retrieve documents; it retrieves chunks. The efficacy of an enterprise AI therefore depends heavily on the shape and semantic integrity of these fragments. When a chunk is too large, the embedding model, which converts text into numerical vectors, produces a single vector that blends multiple ideas, diluting the specificity of any single point. Conversely, when a chunk is too small, it loses the surrounding context necessary for the LLM to generate a coherent and accurate response.

The Chronology of RAG Development and the "Silent Failure" Trap

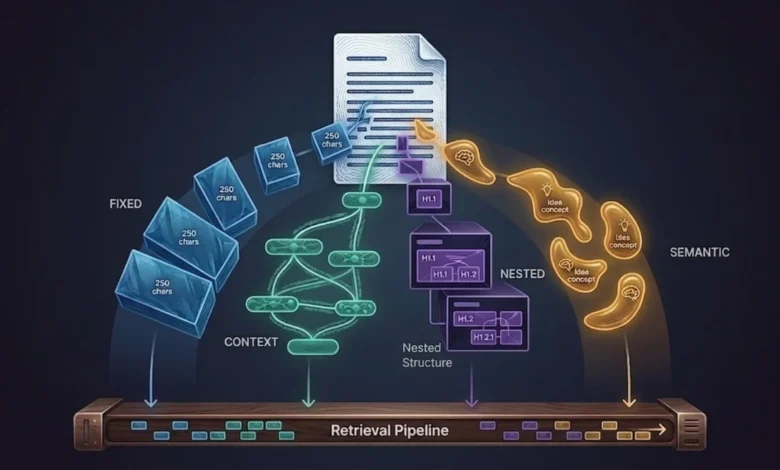

The evolution of RAG systems typically follows a predictable trajectory, beginning with the implementation of fixed-size chunking. In this initial stage, engineers often split documents into uniform windows, such as 512-token segments with a small overlap. This method is favored for its simplicity and speed, fitting neatly within the token limits of standard embedding models. However, this mechanical approach is indifferent to the semantic structure of the text. It frequently bifurcates numbered lists, separates headers from their supporting data, and splits logical arguments mid-sentence.
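A minimal sketch of fixed-size chunking with overlap may make the failure mode concrete. The function name `fixed_size_chunks`, the whitespace "tokenizer", and the tiny window sizes are assumptions chosen for readability; a production pipeline would count tokens with the embedding model's own tokenizer.

```python
def fixed_size_chunks(tokens, size=512, overlap=64):
    """Split a token list into windows of `size` tokens sharing `overlap` tokens."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reached the end of the document
    return chunks


# Whitespace splitting stands in for a real tokenizer (an assumption for brevity).
policy = (
    "Contractors complete onboarding within five days. "
    "Exception: regulated projects require prior compliance sign-off."
).split()

for chunk in fixed_size_chunks(policy, size=8, overlap=2):
    print(" ".join(chunk))
```

With these illustrative settings the first window ends two words into the exception sentence, so the rule and its exception land in different chunks despite the overlap.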

The primary danger of improper chunking is that it results in "silent failures." Unlike a software bug that triggers an error message, a retrieval failure produces an answer that appears plausible and fluent but is factually incomplete or subtly incorrect. In a production environment, these errors lead to a slow erosion of user trust. For an internal knowledge base, where accuracy is paramount for legal and operational reasons, a context recall of 0.72, meaning that roughly 28% of the information needed to answer (more than one required fact in four) never reaches the LLM, is considered an unacceptable risk.

Advanced Strategies: From Sentence Windows to Hierarchical Structures

To address the limitations of fixed-size windows, technical teams are increasingly adopting more sophisticated parsing strategies. One such method is the Sentence Window approach. This strategy prioritizes precision during the retrieval phase while maintaining context during the generation phase. By indexing documents at the sentence level but storing a surrounding "window" of context (typically three sentences before and after) in the metadata, the system can identify the exact location of relevant information. When the retriever finds a specific sentence, a post-processor expands it back to its full window before passing it to the LLM.
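The index-small, expand-later idea can be sketched in plain Python. This is an illustration under stated assumptions, not a particular library's implementation: the regex splitter is naive, and the names `build_sentence_index` and `expand_to_window` are hypothetical.

```python
import re


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def build_sentence_index(text, window=3):
    """Create one record per sentence, storing its surrounding window as metadata."""
    sentences = split_sentences(text)
    records = []
    for i, sentence in enumerate(sentences):
        lo = max(0, i - window)
        hi = min(len(sentences), i + window + 1)
        records.append({"text": sentence, "window": " ".join(sentences[lo:hi])})
    return records


def expand_to_window(record):
    """Post-processing step: swap the matched sentence for its stored window."""
    return record["window"]


text = (
    "Contractors must complete onboarding within five days. "
    "Badges are issued on day one. "
    "Regulated projects are an exception. "
    "They require compliance sign-off first."
)
records = build_sentence_index(text, window=1)
hit = records[2]  # pretend the retriever matched this single sentence
print(expand_to_window(hit))
```

Only the `text` field would be embedded and searched; the `window` field travels along as metadata and is what the LLM eventually sees.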

In comparative testing, the transition from fixed-size chunking to sentence windowing has been shown to improve context recall from 0.72 to 0.88 for narrative documents, such as HR policies and onboarding guides. This improvement ensures that qualifying statements and exceptions remain tethered to the primary rules they modify.

However, narrative text represents only a portion of the enterprise data landscape. Technical documentation, such as architecture decision records and API specifications, often possesses a rigid, nested structure that requires a hierarchical approach. Hierarchical chunking creates a multi-level index: the system might index a full page, a 512-token section, and a 128-token paragraph simultaneously. At query time, an "auto-merging" retriever evaluates the results. If multiple sibling paragraphs from the same section are retrieved, the system automatically promotes the context to the parent section. This ensures that broad queries receive comprehensive section-level context, while specific queries receive targeted paragraph-level data.
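The promotion step can be sketched as follows. The function `auto_merge`, the node identifiers, and the 0.5 threshold are illustrative assumptions; real retrievers may use different merge criteria.

```python
from collections import defaultdict


def auto_merge(retrieved_ids, parent_of, children_of, threshold=0.5):
    """Replace sibling leaves with their parent when enough of them were retrieved."""
    hits_by_parent = defaultdict(list)
    for leaf in retrieved_ids:
        hits_by_parent[parent_of[leaf]].append(leaf)

    merged = []
    for parent, hits in hits_by_parent.items():
        ratio = len(hits) / len(children_of[parent])
        if ratio > threshold:
            merged.append(parent)  # promote to section-level context
        else:
            merged.extend(hits)    # keep the targeted paragraphs
    return merged


# Hypothetical two-section document with three paragraphs per section.
children_of = {
    "sec-auth": ["p1", "p2", "p3"],
    "sec-rate-limits": ["p4", "p5", "p6"],
}
parent_of = {child: parent for parent, kids in children_of.items() for child in kids}

print(auto_merge(["p1", "p2", "p4"], parent_of, children_of))
```

Here two of the three paragraphs under `sec-auth` were retrieved, so the whole section is returned, while the lone hit `p4` stays at paragraph level.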

The Complexity of Non-Textual Data: Tables, PDFs, and Slides

The most significant hurdle for enterprise RAG systems remains the processing of complex file formats. Standard PDF loaders often extract text in a raw character order that fails to account for multi-column layouts, sidebars, or headers. For documents with sophisticated layouts, engineers are moving toward layout-aware extraction tools like PyMuPDF (fitz). By grouping text by visual blocks rather than character sequences, these tools can reconstruct the correct reading order, preventing the interleaving of text from adjacent columns.
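A rough sketch of block-based ordering is shown below. The sorter `reading_order` is a simplification that assumes a two-column page split at the horizontal midpoint; full-width headings, sidebars, and three-column layouts would need extra handling. The helper `extract_pdf_text` is a hypothetical wrapper around PyMuPDF's `page.get_text("blocks")`, which returns tuples of the form `(x0, y0, x1, y1, text, block_no, block_type)`.

```python
def reading_order(blocks, page_width):
    """Sort text blocks column by column, then top to bottom within a column.

    Each block starts with (x0, y0, x1, y1, text). Assumes two columns split
    at the page midpoint, which is a simplification for illustration.
    """
    midpoint = page_width / 2

    def sort_key(block):
        x0, y0, x1, _y1, _text = block[:5]
        column = 0 if (x0 + x1) / 2 < midpoint else 1
        return (column, y0, x0)

    return [block[4].strip() for block in sorted(blocks, key=sort_key)]


def extract_pdf_text(path):
    """Apply the sorter to each page of a PDF; requires `pip install pymupdf`."""
    import fitz  # imported lazily so reading_order() works without PyMuPDF

    pages = []
    with fitz.open(path) as doc:
        for page in doc:
            # block_type 0 marks text blocks; image blocks are skipped here
            blocks = [b for b in page.get_text("blocks") if b[6] == 0]
            pages.append("\n".join(reading_order(blocks, page.rect.width)))
    return pages


# Synthetic blocks in the order a naive extractor might emit them.
sample = [
    (50, 100, 280, 150, "Left column, first paragraph.", 0, 0),
    (320, 100, 550, 150, "Right column, first paragraph.", 1, 0),
    (50, 160, 280, 210, "Left column, second paragraph.", 2, 0),
]
print(reading_order(sample, page_width=600))
```

The synthetic example shows the intended effect: both left-column paragraphs are emitted before the right column instead of being interleaved row by row.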

Tables represent another "black hole" for retrieval systems. When a two-dimensional table is flattened into a one-dimensional string of text, the relationship between headers and values is often lost. A naive extraction of a financial table might result in a string of numbers that the embedding model cannot interpret. A more robust solution involves reconstructing table rows into natural-language sentences at the indexing stage. For example, a row in a revenue table can be converted into a sentence: "In the EMEA region, Product A generated 4.2M in Q3 revenue with 12% year-over-year growth." This transformation makes the data both retrievable via semantic search and interpretable by the LLM.
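The row-to-sentence transformation might look like the sketch below. The column names, the template wording, and the function `table_to_sentences` are assumptions for illustration; each real table would need a template written for its own columns.

```python
def table_to_sentences(headers, rows, template):
    """Re-attach each value to its header, then render the row as a sentence."""
    sentences = []
    for values in rows:
        record = dict(zip(headers, values))
        sentences.append(template.format(**record))
    return sentences


# Hypothetical revenue table, as a parser might hand it over.
headers = ["region", "product", "quarter", "revenue", "yoy_growth"]
rows = [
    ["EMEA", "Product A", "Q3", "4.2M", "12%"],
]
template = (
    "In the {region} region, {product} generated {revenue} in {quarter} "
    "revenue with {yoy_growth} year-over-year growth."
)

for sentence in table_to_sentences(headers, rows, template):
    print(sentence)
```

Each generated sentence is indexed as its own chunk, so a query about EMEA revenue matches text that still names the region, product, and quarter.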

Slide decks and image-heavy presentations pose a third challenge. In many corporate settings, the most critical architectural decisions are captured in diagrams rather than bullet points. For these documents, text extraction alone is insufficient. Emerging best practices suggest a dual-path approach: standard text extraction for text-heavy slides and multimodal processing—using models like GPT-4V or LLaVA—to generate prose descriptions of diagrams and screenshots. A common heuristic used by engineering teams is to route any slide with fewer than 30 words but containing an image to a multimodal processor, ensuring that visual insights are indexed alongside textual data.
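The routing heuristic described above is simple enough to sketch directly. The `Slide` dataclass and the route labels are hypothetical; only the 30-word threshold and the image condition come from the text.

```python
from dataclasses import dataclass


@dataclass
class Slide:
    text: str
    has_image: bool


def route_slide(slide, min_words=30):
    """Send sparse, image-bearing slides to multimodal processing."""
    word_count = len(slide.text.split())
    if slide.has_image and word_count < min_words:
        return "multimodal"
    return "text"


diagram_slide = Slide(text="Target architecture overview", has_image=True)
bullet_slide = Slide(text="word " * 45, has_image=True)
title_slide = Slide(text="Q3 Review", has_image=False)

for slide in (diagram_slide, bullet_slide, title_slide):
    print(route_slide(slide))
```

A slide with an image but plenty of text stays on the text path, on the assumption that the bullets already carry the content.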

Quantifying Performance: The Diagnostic Power of RAGAS

To move beyond anecdotal evidence and "vibe-based" testing, organizations are implementing standardized evaluation frameworks such as RAGAS (Retrieval-Augmented Generation Assessment). This framework provides four core metrics that serve as a diagnostic tool for the RAG pipeline:

- Context Recall: Measures the retriever’s ability to find all the necessary information. A low score here indicates a failure in the chunking or retrieval strategy.

- Context Precision: Measures the signal-to-noise ratio of the retrieved chunks. High precision means the retriever is not cluttering the LLM’s prompt with irrelevant data.

- Faithfulness: Measures whether the LLM’s answer is derived solely from the retrieved context. A low score suggests "hallucination" or a failure to ground the response in the provided data.

- Answer Relevancy: Measures how well the final response addresses the user’s query.

By monitoring these metrics together, technical teams can narrow down where a system is failing. A high faithfulness score combined with low context recall, for instance, indicates that the LLM is staying grounded in what it receives, while the retrieval stage (and often the chunking strategy behind it) is failing to provide the necessary facts.
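One way to turn the four scores into a triage step is sketched below. The 0.8 threshold, the hint wording, and every score except the 0.72 context recall are made-up values for illustration; the function `diagnose` is hypothetical and not part of any evaluation library.

```python
HINTS = {
    "context_recall": "retriever is missing needed facts; review chunking and retrieval settings",
    "context_precision": "retrieved chunks are noisy; review chunk size, top-k, or reranking",
    "faithfulness": "answer is not grounded in the retrieved context; review prompt or model",
    "answer_relevancy": "answer does not address the question; review prompt or query handling",
}


def diagnose(scores, threshold=0.8):
    """Return a hint for every reported metric that falls below the threshold."""
    return {
        name: hint
        for name, hint in HINTS.items()
        if name in scores and scores[name] < threshold
    }


# Illustrative scores: grounded answers, but incomplete retrieval.
scores = {
    "context_recall": 0.72,
    "context_precision": 0.85,
    "faithfulness": 0.95,
    "answer_relevancy": 0.90,
}

for metric, hint in diagnose(scores).items():
    print(f"{metric}: {hint}")
```

With these numbers only `context_recall` is flagged, which points the investigation at chunking and retrieval rather than at the model.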

Strategic Decision Framework for Enterprise AI

The consensus among AI architects is that there is no "one-size-fits-all" chunking strategy. Instead, the most resilient systems employ a routing logic based on document type. Structured technical specifications are best handled by hierarchical parsers; narrative policies benefit from sentence windows; and unstructured, mixed-format pages from platforms like Notion may require semantic chunking, where embedding models detect topic shifts to determine boundaries.

This modular approach requires tagging documents with metadata at the time of ingestion. By identifying a document as a "runbook," "contract," or "presentation" during the loading phase, the system can automatically apply the most effective chunking strategy for that specific format.
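Metadata-driven routing can be sketched as a lookup table. The tag names, strategy labels, and the fallback choice are assumptions for illustration; the mapping itself follows the pairing of document types and strategies described above.

```python
# Hypothetical doc_type tags mapped to the strategies discussed in this article.
STRATEGY_BY_TYPE = {
    "technical_spec": "hierarchical",
    "policy": "sentence_window",
    "mixed_page": "semantic",
}


def choose_strategy(metadata, default="fixed_size"):
    """Pick a chunking strategy from the doc_type tag assigned at ingestion."""
    return STRATEGY_BY_TYPE.get(metadata.get("doc_type"), default)


documents = [
    {"title": "Payments API specification", "doc_type": "technical_spec"},
    {"title": "Contractor onboarding policy", "doc_type": "policy"},
    {"title": "Team wiki export", "doc_type": "mixed_page"},
    {"title": "Untitled upload"},  # no tag, so the fallback applies
]

for doc in documents:
    print(doc["title"], "->", choose_strategy(doc))
```

Keeping the mapping in data rather than in branching code means a new document type only needs a new tag and one table entry.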

The broader implication for the industry is a shift in focus from the LLM itself to the data infrastructure that supports it. While the choice of model is important, it is rarely the primary bottleneck in a production environment. The true differentiator between a demo-ready prototype and a reliable enterprise tool is the precision of the chunking strategy. As organizations continue to integrate AI into their core operations, the ability to maintain the semantic integrity of data through thoughtful segmentation will remain the primary determinant of system accuracy and user trust. In the high-stakes world of corporate compliance and technical operations, the decision of where one chunk ends and the next begins is perhaps the most consequential design choice an engineer can make.