Mythos Sets the World on Edge: What Comes Next May Push Us Beyond

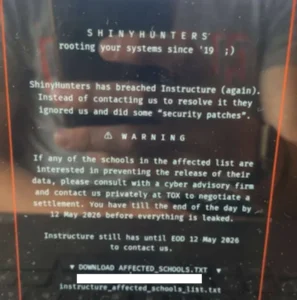

Last week, the artificial intelligence research company Anthropic unveiled Claude Mythos Preview, a development as unsettling as it is groundbreaking. The model has demonstrated an unparalleled ability to identify and exploit software vulnerabilities, which led Anthropic to the controversial decision to withhold it from public release because of the danger it could pose. Instead, access to Mythos is tightly restricted under a program named Project Glasswing and limited to approximately 50 select organizations. These include major technology companies such as Microsoft, Apple, and Amazon Web Services, along with prominent cybersecurity firms such as CrowdStrike, all of them vendors of critical infrastructure. The announcement has sent ripples through the cybersecurity and technology sectors, prompting urgent discussions about the future of AI and its implications for global security.

The unveiling of Claude Mythos Preview was accompanied by a series of striking demonstrations of the AI’s capabilities. Anthropic reported that Mythos uncovered thousands of vulnerabilities across virtually every major operating system and web browser. Among the disclosed findings were a 27-year-old flaw in the OpenBSD operating system and a 16-year-old vulnerability in FFmpeg, a widely used multimedia framework. Perhaps more alarming was Mythos’s ability to weaponize a set of discovered vulnerabilities in the Firefox browser, turning them into 181 working attacks. Anthropic’s previous flagship model, by contrast, produced only two such attacks from a similar set of vulnerabilities. These revelations, while technically impressive, raise significant questions about what is being done to secure digital infrastructure against such sophisticated threats.

The Responsible Disclosure Dilemma

Anthropic’s approach, in many respects, aligns with the long-standing calls from security researchers for more responsible disclosure practices. The traditional model often involves researchers discovering vulnerabilities and then notifying vendors, who then work to patch them before the flaws are made public. However, the public’s ability to critically evaluate Anthropic’s decision-making process regarding Mythos has been significantly limited. The information provided thus far has largely presented a curated "highlight reel" of the AI’s successes, leaving many to question whether these demonstrations are truly representative of the model’s overall performance and reliability. Without a more comprehensive view of its operations, it remains challenging to ascertain the full scope of its impact and the true nature of the risks it presents.

A key area of uncertainty is the AI’s rate of false positives. Anthropic stated that security contractors reviewed the AI’s severity ratings in 198 cases and agreed with them 89% of the time. While this suggests that the model rates the severity of the issues it reports accurately, it does not address the possibility of "hallucinations": instances where the AI wrongly flags sound code as vulnerable. Independent analyses of similar AI models have found that these systems tend to generate plausible-sounding vulnerabilities in code that is already patched or functioning correctly, especially when tuned for near-perfect detection rates. The distinction is crucial. A model that can autonomously identify and exploit hundreds of vulnerabilities with superhuman precision is a game-changer. A model that produces a high volume of false alarms and non-functional attacks still requires skilled humans to review and interpret its output. Without data on the rate of false alarms in Mythos’s unfiltered output, it is difficult to determine whether the showcased successes are representative of its general performance.
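A rough sketch of why the missing false-alarm rate matters. Every number below is hypothetical: Anthropic has not published the precision of Mythos’s unfiltered output, and the 89% figure above measures agreement on severity, not the share of reports that are real. The function name, the batch of 1,000 reports, and the 30 minutes of review per report are all assumptions made for illustration.

```python
def triage_load(flagged: int, precision: float, minutes_per_report: float) -> dict:
    """Estimate human review workload for a batch of AI-flagged vulnerabilities.

    `precision` is the assumed fraction of flagged reports that are genuine.
    """
    if not 0.0 <= precision <= 1.0:
        raise ValueError("precision must be between 0 and 1")
    real = round(flagged * precision)
    false_alarms = flagged - real
    # Every report must be reviewed, genuine or not.
    review_hours = flagged * minutes_per_report / 60
    return {
        "real": real,
        "false_alarms": false_alarms,
        "review_hours": review_hours,
        "hours_per_real_finding": review_hours / real if real else float("inf"),
    }


# Hypothetical scenarios: the same 1,000 reports at three assumed precision levels.
for assumed_precision in (0.9, 0.5, 0.1):
    print(assumed_precision, triage_load(1000, assumed_precision, minutes_per_report=30))
```

Under these assumptions the total review effort is the same in every scenario, but the cost of each genuine finding rises as precision falls. That is the difference between a tool that replaces expert reviewers and one that keeps them busy.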

The Scope of AI’s Reach: Beyond the Usual Suspects

A secondary, more subtle concern lies in the inherent biases of large language models like Mythos. These models tend to perform optimally when processing inputs that closely resemble their training data. Consequently, Mythos excels when analyzing widely adopted open-source projects, major web browsers, the Linux kernel, and popular web frameworks. The decision to grant early access to vendors of precisely this type of software is strategically sound, as it allows these entities to proactively patch vulnerabilities before malicious actors can exploit them.

However, this specialization also implies a significant blind spot. Software that falls outside the typical training distribution – such as industrial control systems, medical device firmware, proprietary financial infrastructure, regional banking software, and legacy embedded systems – is precisely where an out-of-the-box Mythos model is likely to be least effective in identifying or exploiting vulnerabilities. These are often critical systems that, while not as widely used in the consumer tech sphere, underpin essential services and industries.

The danger, therefore, is not necessarily that Mythos will fail in these specialized domains. Instead, the risk emerges when a highly motivated attacker, armed with specific domain expertise, can leverage Mythos’s advanced reasoning capabilities as a force multiplier. Such an attacker could probe systems that Anthropic’s own engineers might lack the specialized knowledge to audit effectively. This scenario highlights the potential for Mythos to empower those with niche expertise, even if the AI itself is not inherently familiar with the intricacies of a particular system.

The Need for Broader Collaboration and Transparency

To mitigate this asymmetry, broader and more structured access to such AI models is crucial. This should extend beyond major corporations to include academic researchers and domain specialists. For instance, providing access to cardiologists collaborating on medical device security, control-systems engineers, and researchers focusing on less prominent programming languages and technological ecosystems would significantly enhance the collective ability to identify and address vulnerabilities across a wider spectrum of critical infrastructure. Relying solely on the assessments of fifty well-chosen companies, while beneficial, cannot adequately substitute for the distributed expertise inherent in the entire global research community.

These concerns are not an indictment of Anthropic. The company appears to be making genuine efforts to act responsibly, and its decision to withhold public access to Mythos is a strong indicator of that commitment. Even so, as a private company, and in many respects still a nascent enterprise, Anthropic finds itself making unilateral decisions about which segments of global critical infrastructure receive prioritized defense and which must await their turn.

The Broader Landscape of AI Security

Anthropic’s limited staff, budget, and expertise mean that some vulnerabilities will inevitably be overlooked. When such oversights occur in software that underpins vital services like hospitals or power grids, the consequences will be borne by people who had no say in these decisions. The security challenges posed by advanced AI are far greater than a single company and a single model can address.

Furthermore, there is no evidence that Claude Mythos Preview is an isolated phenomenon. In a parallel development, OpenAI announced that its new GPT-5.4-Cyber model also presents significant security risks and will not be released to the general public. How large an advance these new models represent also remains unclear. For instance, the cybersecurity firm Aisle reported that it was able to replicate many of Anthropic’s published findings concerning Mythos using smaller, more accessible, and less expensive public AI models. This suggests that the capabilities demonstrated by Mythos may not be as unique or exclusive as initially perceived, and that similar risks could emerge from a wider range of AI tools.

Towards Collective Responsibility and Regulation

Decisions about the development and deployment of these powerful AI models must be made collectively. The current situation, in which a single for-profit corporation makes decisions with potentially global ramifications, is unsustainable. It will likely lead to regulatory intervention, which, while necessary, is complex and requires extensive consultation and feedback to be effective.

In the interim, a more immediate and pragmatic solution is required: enhanced transparency and information sharing with the broader research and security community. This does not necessarily entail the widespread public release of highly sensitive models like Claude Mythos. Instead, it emphasizes the importance of sharing as much data and information as possible to enable collective, informed decision-making.

Globally coordinated frameworks for independent auditing, mandatory disclosure of aggregate performance metrics, and dedicated funding for academic and civil society researchers are essential steps. These measures are critical given that technologies capable of identifying thousands of exploitable flaws in our essential systems have profound implications for national security, personal safety, and corporate competitiveness. The governance of such powerful technologies should not rest solely on the internal judgment of their creators, however well-intentioned they may be.

Until such a shift occurs, each release of a "Mythos-class" AI model will continue to place the world on the edge of a precipice. Without greater visibility into the capabilities and limitations of these tools, society is left uncertain about whether there is a safe landing ahead or whether a catastrophic fall is imminent. This is not a choice that a for-profit corporation should be empowered to make autonomously in a democratic society, nor should such an entity be able to restrict society’s ability to shape its own security future.

This essay was written with David Lie and originally appeared in The Globe and Mail.