Bleeding Llama and Persistent Code Execution: Critical Vulnerabilities Uncovered in Ollama, Threatening Sensitive Data and System Integrity

Cybersecurity researchers have disclosed a series of critical security vulnerabilities affecting Ollama, a widely adopted open-source framework for running large language models (LLMs) locally. The discoveries highlight significant risks to users, ranging from sensitive data exfiltration to persistent code execution, underscoring the need for immediate patching and enhanced security practices. The flaws, comprising the memory-leak bug dubbed "Bleeding Llama" and a separate pair of Windows issues that enable persistent code execution, expose an estimated hundreds of thousands of servers globally to potential exploitation.

The "Bleeding Llama" Vulnerability: A Gateway to Memory Leakage

The most prominent of these discoveries, codenamed "Bleeding Llama," is a critical out-of-bounds read flaw tracked as CVE-2026-7482, with a CVSS score of 9.1. Uncovered by researchers at Cyera, it can allow a remote, unauthenticated attacker to read the entire process memory of an affected Ollama server. The implications are far-reaching, as this memory could contain highly sensitive information, including API keys, proprietary code, confidential customer contracts, and even ongoing user conversation data.

Ollama’s popularity stems from its ability to democratize the use of powerful LLMs by enabling them to run on local hardware, bypassing the need for expensive cloud infrastructure. With over 171,000 stars and more than 16,100 forks on GitHub, the project has garnered a substantial user base. The "Bleeding Llama" vulnerability specifically resides within the GGUF model loader component of Ollama, affecting versions prior to 0.17.1.

The technical root of the issue lies in Ollama’s handling of GGUF (GPT-Generated Unified Format) files, a format designed for efficient local storage and execution of LLMs, analogous to formats like PyTorch’s .pt/.pth, safetensors, and ONNX. The vulnerability is triggered when the /api/create endpoint processes a specially crafted GGUF file in which the declared tensor offset and size are deliberately set to exceed the actual length of the file. During quantization, in the code paths located in fs/ggml/gguf.go and server/quantization.go (WriteTo()), the server then attempts to read data beyond its allocated heap buffer. This is exacerbated by Ollama’s use of Go’s unsafe package when creating models from GGUF files, which bypasses the language’s standard memory safety guarantees.
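The missing check described above can be illustrated with a short sketch. This is not Ollama's code or its actual fix: the TensorInfo type, its field names, and validate_tensor_bounds are hypothetical, and the example only shows the general idea of rejecting a file whose declared tensor ranges extend past its real length.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TensorInfo:
    """Hypothetical, simplified view of one tensor entry in a model file header."""
    name: str
    offset: int  # byte offset of the tensor data, relative to the data section
    size: int    # declared byte length of the tensor data


def validate_tensor_bounds(tensors, data_start, file_size):
    """Raise ValueError if any declared tensor range falls outside the file."""
    if data_start < 0 or data_start > file_size:
        raise ValueError("data section starts outside the file")
    available = file_size - data_start
    for t in tensors:
        if t.offset < 0 or t.size < 0:
            raise ValueError(f"{t.name}: negative offset or size")
        # Compare against the remaining space instead of computing
        # offset + size first, so the same logic stays safe in languages
        # with fixed-width integers that can overflow.
        if t.offset > available or t.size > available - t.offset:
            raise ValueError(f"{t.name}: declared range exceeds file length")
```

Running a check of this kind before any tensor data is read means a malformed header is rejected up front rather than being discovered, or missed, deep inside the quantization path.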

In one attack scenario, an attacker crafts a GGUF file that declares an excessively large tensor shape and submits it to an exposed Ollama server via the /api/create endpoint, triggering the out-of-bounds heap read. Whatever sits in the adjacent memory, such as environment variables, API keys, system prompts, and active user conversations, ends up in the resulting model artifact. The attacker completes the chain by uploading that artifact, leaked data included, through the /api/push endpoint to an attacker-controlled registry.

Dor Attias, a security researcher at Cyera, emphasized the potential impact: "An attacker can learn basically anything about the organization from your AI inference – API keys, proprietary code, customer contracts, and much more." He further noted that the risk is amplified when Ollama is integrated with other tools, as "all tool outputs flow to the Ollama server, get saved in the heap, and potentially end up in an attacker’s hands."

Timeline of Vulnerability Discovery and Disclosure

While the exact timeline of the initial discovery by Cyera is not publicly detailed, the vulnerability was officially disclosed in conjunction with the CVE assignment and the research publication.

- Prior to January 2026: The "Bleeding Llama" vulnerability existed in Ollama versions prior to 0.17.1.

- January 2026 (approximate): Cyera researchers identify and analyze the "Bleeding Llama" vulnerability.

- February 2026 (approximate): Cyera publishes its findings under the codename "Bleeding Llama"; the flaw is assigned CVE-2026-7482.

- February 2026 onwards: Users are advised to update to Ollama version 0.17.1 or later and implement recommended security measures.

Broader Impact and Mitigation Strategies for "Bleeding Llama"

The widespread adoption of Ollama, particularly in development and research environments, means that a significant number of systems could be at risk. The ability for an unauthenticated attacker to gain such deep access to an LLM server’s memory raises serious concerns about data privacy and intellectual property.

To mitigate the "Bleeding Llama" vulnerability, users are strongly advised to:

- Update Ollama: Immediately upgrade to version 0.17.1 or any subsequent releases that include the fix.

- Network Access Control: Limit network access to Ollama instances, ensuring they are not unnecessarily exposed to the public internet.

- Auditing and Isolation: Regularly audit running instances for internet exposure and isolate them behind firewalls.

- Authentication Proxies: Deploy authentication proxies or API gateways in front of Ollama instances, as the REST API lacks built-in authentication.
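As a starting point for the exposure audit recommended above, the following sketch checks whether a configured listen address is restricted to the local machine. It assumes the address comes from a setting such as Ollama's OLLAMA_HOST environment variable, which is not mentioned in the research itself; the function name is_loopback_only is hypothetical.

```python
import ipaddress
from urllib.parse import urlsplit


def is_loopback_only(host_setting: str) -> bool:
    """Return True if a host[:port] or URL-style setting binds to loopback only."""
    value = host_setting.strip()
    if "//" not in value:
        # Prefix with "//" so urlsplit treats the value as a network location.
        value = "//" + value
    host = urlsplit(value).hostname
    if not host:
        # No explicit host: treat as unknown and report it as not loopback.
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Hostnames other than "localhost" are treated as potentially exposed.
        return False
```

A value such as 0.0.0.0:11434 is reported as not loopback-only and deserves a closer look at firewall rules. The check is deliberately conservative and says nothing about reverse proxies or port forwarding that may expose a loopback listener indirectly.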

Persistent Code Execution: A Double Threat on Windows

Adding to the security concerns, researchers at Striga have detailed two distinct vulnerabilities within Ollama’s Windows update mechanism that, when chained together, can lead to persistent code execution. These flaws, which remained unpatched following their disclosure on January 27, 2026, were made public after the expiration of a 90-day disclosure period.

These vulnerabilities specifically target the Windows desktop client, which, by default, auto-starts on login and periodically checks for updates via the /api/update endpoint. The two core issues are:

- Path Traversal: This vulnerability allows an attacker to write files to arbitrary locations on the file system, including critical directories like the Windows Startup folder.

- Missing Signature Check: The update mechanism fails to adequately verify the digital signature of downloaded updates.
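The path traversal class of bug can be illustrated with a minimal containment check. This is a sketch under stated assumptions, not Ollama's updater logic: the function name safe_update_path and the staging directory used in the usage note are hypothetical. It uses Python's ntpath module so that Windows path rules apply on any platform.

```python
import ntpath  # Windows path semantics, usable on any platform


def safe_update_path(staging_dir: str, filename: str) -> str:
    """Join filename onto staging_dir, refusing results outside staging_dir."""
    base = ntpath.normpath(staging_dir)
    # normpath collapses ".." segments, so traversal shows up in the result.
    candidate = ntpath.normpath(ntpath.join(base, filename))
    try:
        common = ntpath.commonpath([base, candidate])
    except ValueError as exc:
        # Raised, for example, when the two paths are on different drives.
        raise ValueError(f"refusing path outside staging directory: {filename!r}") from exc
    inside = ntpath.normcase(common) == ntpath.normcase(base)
    is_base_itself = ntpath.normcase(candidate) == ntpath.normcase(base)
    if not inside or is_base_itself:
        raise ValueError(f"refusing path outside staging directory: {filename!r}")
    return candidate
```

With a hypothetical staging directory of C:\Users\u\AppData\Local\Ollama\updates, a plain name like OllamaSetup.exe is accepted, while a server-supplied name containing ..\ segments that lead toward the Startup folder, or an absolute path on another drive, is rejected before anything is written.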

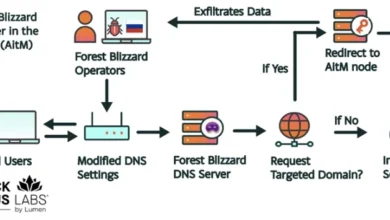

The attack chain requires an attacker to control an update server that the victim’s Ollama client can reach. By manipulating the OLLAMA_UPDATE_URL environment variable, an attacker can redirect the Ollama client to a compromised server. If the AutoUpdateEnabled setting is active (which is the default), the client will attempt to download an update.

Exploitation Scenario:

An attacker could craft a malicious executable and host it on a server that Ollama’s Windows client is configured to query for updates. The path traversal vulnerability allows the attacker to specify a destination for this executable that falls outside the normal update directory, such as the Windows Startup folder. Crucially, the missing signature check means that Ollama writes this malicious file and leaves it in place without verifying its authenticity, so Windows runs it at the next login.

Bartłomiej "Bartek" Dmitruk, co-founder of Striga, explained the impact: "The path traversal writes attacker-chosen executables into the Windows Startup folder. The missing signature verification keeps them there: the post-write cleanup that would remove unsigned files on a working updater is a no-op on Windows. On the next login, Windows runs whatever was left behind."
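The "verify, then clean up on failure" behavior that Dmitruk describes as missing on Windows can be sketched in a few lines. Note the substitution: the example below pins a SHA-256 digest rather than verifying a code signature, since signature verification is platform-specific. The function name and the idea of a trusted expected digest are assumptions made for illustration only.

```python
import hashlib
import hmac
import os


def keep_only_if_digest_matches(path: str, expected_sha256_hex: str) -> bool:
    """Return True if the file matches the expected digest; delete it otherwise."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read in 1 MiB chunks so large installers are not loaded into memory.
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    if hmac.compare_digest(digest.hexdigest(), expected_sha256_hex.lower()):
        return True
    # Fail closed: an unverified file is removed rather than left on disk.
    os.remove(path)
    return False
```

The important property is that the failure branch actually removes the file. A check whose cleanup step does nothing leaves the attacker's payload exactly where it was written.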

The consequences of this chained exploitation are severe. It enables persistent, silent code execution at the privilege level of the user running Ollama. This could allow attackers to deploy various malicious payloads, including:

- Reverse Shells: Gaining interactive command-line access to the compromised system.

- Info-Stealers: Exfiltrating sensitive data such as browser secrets, SSH keys, and other credentials.

- Droppers: Installing further malware or establishing more sophisticated persistence mechanisms.

Timeline of the Windows Update Vulnerabilities

- January 27, 2026: Researchers at Striga disclose two vulnerabilities in Ollama’s Windows update mechanism.

- January 27, 2026 – April 27, 2026: A 90-day disclosure period elapses with the vulnerabilities remaining unpatched.

- April 27, 2026 (approximate): Striga publishes their findings detailing the vulnerabilities and their potential for persistent code execution. CERT Polska takes over the coordinated disclosure process.

- Ongoing: Users are urged to apply recommended mitigation strategies until official patches are released.

Impact and Mitigation for Windows Update Flaws

The vulnerabilities affect Ollama for Windows versions ranging from 0.12.10 through 0.17.5 (and potentially up to 0.22.0, as noted by Dmitruk). The persistence achieved through this exploit chain is particularly concerning, as it ensures that malicious code executes every time the user logs into their Windows system. While removing the dropped binary from the Startup folder can stop the immediate persistence, the underlying vulnerabilities remain unaddressed in affected versions.
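For administrators checking many machines, the confirmed range above can be tested programmatically. The sketch below is illustrative only: the function names are hypothetical, and it handles plain dotted version numbers, not pre-release suffixes.

```python
FIRST_AFFECTED = (0, 12, 10)
LAST_CONFIRMED_AFFECTED = (0, 17, 5)


def parse_version(text: str) -> tuple:
    """Turn '0.17.5' into (0, 17, 5); a leading 'v' is tolerated."""
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


def in_confirmed_affected_range(version: str) -> bool:
    """True for Windows client versions 0.12.10 through 0.17.5 inclusive."""
    return FIRST_AFFECTED <= parse_version(version) <= LAST_CONFIRMED_AFFECTED
```

Comparing integer tuples rather than raw strings matters here, because a plain string comparison would rank "0.12.9" above "0.12.10". Since later releases up to 0.22.0 may also be affected, a negative result for a version above 0.17.5 should not be read as a clean bill of health.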

To mitigate these specific Windows update vulnerabilities, users are advised to:

- Disable Automatic Updates: Temporarily turn off the automatic update feature within Ollama for Windows.

- Remove Startup Folder Shortcuts: Manually delete any Ollama shortcuts from the Windows Startup folder (%APPDATA%\Microsoft\Windows\Start Menu\Programs\Startup). This action prevents the silent on-login execution pathway.

- Update Promptly: As soon as official patches are released by Ollama, users should update their installations to the latest secure version.
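A quick review of the Startup folder can also be scripted. The sketch below is a hypothetical helper, not an official tool: it flags any file whose name mentions Ollama, plus any file that is not an ordinary .lnk shortcut, and leaves the decision to delete to the user.

```python
import os


def review_startup_folder(startup_dir: str) -> list:
    """Return sorted names of Startup folder files that deserve a manual look."""
    flagged = []
    with os.scandir(startup_dir) as entries:
        for entry in entries:
            if not entry.is_file():
                continue
            name = entry.name.lower()
            if name == "desktop.ini":
                continue  # standard Windows folder metadata
            # Flag Ollama-related entries and anything that is not a shortcut,
            # such as an executable dropped directly into the folder.
            if "ollama" in name or not name.endswith(".lnk"):
                flagged.append(entry.name)
    return sorted(flagged)
```

On Windows, the folder path can be obtained by passing the %APPDATA% path given above to os.path.expandvars. The heuristic is intentionally broad, so legitimate non-shortcut entries may be flagged as well.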

The discovery of these multiple critical vulnerabilities highlights a growing challenge in the rapidly evolving landscape of AI and machine learning technologies. As these tools become more integrated into daily workflows, their security posture becomes paramount. The Ollama vulnerabilities serve as a stark reminder that even popular and open-source projects can harbor significant security risks, necessitating a proactive and vigilant approach from both developers and users to ensure the integrity and security of sensitive data and systems. The cybersecurity community awaits further updates from Ollama regarding the patching of these critical issues.