inference

Artificial Intelligence

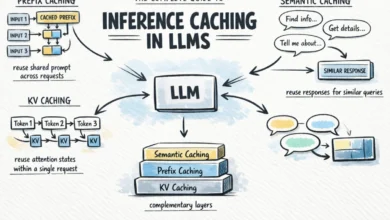

The Complete Guide to Inference Caching in Large Language Models: Strategies for Optimizing Performance and Cost

As large language models (LLMs) transition from experimental novelties to the backbone of enterprise-grade applications, the twin challenges of high…