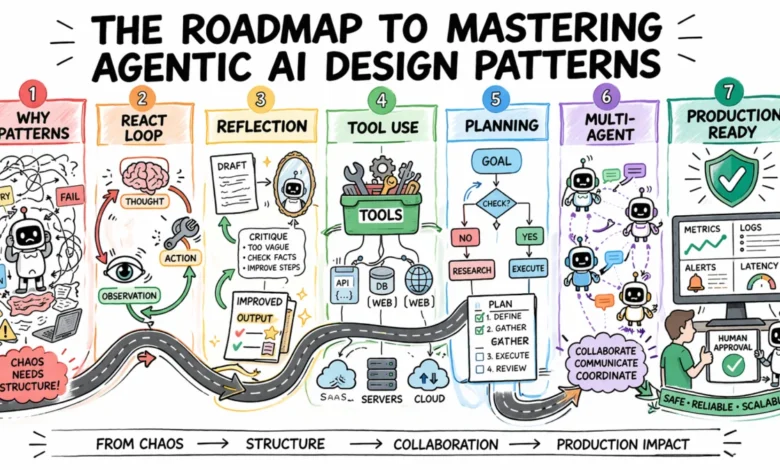

Mastering Agentic AI Design Patterns: A Roadmap to Building Reliable and Scalable Systems

Large Language Models (LLMs) have evolved rapidly from simple chat interfaces into autonomous "agentic" systems capable of reasoning, using tools, and executing multi-step workflows. However, as organizations move from experimental prototypes to production-grade deployments, they encounter a significant hurdle: the unpredictability of agent behavior. To address this, a set of standardized agentic design patterns has emerged, providing an architectural framework for making AI agents not only capable but also reliable, debuggable, and scalable.

The Shift from Prompt Engineering to Architectural Design

In the early stages of generative AI adoption, developers primarily focused on prompt engineering—the art of refining input text to elicit better model responses. While effective for simple queries, this approach often fails in complex, multi-step environments. When an agent enters an infinite loop, hallucinates a tool call, or fails to recover from a minor error, the solution is rarely a "better prompt." Instead, the failure is usually structural.

Industry experts from Google Cloud, Amazon Web Services (AWS), and leading AI research labs have identified that the most robust agentic systems are built on repeatable architectural templates. These templates, known as design patterns, define how an agent reasons before acting, how it evaluates its own performance, and how it interacts with external systems. By adopting these patterns, developers can move away from "black-box" systems toward transparent, more predictable workflows where every decision point is visible and measurable.

The Chronology of Agentic Logic: From Chain-of-Thought to Autonomous Agents

The development of agentic design patterns followed a rapid timeline of innovation between 2022 and 2024:

- 2022: Chain-of-Thought (CoT) Reasoning. Researchers showed that prompting a model to "think step by step" significantly improved its performance on multi-step reasoning tasks.

- Late 2022: The Birth of ReAct. The "Reasoning and Acting" (ReAct) framework was introduced, allowing models to alternate between internal reasoning steps and external tool interactions.

- Late 2023: Reflection and Self-Correction. Developers began implementing "critique loops" in which a second model call evaluates the output of the first, reportedly reducing hallucination rates by up to 30% in coding and legal tasks.

- 2024: Multi-Agent Orchestration. The current frontier involves specialized agents—each with a unique persona and toolset—working together under a coordinator to solve enterprise-scale problems.

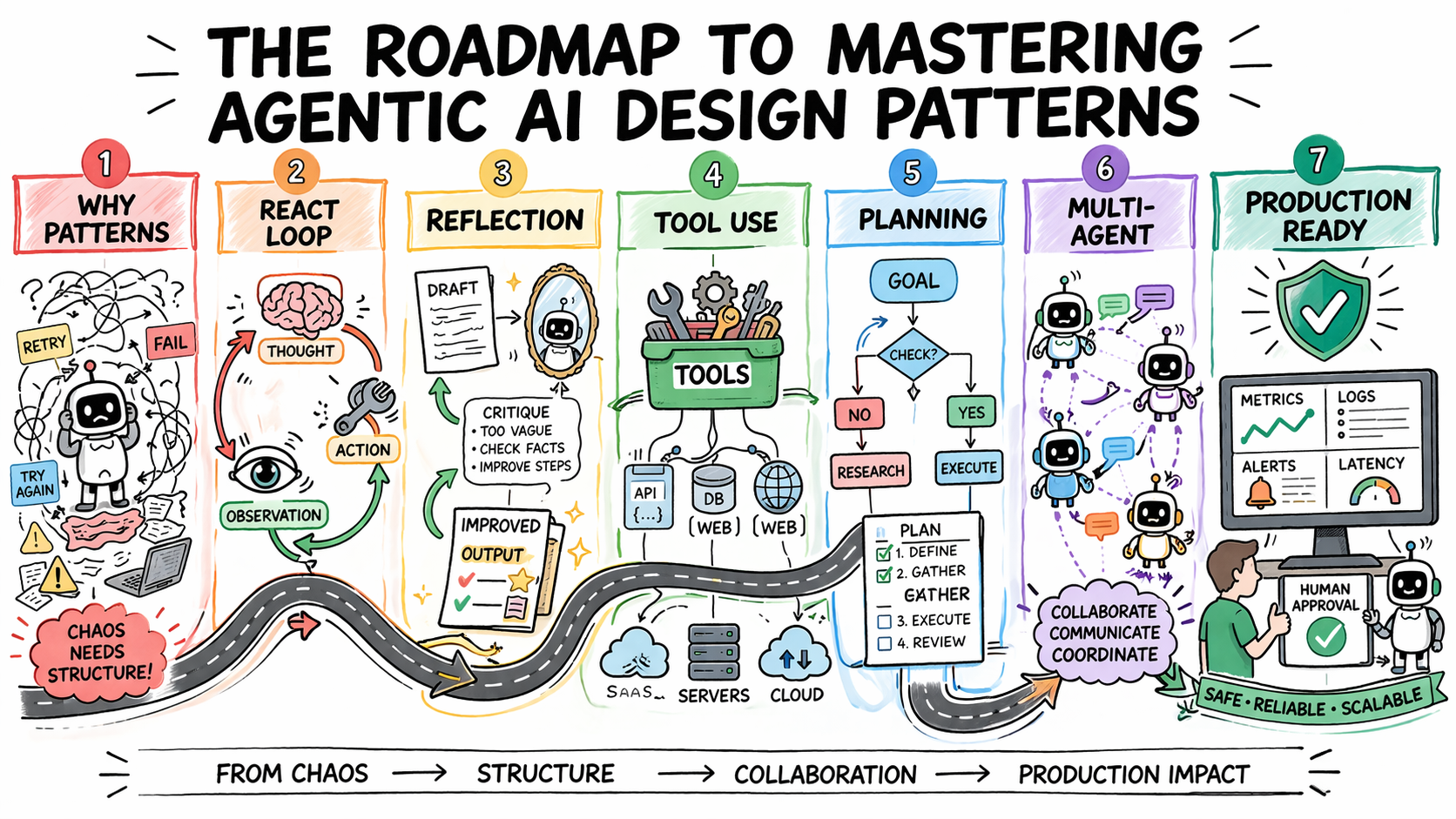

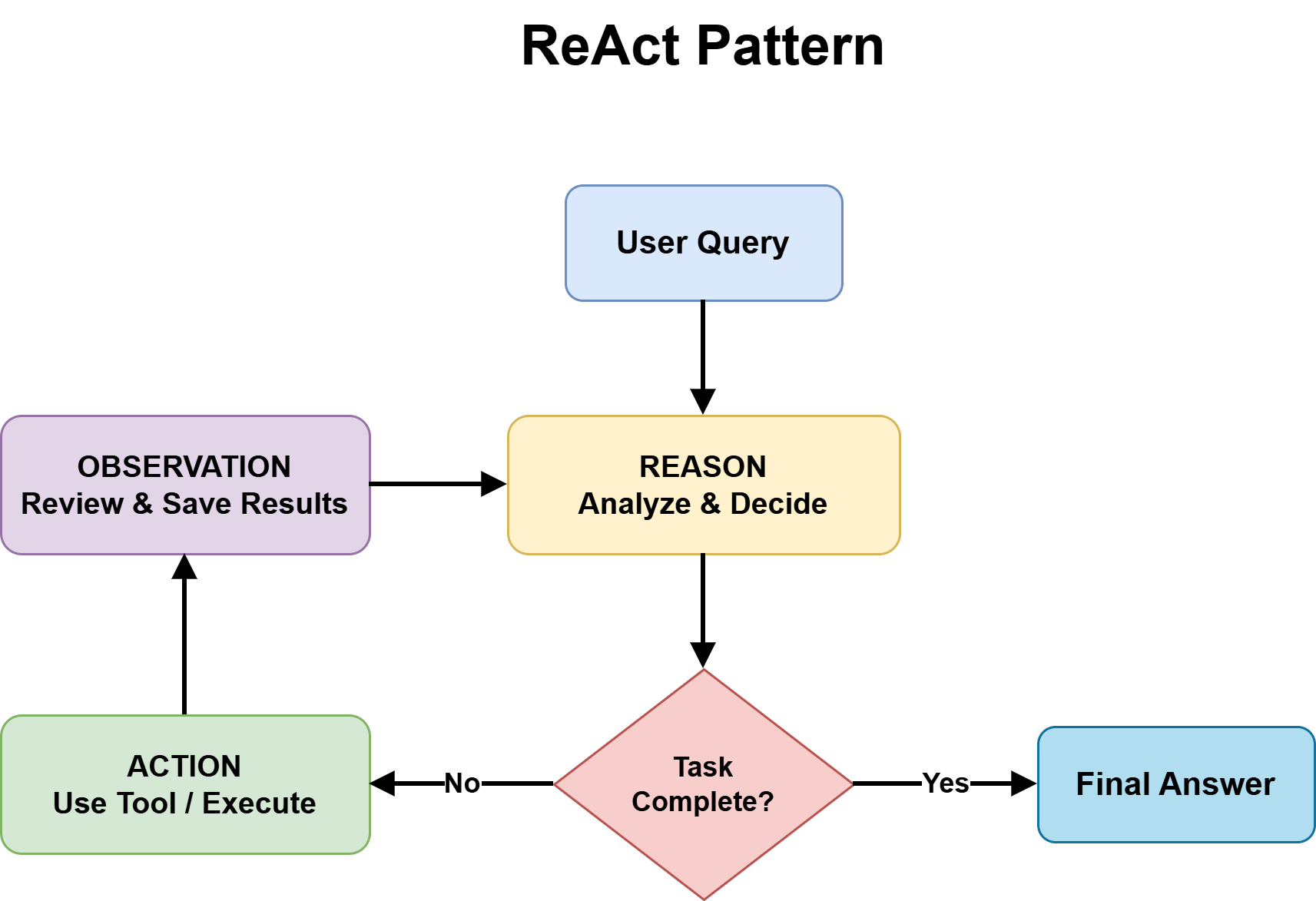

Foundational Pattern: The ReAct Framework

The ReAct pattern remains the industry standard for tasks where the solution path is not predetermined. It operates on a continuous feedback loop consisting of three distinct phases: Thought, Action, and Observation.

In the Thought phase, the agent articulates its reasoning based on the current state. In the Action phase, it selects and executes a tool, such as a database query or a web search. Finally, in the Observation phase, it consumes the output of that action and updates its internal state.

While powerful, ReAct carries significant trade-offs. Each iteration requires an additional model call, which increases both latency and token costs. Furthermore, without an explicit "iteration cap," agents can occasionally fall into "hallucination loops," where they repeatedly attempt the same failing action. Data from production environments suggest that for 80% of standard business queries, a simple fixed workflow is more cost-effective than a full ReAct loop, highlighting the importance of knowing when not to use complex patterns.
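The Thought, Action, and Observation cycle and the iteration cap can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: `Step`, `react_loop`, `scripted_policy`, and the `lookup_order` tool are hypothetical names, and the policy is a scripted stand-in for what would be a model call in a real system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Step:
    thought: str
    action: Optional[str] = None   # name of the tool to call
    action_input: str = ""
    answer: Optional[str] = None   # set once the agent is finished


def react_loop(
    policy: Callable[[List[str]], Step],
    tools: Dict[str, Callable[[str], str]],
    question: str,
    max_iterations: int = 5,
) -> Tuple[Optional[str], List[str]]:
    """Run Thought -> Action -> Observation until an answer or the cap."""
    transcript = [f"Question: {question}"]
    for _ in range(max_iterations):
        step = policy(transcript)                      # Thought
        transcript.append(f"Thought: {step.thought}")
        if step.answer is not None:
            return step.answer, transcript
        tool = tools.get(step.action)                  # Action
        if tool is None:
            observation = f"error: unknown tool {step.action!r}"
        else:
            observation = tool(step.action_input)
        transcript.append(f"Action: {step.action}({step.action_input})")
        transcript.append(f"Observation: {observation}")  # Observation
    return None, transcript  # cap reached: stop instead of looping forever


def scripted_policy(transcript: List[str]) -> Step:
    """Stand-in for a model call (assumption): act once, then answer."""
    if not any(line.startswith("Observation:") for line in transcript):
        return Step("I need the order status.", "lookup_order", "A-17")
    last_observation = transcript[-1].split(": ", 1)[1]
    return Step("I have what I need.", answer=last_observation)


tools = {"lookup_order": lambda order_id: f"order {order_id} shipped"}
answer, trace = react_loop(scripted_policy, tools, "Where is order A-17?")
```

Returning `None` when the cap is reached lets the caller decide whether to retry, fall back to a fixed workflow, or escalate, rather than paying for further model calls on the same failing action.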

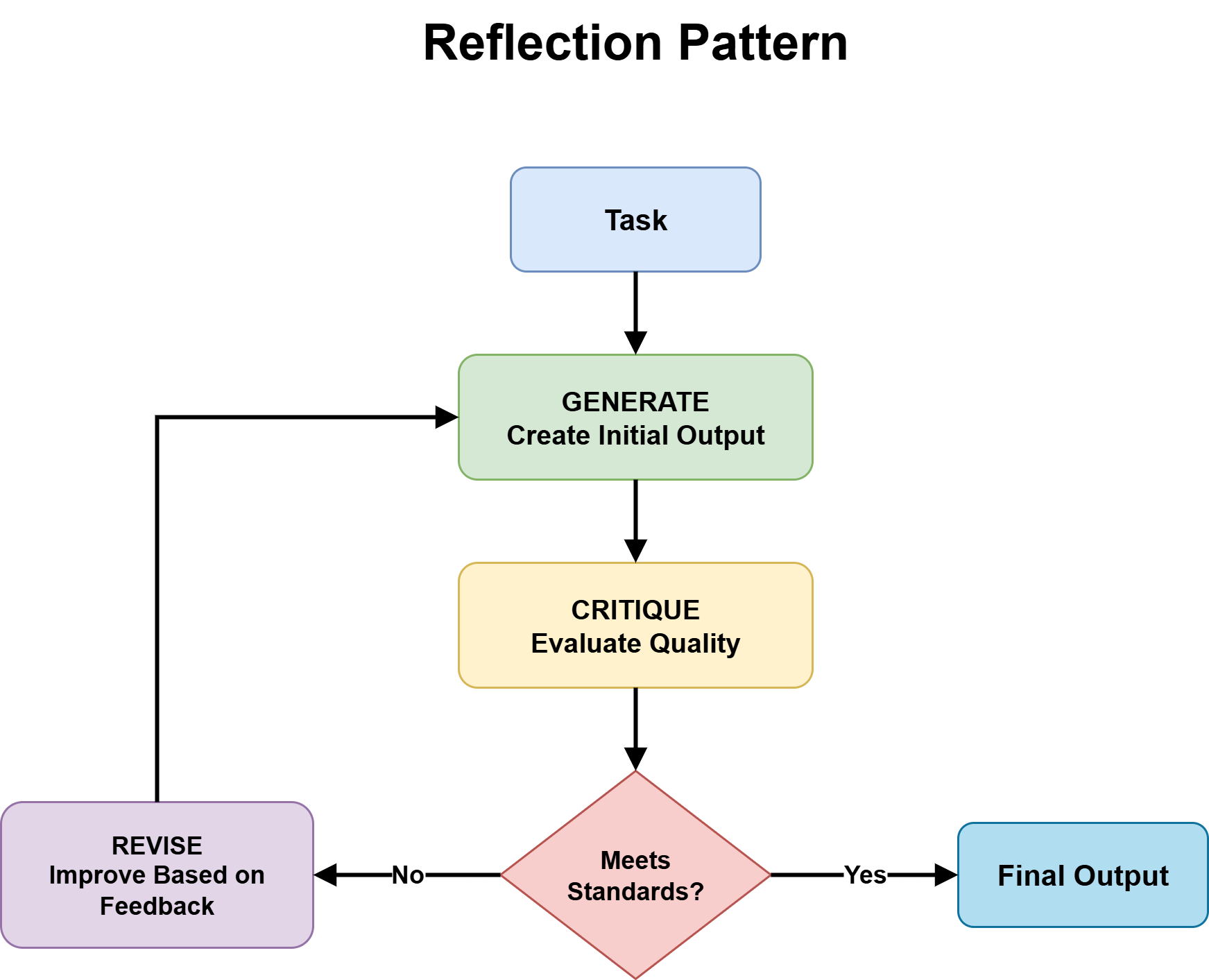

Enhancing Reliability through Reflection and Self-Correction

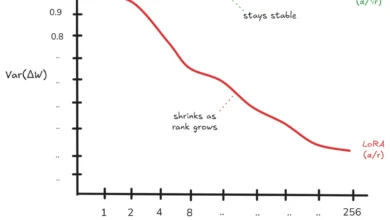

As accuracy requirements increase, particularly in regulated industries like finance or healthcare, the Reflection pattern has become essential. This pattern utilizes a "generation-critique-refinement" cycle. An agent produces an initial draft, which is then scrutinized by a "critic" agent—ideally a more capable model or one with a specialized system prompt.

This pattern is especially effective when paired with deterministic verification tools. For example, a code-generation agent might produce a Python script, which is then passed to a linter or a unit test suite. The feedback from these tools provides grounded, non-hallucinated data that the agent uses to refine its output. According to recent benchmarks, implementing a single round of reflection can improve code correctness scores by nearly 20% compared to single-pass generation.
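A rough sketch of the generation, critique, and refinement cycle with a deterministic critic might look like the following. It assumes Python's built-in `ast.parse` as a stand-in for a linter or test suite; `reflect`, `syntax_critic`, and `fake_generate` are hypothetical names, and `fake_generate` simulates a model that fixes its draft once it receives feedback.

```python
import ast
from typing import Callable, Optional, Tuple


def syntax_critic(source: str) -> Optional[str]:
    """Deterministic critic: return an error message, or None if the code parses."""
    try:
        ast.parse(source)
    except SyntaxError as exc:
        return f"SyntaxError on line {exc.lineno}: {exc.msg}"
    return None


def reflect(
    generate: Callable[[str, Optional[str]], str],
    task: str,
    critic: Callable[[str], Optional[str]] = syntax_critic,
    max_refinements: int = 1,
) -> Tuple[str, Optional[str]]:
    """Generate a draft, critique it, and refine up to max_refinements times."""
    draft = generate(task, None)
    feedback = critic(draft)
    for _ in range(max_refinements):
        if feedback is None:
            break                      # draft passed the check; stop early
        draft = generate(task, feedback)
        feedback = critic(draft)
    return draft, feedback             # feedback is None when the draft passed


def fake_generate(task: str, feedback: Optional[str]) -> str:
    """Stand-in for a model call (assumption): the first draft lacks a colon."""
    if feedback is None:
        return "def add(a, b)\n    return a + b\n"
    return "def add(a, b):\n    return a + b\n"


final_draft, remaining_feedback = reflect(fake_generate, "write an add function")
```

Because the critic here is a parser rather than another model, its feedback cannot be hallucinated. A model-based critic could be passed in through the same `critic` parameter when no deterministic check exists.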

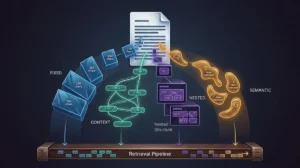

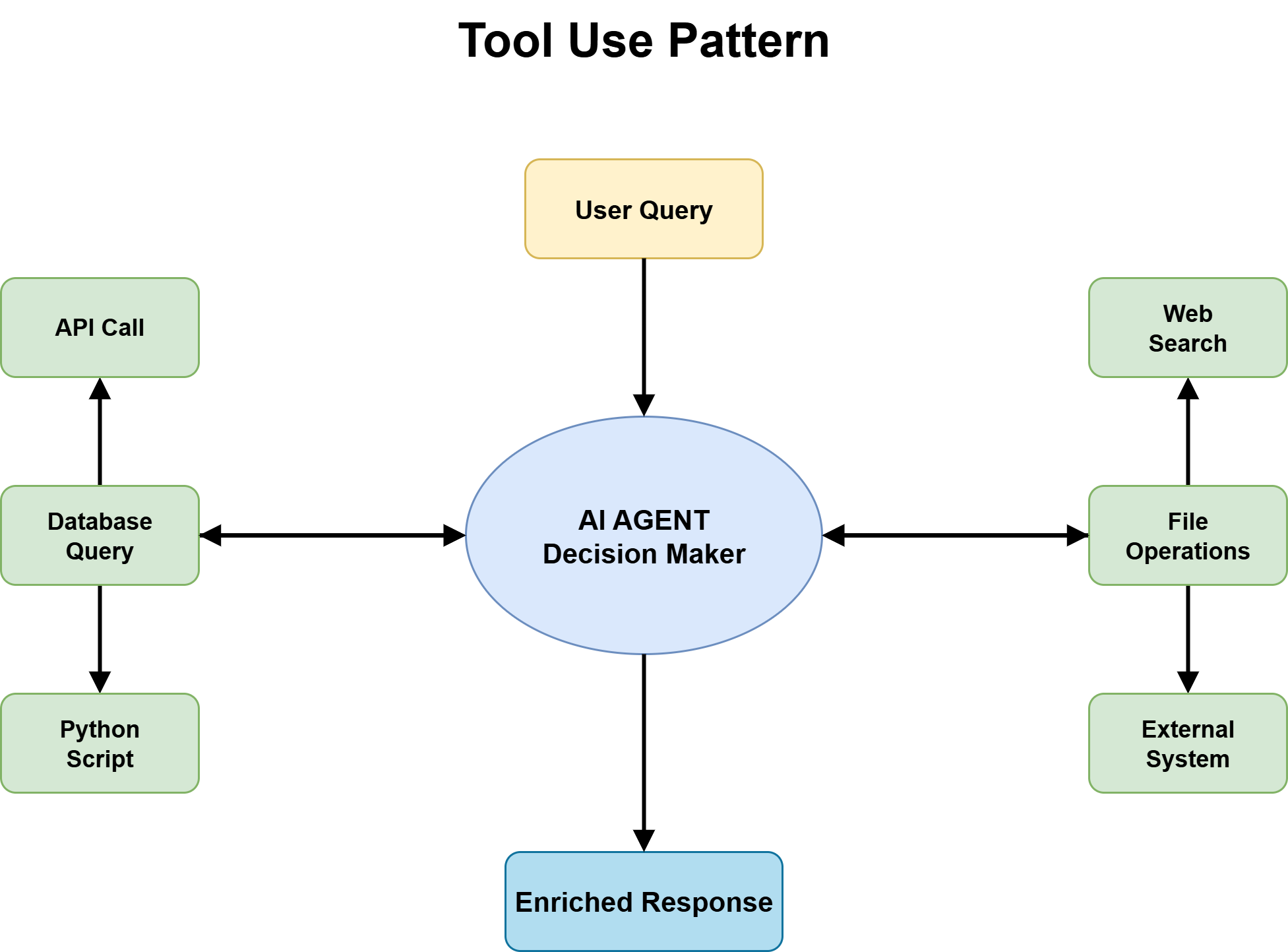

Tool Use as a First-Class Architectural Decision

The true utility of an agent lies in its ability to interact with the real world through tool use. However, connecting an LLM to an enterprise API or a production database introduces substantial security and reliability risks.

Modern design patterns treat tool use as a structured architectural layer. This involves:

- Strict Schemas: Tools must have clearly defined input and output parameters. Ambiguity in tool descriptions is a leading cause of agent failure.

- Graceful Degradation: When an external API is down or rate-limited, the agent must be programmed with "fallback" behaviors rather than simply crashing or hallucinating a response.

- Blast Radius Limits: For high-risk actions, such as moving funds or deleting data, design patterns now mandate "Human-in-the-Loop" (HITL) approval gates.
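The three practices in the list above can be combined into a single tool-calling layer. The sketch below is one possible arrangement under stated assumptions: `Tool`, `call_tool`, the status strings, and the example tools are all hypothetical, and the schema check is a simple type comparison rather than a full schema language.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional


@dataclass
class Tool:
    name: str
    params: Dict[str, type]                     # strict schema: parameter -> type
    run: Callable[..., Any]
    fallback: Optional[Callable[..., Any]] = None
    high_risk: bool = False


def call_tool(
    tool: Tool,
    args: Dict[str, Any],
    approve: Callable[[Tool, Dict[str, Any]], bool] = lambda tool, args: False,
) -> Dict[str, Any]:
    # 1. Strict schema: reject missing, extra, or wrongly typed arguments.
    if set(args) != set(tool.params):
        return {"status": "rejected",
                "reason": f"expected parameters {sorted(tool.params)}"}
    for key, expected in tool.params.items():
        if not isinstance(args[key], expected):
            return {"status": "rejected",
                    "reason": f"{key} must be {expected.__name__}"}
    # 2. Approval gate: high-risk tools run only after a human approves.
    if tool.high_risk and not approve(tool, args):
        return {"status": "needs_approval",
                "reason": "high-risk tool requires human approval"}
    # 3. Graceful degradation: use the fallback when the backend is unreachable.
    try:
        return {"status": "ok", "result": tool.run(**args)}
    except (ConnectionError, TimeoutError) as exc:
        if tool.fallback is not None:
            return {"status": "degraded", "result": tool.fallback(**args)}
        return {"status": "unavailable", "reason": str(exc)}


def flaky_rates(currency: str) -> float:
    raise ConnectionError("rate service is down")   # simulated outage


get_rate = Tool("get_rate", {"currency": str}, flaky_rates,
                fallback=lambda currency: "live rate unavailable, try again later")
transfer = Tool("transfer_funds", {"account": str, "amount": int},
                lambda account, amount: f"sent {amount} to {account}",
                high_risk=True)

degraded = call_tool(get_rate, {"currency": "EUR"})
blocked = call_tool(transfer, {"account": "ACME", "amount": 10})
```

Every outcome is returned as structured data rather than raised as an exception, so the agent receives an explicit status it can reason about instead of inventing a result.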

Strategic Planning and Complex Task Management

For tasks spanning dozens of steps, the ad-hoc reasoning of ReAct is often insufficient. This is where the Planning pattern is applied. Before taking any action, the agent decomposes a high-level goal into a structured plan or a Directed Acyclic Graph (DAG) of subtasks.

There are two primary approaches to planning:

- Static Planning: The agent generates a complete plan upfront and executes it sequentially. This is ideal for well-understood workflows like data migration.

- Dynamic Planning: The agent generates an initial plan but re-evaluates and modifies it after every step. This is necessary for volatile environments like real-time market research.

Planning reduces the "cognitive load" on the model during execution, as the agent only needs to focus on one subtask at a time rather than keeping the entire complex goal in its context window.
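As an illustration of static planning, a plan can be represented as a DAG and executed in dependency order with the standard library's `graphlib.TopologicalSorter`. The subtask names and the `run_subtask` callback are hypothetical; in a real system the plan would come from a model and each subtask would trigger its own model or tool call.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Set

# Hypothetical data-migration plan: each subtask maps to the subtasks it depends on.
plan: Dict[str, Set[str]] = {
    "export_source_tables": set(),
    "transform_schema": {"export_source_tables"},
    "load_target_db": {"transform_schema"},
    "verify_row_counts": {"load_target_db", "export_source_tables"},
}


def execute_static(
    plan: Dict[str, Set[str]],
    run_subtask: Callable[[str, Dict[str, str]], str],
) -> Dict[str, str]:
    """Execute every subtask once, in an order that respects the DAG."""
    results: Dict[str, str] = {}
    for subtask in TopologicalSorter(plan).static_order():
        # Each subtask sees only the outputs of its direct dependencies,
        # not the whole goal.
        inputs = {dep: results[dep] for dep in plan[subtask]}
        results[subtask] = run_subtask(subtask, inputs)
    return results


def demo_runner(name: str, inputs: Dict[str, str]) -> str:
    """Stand-in for the real work (assumption): report what was consumed."""
    return f"{name} done (used {len(inputs)} inputs)"


results = execute_static(plan, demo_runner)
```

A dynamic variant would regenerate or amend `plan` after each subtask completes instead of fixing the order upfront. `TopologicalSorter` raises `CycleError` if the plan contains a cycle, which is a cheap check to run on a model-generated plan before executing it.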

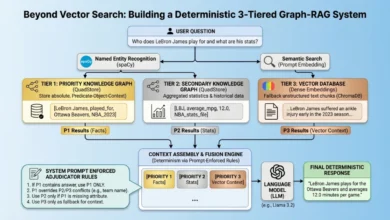

The Multi-Agent Frontier: Orchestration and Collaboration

In enterprise settings, a single "generalist" agent often becomes unwieldy. The Multi-Agent pattern solves this by distributing work across specialized "workers." For instance, a software development workflow might include a "Product Manager Agent" to define requirements, a "Coder Agent" to write logic, and a "QA Agent" to find bugs.

A central "Coordinator" or "Router" manages the hand-offs between these agents. This modularity allows developers to swap out or upgrade individual agents without rebuilding the entire system. However, multi-agent systems introduce "coordination overhead." If not managed correctly, agents can pass incorrect information back and forth, leading to "cascading failures."
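One way to limit cascading failures is for the coordinator to validate every hand-off before passing it to the next agent. The sketch below assumes a simple sequential pipeline; `coordinate`, the three stub agents, and their validators are hypothetical, and each agent would wrap a model call in practice.

```python
from typing import Callable, List, Optional, Tuple

Agent = Callable[[str], str]
Validator = Callable[[str], bool]


def coordinate(
    task: str,
    pipeline: List[Tuple[str, Agent, Validator]],
) -> Tuple[Optional[str], List[str]]:
    """Run agents in order, checking each hand-off before it moves on."""
    artifact = task
    log: List[str] = []
    for name, agent, is_valid in pipeline:
        output = agent(artifact)
        if not is_valid(output):
            log.append(f"{name}: rejected hand-off")
            return None, log           # stop before bad output cascades
        log.append(f"{name}: ok")
        artifact = output
    return artifact, log


# Stub agents (assumptions) standing in for model-backed workers.
def product_manager(task: str) -> str:
    return f"REQUIREMENT: {task}"


def coder(requirement: str) -> str:
    return "def greet(name):\n    return 'Hello, ' + name\n"


def qa(code: str) -> str:
    return "PASS" if "def " in code else "FAIL"


pipeline = [
    ("product_manager", product_manager, lambda out: out.startswith("REQUIREMENT:")),
    ("coder", coder, lambda out: "def " in out),
    ("qa", qa, lambda out: out in {"PASS", "FAIL"}),
]
verdict, handoff_log = coordinate("greet a user by name", pipeline)
```

Because each entry in `pipeline` is independent, an individual agent or its validator can be swapped without touching the others, which is the modularity the pattern is meant to provide.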

Safety, Evaluation, and the Human-in-the-Loop Paradigm

The final step in the roadmap is ensuring production safety. Organizations are increasingly adopting frameworks like the OWASP Top 10 for LLM Applications to guard against prompt injection and data exfiltration.

Reliability is managed through "Traceability." Modern orchestration frameworks like LangGraph, AutoGen, and CrewAI allow developers to record every step of an agent’s reasoning process. If a failure occurs, engineers can "replay" the trace to identify exactly where the logic deviated.
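The underlying idea can be shown without any particular framework. The following sketch does not use the LangGraph, AutoGen, or CrewAI APIs; `Trace`, `first_failure`, and the recorded events are hypothetical, and the trace is stored as JSON lines so it can be reloaded and inspected after the run.

```python
import json
from typing import Any, Callable, Dict, List, Optional

Event = Dict[str, Any]


class Trace:
    """Append-only record of an agent run."""

    def __init__(self) -> None:
        self.events: List[Event] = []

    def record(self, kind: str, payload: Any) -> None:
        self.events.append({"step": len(self.events), "kind": kind, "payload": payload})

    def dumps(self) -> str:
        # One JSON object per line, suitable for writing to a log file.
        return "\n".join(json.dumps(event) for event in self.events)


def first_failure(events: List[Event], is_ok: Callable[[Event], bool]) -> Optional[Event]:
    """Replay events in order and return the first one that fails the check."""
    for event in events:
        if not is_ok(event):
            return event
    return None


trace = Trace()
trace.record("thought", "look up invoice 42")
trace.record("action", {"tool": "get_invoice", "args": {"id": 42}})
trace.record("observation", {"error": "404 not found"})
trace.record("thought", "invoice 42 totals 100")   # not supported by the observation

# Later, an engineer reloads the stored trace and replays it.
replayed = [json.loads(line) for line in trace.dumps().splitlines()]
culprit = first_failure(
    replayed,
    lambda e: e["kind"] != "observation" or "error" not in e["payload"],
)
```

Here the replay points at step 2, the failed tool call, which shows that the final thought was produced after an error rather than from real invoice data.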

Furthermore, industry leaders emphasize that human oversight should be treated as a design pattern rather than a temporary fix. "Human-in-the-loop" workflows ensure that agents handle routine, low-risk tasks autonomously while escalating nuanced or high-stakes decisions to human experts. This hybrid approach maximizes efficiency while maintaining accountability.

Broader Implications for the Tech Industry

The shift toward agentic design patterns represents a maturation of the AI industry. As these patterns become standardized, we can expect a significant increase in the deployment of autonomous systems across the global economy.

Market analysts project that the market for AI agents will grow at a compound annual growth rate (CAGR) of over 30% through 2030. The organizations that succeed will be those that view AI not as a magical oracle, but as a complex software system that requires the same architectural rigor, testing, and patterns as any other enterprise technology. By following this roadmap—starting with simple reasoning loops and scaling to sophisticated multi-agent systems—developers can build the next generation of resilient, intelligent infrastructure.