Optimizing Data Science Workflows Through AI-Driven Skills and the Model Context Protocol

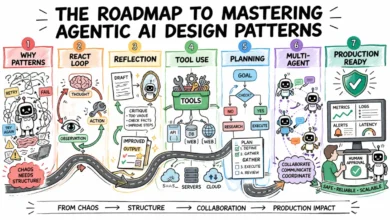

The landscape of artificial intelligence in data science is shifting from simple code generation toward comprehensive, automated workflows enabled by modular instruction sets known as skills and the integration of the Model Context Protocol. As Large Language Models (LLMs) like Claude and GPT-4 become more deeply integrated into professional environments, the methodology of interacting with these models is evolving. Rather than relying on monolithic prompts or manual copy-pasting of code, practitioners are increasingly adopting "skills"—reusable, structured packages of instructions that allow AI agents to handle recurring tasks with higher reliability and consistency. This shift represents a move toward "agentic" data science, where the AI is not just a chatbot but a specialized tool capable of executing complex, multi-step processes with minimal human intervention.

The Architecture of AI Skills and Context Management

A skill, in the context of modern AI development environments such as Claude Code or Codex, is defined as a reusable package of instructions and optional supporting files designed to standardize recurring workflows. The technical foundation of a skill is the SKILL.md file, which contains essential metadata, including the skill’s name and description, alongside detailed operational instructions. These packages are frequently bundled with auxiliary scripts, templates, and reference examples to ensure that the AI maintains accuracy across different sessions.
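As a rough illustration of that layout, the sketch below assumes the metadata sits in a front matter block delimited by `---` lines at the top of SKILL.md, with the instructions following in Markdown. The example file contents, the `Skill` dataclass, and the `parse_skill` helper are hypothetical and exist only for illustration; the parser handles flat `key: value` pairs and is not a full YAML reader.

```python
from dataclasses import dataclass

# Hypothetical SKILL.md contents; the field values are illustrative only.
EXAMPLE_SKILL_MD = """\
---
name: storytelling-viz
description: Turn a dataset into one insight-driven chart with a cited data source.
---
# Instructions
1. Explore the data and pick one story.
2. Recommend a chart type and explain why.
"""


@dataclass
class Skill:
    name: str
    description: str
    instructions: str


def parse_skill(text: str) -> Skill:
    """Split front matter from the body; handles flat `key: value` pairs only."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' front matter line")
    try:
        end = lines.index("---", 1)  # position of the closing delimiter
    except ValueError:
        raise ValueError("front matter is not closed with '---'") from None
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta["name"], meta["description"], body)


skill = parse_skill(EXAMPLE_SKILL_MD)
print(skill.name)
```

Keeping the name and description separate from the instruction body is what makes the lightweight, metadata-first loading described in the next paragraph possible.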

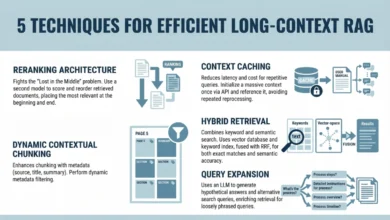

The primary advantage of using skills over traditional long-form prompting lies in context management. In standard LLM interactions, providing a massive amount of background information—such as corporate style guides, specific library requirements, or complex logic—consumes the model’s "context window." This can lead to increased latency, higher costs, and a phenomenon known as "lost in the middle," where the model fails to recall instructions placed in the center of a long prompt. Skills mitigate this by allowing the AI to load only lightweight metadata initially. The model then "decides" to read the full instruction set and resources only when it identifies the skill as relevant to the user’s current task. This on-demand resource loading optimizes performance and ensures the AI remains focused on the specific requirements of the workflow at hand.
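The on-demand loading pattern can be sketched in a few lines. This is a simplified model, not how any particular agent implements it: the `SkillRegistry` class, the `*/SKILL.md` directory layout, and the keyword-overlap `select` method are all assumptions made for illustration. In a real agent the model itself judges relevance from the skill descriptions; the keyword match here is only a stand-in for that decision.

```python
import tempfile
from pathlib import Path


def read_metadata(path: Path) -> dict:
    """Read only the front matter lines, stopping at the closing '---'."""
    meta = {}
    with path.open(encoding="utf-8") as fh:
        if fh.readline().strip() != "---":
            return meta
        for line in fh:
            if line.strip() == "---":
                break
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return meta


def keywords(text: str) -> set:
    # Ignore very short words so "a" or "of" do not count as a match.
    return {w for w in text.lower().split() if len(w) > 3}


class SkillRegistry:
    def __init__(self, root: Path):
        # Startup cost: metadata only, one small dict per skill.
        self.index = {p: read_metadata(p) for p in sorted(root.glob("*/SKILL.md"))}
        self.full_reads = 0

    def select(self, task: str):
        """Naive keyword overlap; a stand-in for the model's own relevance judgment."""
        wanted = keywords(task)
        for path, meta in self.index.items():
            if wanted & keywords(meta.get("description", "")):
                return path
        return None

    def load(self, path: Path) -> str:
        # The full instruction text is read only once a skill is selected.
        self.full_reads += 1
        return path.read_text(encoding="utf-8")


with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "storytelling-viz"
    skill_dir.mkdir()
    (skill_dir / "SKILL.md").write_text(
        "---\nname: storytelling-viz\ndescription: build a chart from data\n---\n"
        "Full instructions go here.\n",
        encoding="utf-8",
    )
    registry = SkillRegistry(Path(tmp))
    reads_before = registry.full_reads
    chosen = registry.select("make a chart of my sleep data")
    body = registry.load(chosen) if chosen else ""
    reads_after = registry.full_reads
```

The point of the design is that the cost of having many skills installed stays proportional to their short descriptions, while the long instruction text is paid for only by the one skill that is actually used.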

Case Study: Automating the Weekly Visualization Workflow

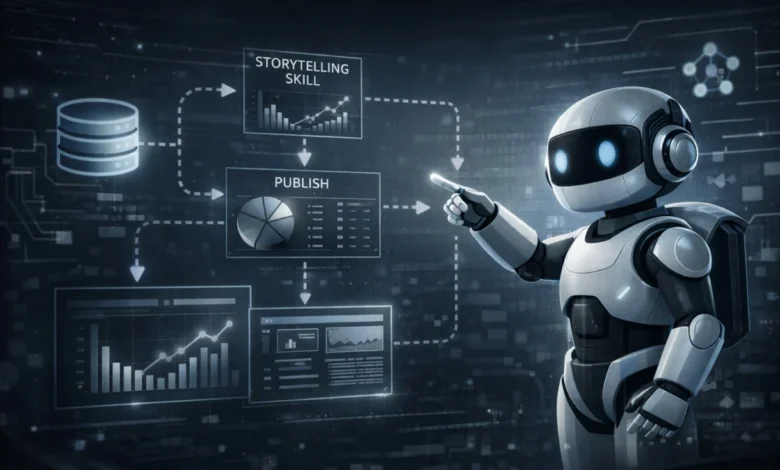

To understand the practical application of these technologies, one may examine the long-term project of a data scientist who has produced one data visualization every week since 2018. For over six years, this practitioner followed a manual routine that typically required one hour per week to complete. The workflow traditionally consisted of four distinct phases: searching for a compelling dataset, performing exploratory data analysis (EDA) to identify a "story," generating the visualization using tools like Tableau or Python, and finally publishing the results with accompanying insights and data citations.

The introduction of AI skills has allowed for the automation of approximately 80% of this process. By creating a specific "storytelling-viz" skill, the practitioner reduced the time required for steps two through four (EDA, visualization, and publishing) from nearly an hour to less than ten minutes. This was achieved by pairing the skill with the Model Context Protocol (MCP), an open standard for connecting LLM applications to external tools and data sources. In a recent test using an Apple Health dataset stored in Google BigQuery, the AI queried the database, identified an insight about the correlation between annual exercise time and calories burned, and recommended a specific chart type with a detailed rationale for its selection.

Chronology of the Transformation

The transition from a manual to an AI-augmented workflow followed a structured timeline of development:

- 2018 – 2024 (The Manual Era): The practitioner focused on manual tool mastery, primarily using Tableau to sharpen data intuition and storytelling capabilities.

- Early 2025 (The Planning Phase): Recognizing the repetitive nature of the task, the practitioner began defining the requirements for an AI-driven automation strategy, focusing on a tech stack that included Claude Code and Codex.

- The Bootstrapping Phase: Using AI to "create a skill to create a skill," the initial version of the SKILL.md file was generated. This version provided basic visualization capabilities but lacked the nuances of professional design.

- The Iterative Refinement Phase: Over several weeks, the skill was tested against more than 15 diverse datasets. This phase involved feeding the AI eight years of personal visualization history and external design principles to refine the output style.

- Integration of MCP: By connecting the visualization skill to BigQuery via MCP, the workflow became fully end-to-end, moving from raw database queries to polished, insight-driven visualizations in a single command.

Technical Implementation and Iterative Improvement

Building a robust AI skill is not a one-time prompt engineering task but an iterative engineering process. The development of the "storytelling-viz" skill illustrates the "10/90 rule" of AI implementation: while the initial 10% of the work—creating a functional script—can be done in minutes, the remaining 90% of the work involves refining the tool to meet professional standards of consistency and quality.

Strategies for Skill Enhancement

To bridge the gap between a generic AI output and a high-quality data product, several strategies were employed:

- Incorporating Domain Knowledge: The practitioner shared historical visualization screenshots and specific style guidance with the AI. The model then summarized these common principles (e.g., preferred color palettes, font choices, and layout structures) and updated the skill instructions to reflect these preferences.

- External Resource Integration: The AI was tasked with researching established data visualization best practices from renowned sources and analyzing similar public skills available on platforms like skills.sh. This added a layer of industry-standard robustness that the practitioner had not explicitly documented.

- Test-Driven Refinement: By observing how the skill handled different data types—such as time-series data versus categorical data—the practitioner identified specific failure points. This led to explicit instruction updates, such as mandating the inclusion of data sources at the bottom of charts, using specific clean fonts (e.g., "Arial" or "Helvetica"), and ensuring that every visualization led with an "insight-driven" headline rather than a generic descriptive title.
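Rules like the ones in the last bullet can also be checked mechanically. The sketch below is one hypothetical way to do that, not part of the practitioner's skill: the chart spec dictionary, its keys (`title`, `font`, `source_note`), and the `check_chart` function are invented for illustration, and the regular expression is a crude assumed heuristic for spotting generic descriptive titles.

```python
import re

ALLOWED_FONTS = {"Arial", "Helvetica"}  # the two example fonts named in the text
# Crude, assumed patterns for "generic descriptive" titles.
GENERIC_TITLE = re.compile(r"\b(by|vs\.?|versus|over time)\b", re.IGNORECASE)


def check_chart(spec: dict) -> list[str]:
    """Return a list of rule violations for a hypothetical chart spec."""
    problems = []
    if not spec.get("source_note", "").strip():
        problems.append("missing data source note at the bottom of the chart")
    if spec.get("font") not in ALLOWED_FONTS:
        problems.append(f"font {spec.get('font')!r} is not in {sorted(ALLOWED_FONTS)}")
    title = spec.get("title", "")
    if not title or GENERIC_TITLE.search(title):
        problems.append("title reads as descriptive; lead with the insight instead")
    return problems


# Both specs are made-up examples, not output from the actual skill.
draft = {"title": "Calories Burned by Year", "font": "Comic Sans MS", "source_note": ""}
revised = {
    "title": "Weekend trips last twice as long as weekday trips",
    "font": "Arial",
    "source_note": "Source: hypothetical dataset",
}
print(check_chart(draft))    # three violations
print(check_chart(revised))  # no violations
```

A check of this kind could run over the output for each test dataset, so that a regression in one rule shows up without manually inspecting every chart.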

Performance Data and Comparative Analysis

The impact of shifting to a skill-based workflow is quantifiable. In traditional manual workflows, the "time to insight" is often bottlenecked by the technical overhead of data cleaning and chart formatting.

| Metric | Manual Workflow (Pre-AI) | AI-Skill Augmented Workflow |
|---|---|---|
| Total Time per Week | ~60 Minutes | <10 Minutes |
| Data Querying | Manual SQL/Export | Automated via MCP |
| Insight Identification | Manual EDA | AI-Driven Storytelling |
| Formatting/Styling | Manual Adjustment | Standardized via SKILL.md |
| Consistency | Variable | High (Template-based) |

The data indicates a significant reduction in labor-intensive tasks, allowing the human practitioner to focus on the "discovery" aspect of data science rather than the mechanical aspects of visualization.
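Taking the table's time figures at face value, the saving can be worked out directly. The sketch below treats "~60 Minutes" as 60 and uses 10 as the upper bound of "<10 Minutes", so the results are lower bounds; the 52-week year is an assumption that the weekly cadence never lapses.

```python
MANUAL_MIN = 60      # "~60 Minutes" from the table
SKILL_MIN = 10       # upper bound of "<10 Minutes"
WEEKS_PER_YEAR = 52  # assumes one visualization every week

saved_per_week = MANUAL_MIN - SKILL_MIN
reduction = saved_per_week / MANUAL_MIN
hours_per_year = saved_per_week * WEEKS_PER_YEAR / 60

print(f"at least {reduction:.0%} less time, about {hours_per_year:.1f} hours a year")
# prints: at least 83% less time, about 43.3 hours a year
```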

Industry Implications for Data Scientists

The emergence of skills and MCP signals a broader trend in the data science industry: the shift from "full-stack" manual coding to "orchestration." For data scientists, the value proposition is moving away from the ability to write boilerplate code and toward the ability to design and manage the processes that generate that code.

When to Implement Skills

Industry analysts suggest that skills are most effective in workflows that meet four criteria:

- Recurrence: The task occurs on a regular schedule (daily, weekly, or monthly).

- Semi-Structured Nature: The process follows a consistent logic but requires different inputs each time.

- Domain Dependence: The output must adhere to specific organizational or professional standards.

- Complexity: The task is too multifaceted to be handled by a single, simple prompt.

Furthermore, the modularity of skills allows for a "microservices" approach to data science. By splitting a workflow into independent components—such as one skill for data cleaning, one for visualization, and one for automated reporting—teams can build a library of interchangeable assets that can be reused across different departments and projects.
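The composition idea can be sketched with plain functions. Everything here is hypothetical: each function stands in for an independent skill, the shared `state` dictionary is an assumed interface between them, and the rows are toy values. Real skills are instruction packages run by an agent, not Python callables, so this shows only the shape of the design.

```python
from typing import Callable

Step = Callable[[dict], dict]


def clean(state: dict) -> dict:
    # Drop rows with missing values.
    rows = [r for r in state["rows"] if None not in r.values()]
    return {**state, "rows": rows}


def visualize(state: dict) -> dict:
    # Stand-in for a visualization skill: record a chart request only.
    return {**state, "chart": {"type": "bar", "n_rows": len(state["rows"])}}


def report(state: dict) -> dict:
    chart = state["chart"]
    return {**state, "summary": f"{chart['type']} chart built from {chart['n_rows']} rows"}


def run(steps: list[Step], state: dict) -> dict:
    """Apply each step in order, passing the updated state along."""
    for step in steps:
        state = step(state)
    return state


rows = [{"x": 1, "y": 2}, {"x": 2, "y": None}, {"x": 3, "y": 5}]  # toy rows
result = run([clean, visualize, report], {"rows": rows})
print(result["summary"])  # bar chart built from 2 rows
```

Because each step depends only on the shared state, a team could swap in a different cleaning or reporting component without touching the others, which is the reuse benefit the paragraph describes.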

Conclusion and Future Outlook

The integration of MCP and modular skills represents a milestone in the maturity of AI tools for data professionals. While the technical capabilities of LLMs continue to expand, the focus is increasingly on how to wrap those capabilities in a way that is reliable, scalable, and tailored to specific user needs.

Despite the high level of automation now possible, the human element remains central to the process. As noted in the weekly visualization project, the goal of automation is not necessarily to replace the practitioner but to remove the friction of the "tool" so that the "process of discovery" can take precedence. In the era of AI, data intuition and the ability to ask the right questions remain the most critical skills a data scientist can possess. The automation of the "how" through AI skills simply provides more space for the "why" and the "what’s next." As these tools continue to evolve, the ability to build, refine, and share skills will likely become a core competency for data professionals worldwide.