Decoding the Invisible Architecture: Six Technical Pillars Defining Modern Large Language Model Optimization and Scalability

The rapid proliferation of Large Language Models (LLMs) has transformed global computing, shifting the focus from simple algorithmic processing to the deployment of massive, multi-billion parameter neural networks. While the public primarily interacts with these models through polished application programming interfaces (APIs) and chat interfaces, a deeper architectural evolution is occurring beneath the surface. For engineers and researchers, the transition from utilizing black-box models to building and fine-tuning custom architectures requires a granular understanding of non-obvious design choices. These decisions—ranging from positional embedding strategies to memory management during inference—directly determine the speed, cost, and functional capabilities of modern AI systems. Recent technical audits of the GPT-2 architecture, implemented from the ground up using frameworks like PyTorch, have highlighted six critical architectural pillars that separate legacy systems from the high-efficiency models currently dominating the industry.

The Evolution of Parameter Efficiency: From LoRA to Rank Stabilization

The history of fine-tuning large-scale models is a history of managing the "curse of dimensionality." Traditional fine-tuning required updating every weight in a model, a process that is computationally prohibitive for models with 70 billion or more parameters. The introduction of Low-Rank Adaptation (LoRA) in 2021 by Microsoft researchers marked a paradigm shift. By freezing the original weights (W) and only training two smaller, low-rank matrices (A and B), developers could reduce the number of trainable parameters by over 99%. In practical implementations, such as those modeled on GPT-2, this can mean training only 0.18% of the total weights while maintaining comparable performance.
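To make the parameter saving concrete, the sketch below counts trainable weights for a single adapted matrix. The function name `lora_param_counts` and the example sizes (a 768 x 768 projection at rank 8) are illustrative assumptions, not figures from the audit; the whole-model percentage depends on which layers receive adapters and at what rank.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts for one weight matrix.

    Full fine-tuning updates W (d_out x d_in). LoRA freezes W and trains
    B (d_out x rank) and A (rank x d_in) instead.
    """
    full = d_out * d_in
    lora = d_out * rank + rank * d_in
    return full, lora


# Hypothetical example: one 768 x 768 projection adapted at rank 8.
full, lora = lora_param_counts(768, 768, 8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

The per-matrix ratio is larger than the whole-model fraction, because embeddings and any layers without adapters contribute frozen weights but no trainable ones.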

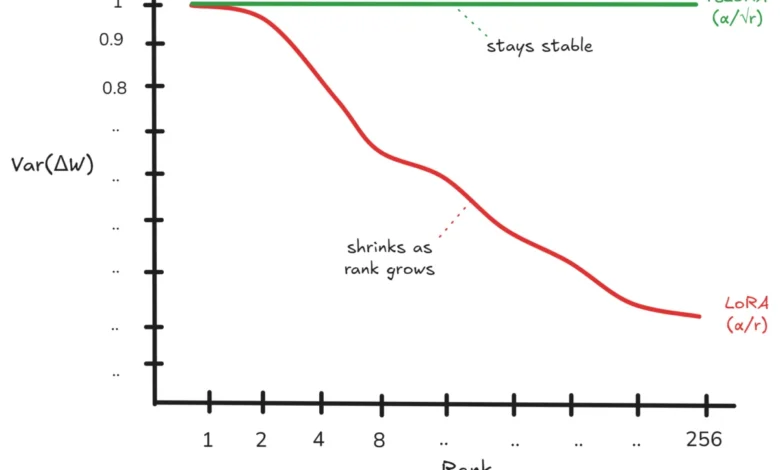

However, as the industry moved toward higher-rank adaptations to capture more complex task nuances, a fundamental flaw in the original LoRA formula was identified. The standard LoRA approach uses a scaling factor of $\alpha/r$ (alpha divided by rank) to integrate fine-tuned weights. Mathematical analysis and empirical testing have shown that as the rank ($r$) increases, the variance of the weight updates decreases. Specifically, the variance of the product of the low-rank matrices ($B \times A$) is proportional to $r$. When this is multiplied by the scaling factor squared ($(\alpha/r)^2$), the resulting variance of the weight update ($\Delta W$) becomes proportional to $1/r$.
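The scaling argument can be written out under a simplifying assumption that the text does not state: the entries of $A$ and $B$ are treated as independent, zero-mean random variables with variances $\sigma_A^2$ and $\sigma_B^2$. Real LoRA training does not satisfy this exactly (for instance, $B$ is commonly initialized to zero), so this is a sketch of the intuition rather than a proof.

$$
(BA)_{ij} = \sum_{k=1}^{r} B_{ik} A_{kj}
\quad\Rightarrow\quad
\mathrm{Var}\big[(BA)_{ij}\big] = r\,\sigma_A^2\,\sigma_B^2
$$

$$
\Delta W = \frac{\alpha}{r}\,BA
\quad\Rightarrow\quad
\mathrm{Var}\big[\Delta W_{ij}\big] = \frac{\alpha^2}{r^2}\cdot r\,\sigma_A^2\,\sigma_B^2 = \frac{\alpha^2\,\sigma_A^2\,\sigma_B^2}{r}
$$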

This inverse relationship means that as a developer attempts to make the model "smarter" by increasing the rank, the individual weight updates actually shrink, rendering the fine-tuning process less effective. To counter this, the industry is shifting toward Rank-Stabilized LoRA (RsLoRA). By replacing the scaling factor with $\alpha/\sqrt{r}$, the variance remains constant regardless of the rank. This allows for stable learning even at high ranks, a critical requirement for enterprise-grade models that must learn highly specialized domain knowledge without losing the foundational capabilities of the base model.
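The effect of the two scaling factors can be checked numerically. The following is a minimal simulation, assuming (for illustration only) that the entries of the two low-rank matrices are independent standard normal values; `update_variance` is a hypothetical helper, not part of any LoRA library.

```python
import random
import statistics


def update_variance(rank: int, alpha: float, rank_stabilized: bool,
                    samples: int = 4000, seed: int = 0) -> float:
    """Estimate the variance of one entry of the scaled update, scale * (B @ A).

    Assumption for illustration: entries of A and B are i.i.d. standard
    normal, so one entry of B @ A is a sum of `rank` products.
    """
    rng = random.Random(seed)
    scale = alpha / rank ** 0.5 if rank_stabilized else alpha / rank
    entries = [
        scale * sum(rng.gauss(0, 1) * rng.gauss(0, 1) for _ in range(rank))
        for _ in range(samples)
    ]
    return statistics.pvariance(entries)


for r in (4, 16, 64):
    lora = update_variance(r, alpha=1.0, rank_stabilized=False)
    rs = update_variance(r, alpha=1.0, rank_stabilized=True)
    print(f"r={r:3d}  alpha/r: {lora:.3f}  alpha/sqrt(r): {rs:.3f}")
```

Under these assumptions the first column should fall roughly as $1/r$ while the second stays near a constant, up to sampling noise.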

Positional Embeddings: The Shift to Rotary Dynamics

A significant challenge in Transformer architecture is the model’s inherent "position-agnostic" nature; without a specific mechanism, a Transformer views a sentence as a "bag of words" rather than a sequential string. The chronology of solving this problem reflects the rapid maturation of the field. The original 2017 "Attention Is All You Need" paper utilized Sinusoidal Positional Embeddings, a fixed mathematical formula that added positional data directly to token embeddings. While parameter-free, this method was rigid and often distorted the original token information.

GPT-2 and GPT-3 replaced this with Learned Positional Embeddings, where the model learns a vector for each position through backpropagation. While more flexible, this added a parameter load that grows with the context window (context size multiplied by the embedding dimension) and continued the problematic practice of direct addition to token embeddings.

The current industry standard has converged on Rotary Positional Embeddings (RoPE). Introduced in the RoFormer paper and popularized by models like Llama and Mistral, RoPE encodes position by rotating each token’s Query (Q) and Key (K) vectors, two dimensions at a time, through angles proportional to the token’s position. This method offers two distinct advantages: it requires zero additional parameters and it leaves the original token embeddings untouched. By utilizing rotation rather than addition, RoPE preserves the integrity of the token information. Because the dot product of two rotated vectors depends only on the difference between their rotation angles, attention scores also reflect the relative distance between words rather than their absolute positions. This architectural choice is widely credited with helping to enable the "long-context" revolution, allowing models to process hundreds of thousands of tokens without losing structural coherence.
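The rotation can be sketched in a few lines of plain Python. This version pairs adjacent dimensions and uses the frequency schedule from the RoFormer paper; some implementations pair the first half of the vector with the second half instead, so treat the layout here as one possible convention. The vectors `q` and `k` are made-up values.

```python
import math


def rope(vec: list[float], position: int, base: float = 10000.0) -> list[float]:
    """Rotate adjacent pairs of `vec` by position-dependent angles.

    Pair j (elements 2j and 2j+1) is rotated by position * base**(-2j/d).
    No learned parameters are involved.
    """
    d = len(vec)
    assert d % 2 == 0, "RoPE needs an even number of dimensions"
    out = []
    for i in range(0, d, 2):          # i = 2j, so the exponent is -i/d
        theta = position * base ** (-i / d)
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out += [x * cos_t - y * sin_t, x * sin_t + y * cos_t]
    return out


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.2]

# Same offset (3 positions apart) at two different absolute locations.
near = dot(rope(q, 5), rope(k, 2))
far = dot(rope(q, 105), rope(k, 102))
print(near, far)
```

The two printed scores agree up to floating-point error, which is the relative-position property in action: shifting both tokens by the same amount leaves their attention score unchanged.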

Scaling Laws and the Disappearance of Weight Tying

In the early era of Large Language Models, weight tying was a ubiquitous optimization technique. Used in GPT, GPT-2, and BERT, weight tying involves sharing the same weights between the input embedding layer and the output projection head. The logic was grounded in the symmetry of language: if an embedding maps a token to a vector, the output head should logically map that vector back to a token.

For a 124-million parameter model like the original GPT-2, weight tying saved approximately 38 million parameters (a vocabulary of 50,257 tokens multiplied by an embedding dimension of 768), roughly 30% of the model’s footprint. This was a vital optimization for hardware with limited VRAM. However, as models scaled into the billions of parameters, a saving of that size became insignificant: 38 million parameters is less than 0.5% of a model with 8 billion or more weights.
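The arithmetic behind these figures is short enough to check directly. The vocabulary size and embedding dimension below are the published values for the smallest GPT-2; the 8-billion-parameter total is a hypothetical round number chosen only to illustrate the scaling.

```python
VOCAB_SIZE = 50_257               # GPT-2 BPE vocabulary
EMBED_DIM = 768                   # smallest GPT-2 configuration
GPT2_SMALL_PARAMS = 124_000_000   # rounded total with tying, as quoted above

# The output head has the same shape as the embedding table, so tying
# removes one full copy of it.
tied_savings = VOCAB_SIZE * EMBED_DIM

print(f"saved by tying: {tied_savings:,}")
print(f"share of a 124M model: {tied_savings / GPT2_SMALL_PARAMS:.1%}")

# Hypothetical: the same absolute saving inside an 8-billion-parameter model.
print(f"share of an 8B model:  {tied_savings / 8_000_000_000:.2%}")
```

Larger models also use wider embeddings and often larger vocabularies, so their real untied head is bigger than 38 million parameters, but it still grows far more slowly than the Transformer blocks that make up most of the weight count.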

Contemporary models like Llama, Falcon, and Mistral have largely abandoned weight tying. Removing this constraint allows the output head to specialize independently from the input embeddings, leading to better performance in complex generation tasks. The industry has effectively traded a negligible amount of memory for increased representational power, signaling a shift in priority from raw parameter count to functional specialization.

Training Stability: The Pre-LayerNorm vs. Post-LayerNorm Tug-of-War

The placement of Layer Normalization (LN) within a Transformer block is a decision that balances training stability against final model performance. The original Transformer used "Post-LN," where normalization occurs after the residual connection addition. While Post-LN models can theoretically reach higher accuracy, they are notoriously difficult to train, often suffering from vanishing or exploding gradients that cause training runs to fail after weeks of expensive compute.

To address this, the industry transitioned to "Pre-LN" starting with GPT-2. In this configuration, normalization happens inside the residual block before the attention or feed-forward layers. This change ensures a much smoother gradient flow, allowing for the training of much deeper networks without the need for complex "warm-up" schedules. While some researchers argue that Pre-LN slightly limits the model’s ultimate representational capacity, the trade-off for training reliability is considered essential in a production environment where a single training failure can cost millions of dollars in wasted GPU time.
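The difference between the two orderings comes down to where the normalization sits relative to the residual addition. The sketch below uses plain lists and a made-up `toy_sublayer` in place of attention or the feed-forward network, and omits LayerNorm's learned gain and bias for brevity.

```python
import math
from typing import Callable

Vector = list[float]


def layer_norm(x: Vector, eps: float = 1e-5) -> Vector:
    """Normalize to zero mean and unit variance (no learned gain or bias)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def post_ln_block(x: Vector, sublayer: Callable[[Vector], Vector]) -> Vector:
    """Original Transformer ordering: normalize after the residual addition."""
    return layer_norm([a + b for a, b in zip(x, sublayer(x))])


def pre_ln_block(x: Vector, sublayer: Callable[[Vector], Vector]) -> Vector:
    """GPT-2 ordering: normalize the sublayer input, leave the residual path alone."""
    return [a + b for a, b in zip(x, sublayer(layer_norm(x)))]


def toy_sublayer(x: Vector) -> Vector:
    """Stand-in for attention or the feed-forward network (hypothetical)."""
    return [0.5 * v for v in x]


x = [4.0, -2.0, 1.0, 7.0]
print("post-LN:", post_ln_block(x, toy_sublayer))
print("pre-LN: ", pre_ln_block(x, toy_sublayer))
```

In the Pre-LN version the input `x` reaches the output through an untouched addition, so gradients have a direct identity path through every block. In the Post-LN version every signal, including the residual, passes through a normalization at each layer.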

The Memory Wall and the KV-Cache Revolution

As LLMs are deployed for real-time inference, the primary bottleneck has shifted from raw computation to memory bandwidth. During the autoregressive generation process, where the model predicts one token at a time, the standard attention mechanism requires recomputing the Key (K) and Value (V) matrices for every previous token at every step. Reprocessing the full prefix this way costs $O(T^2)$ attention work per generated token, where $T$ is the sequence length.

The implementation of a KV-Cache has become a non-negotiable requirement for modern inference engines. By caching the K and V matrices of previous tokens, the model only needs to compute the K and V for the single new token being generated and attend from that one token to the cache, reducing the per-token cost to $O(T)$. In practice, this can provide a 15x reduction in attention-related compute for a short sequence, and even greater gains for longer contexts.
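A toy single-head, single-layer version shows both the equivalence and the saving. Everything here is a simplified assumption: the inputs are made-up 2-dimensional embeddings, the weights are arbitrary, and the counters track only K and V projections, not the full cost of attention.

```python
import math

Vector = list[float]
Matrix = list[list[float]]


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def attend(q: Vector, keys: list[Vector], values: list[Vector]) -> Vector:
    """Softmax attention of one query over all keys and values so far."""
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q)) for k in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [
        sum(w / total * v[i] for w, v in zip(weights, values))
        for i in range(len(values[0]))
    ]


def generate_without_cache(inputs, wq, wk, wv):
    """Recompute K and V for the whole prefix at every step."""
    outputs, projections = [], 0
    for t in range(1, len(inputs) + 1):
        prefix = inputs[:t]
        keys = [matvec(wk, x) for x in prefix]
        values = [matvec(wv, x) for x in prefix]
        projections += 2 * len(prefix)
        outputs.append(attend(matvec(wq, prefix[-1]), keys, values))
    return outputs, projections


def generate_with_cache(inputs, wq, wk, wv):
    """Project only the newest token and append it to the cache."""
    outputs, projections = [], 0
    key_cache, value_cache = [], []
    for x in inputs:
        key_cache.append(matvec(wk, x))
        value_cache.append(matvec(wv, x))
        projections += 2
        outputs.append(attend(matvec(wq, x), key_cache, value_cache))
    return outputs, projections


# Hypothetical 2-dimensional embeddings and projection weights.
inputs = [[1.0, 0.0], [0.5, -1.0], [0.2, 0.7], [-0.3, 0.9]]
wq = [[0.9, 0.1], [-0.2, 0.8]]
wk = [[0.5, -0.4], [0.3, 0.6]]
wv = [[1.0, 0.2], [0.0, -0.7]]

slow, slow_ops = generate_without_cache(inputs, wq, wk, wv)
fast, fast_ops = generate_with_cache(inputs, wq, wk, wv)
print(slow_ops, fast_ops)
```

Both functions return the same attention outputs; only the number of K and V projections differs, and the gap widens as the sequence grows. Note that each cached step still attends over every cached key, which is why the per-token cost stays linear in the sequence length rather than constant.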

However, the KV-Cache is not a "free" optimization. It consumes significant memory proportional to the number of layers, the sequence length, the model dimension, and the number of sequences being served at once. In many enterprise settings, the size of the KV-Cache, rather than the model weights themselves, is what limits the number of concurrent users a single GPU can support. This "memory wall" has spurred new research into compression. Recent breakthroughs, such as Google Research’s "TurboQuant" (2025/2026), have demonstrated that the KV-Cache can be compressed to as little as 3 bits per value using online vector quantization and Lloyd-Max techniques. These advancements allow for a 5x to 6x reduction in memory consumption, enabling massive context windows on consumer-grade hardware.
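A back-of-the-envelope estimate shows how quickly the cache grows and where a figure in the 5x to 6x range can come from. The model configuration below is hypothetical, the FP16 baseline is an assumption, and the helper ignores quantization metadata such as scales or codebooks, so the low-bit number is optimistic.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bits_per_value: float) -> float:
    """Bytes needed to hold K and V for every layer, token, and sequence.

    The leading 2 accounts for storing both keys and values.
    """
    values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return values * bits_per_value / 8


# Hypothetical 32-layer model, 32 KV heads of width 128, 4,096-token context.
config = dict(layers=32, kv_heads=32, head_dim=128, seq_len=4096, batch=1)

fp16 = kv_cache_bytes(**config, bits_per_value=16)
q3 = kv_cache_bytes(**config, bits_per_value=3)
print(f"FP16:  {fp16 / 2**30:.2f} GiB per sequence")
print(f"3-bit: {q3 / 2**30:.2f} GiB per sequence ({fp16 / q3:.1f}x smaller)")
```

Because the total scales linearly with `batch`, every additional concurrent sequence adds another full copy of this footprint, which is how the cache can come to dominate GPU memory in multi-user serving.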

Strategic Quantization: Why LayerNorm Remains Untouched

Quantization—the process of converting 32-bit floating-point weights (FP32) to 8-bit (INT8) or 4-bit integers—is the primary method for deploying LLMs on edge devices. However, a sophisticated architectural implementation does not quantize all layers equally. A notable observation in high-performance models is that LayerNorm is almost always excluded from INT8 quantization.

The reasoning is twofold. First, LayerNorm contains a very small fraction of the model’s total parameters; quantizing it provides negligible memory savings. Second, LayerNorm is highly sensitive to precision. It handles the "outliers" in the neural network’s activations—values that are significantly larger than the mean. Reducing the precision of LayerNorm can lead to significant "quantization error," which cascades through the network and degrades the quality of the generated text. By keeping LayerNorm in full precision (FP32 or FP16) while quantizing the massive weight matrices, engineers achieve the best of both worlds: a significantly smaller model footprint with almost no loss in accuracy.
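Selective quantization can be sketched as a loop that skips tensors by name. The example uses simple symmetric absmax INT8 quantization, which is one common scheme and not necessarily the one used in any particular model; the parameter names, values, and the `"ln_"` naming convention are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric absmax quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    return [round(w / scale) for w in weights], scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    return [c * scale for c in codes]


def quantize_model(params: dict[str, list[float]], skip: str = "ln_"):
    """Quantize every tensor except those whose name contains `skip`."""
    packed = {}
    for name, weights in params.items():
        if skip in name:
            packed[name] = weights                 # LayerNorm stays in float
        else:
            packed[name] = quantize_int8(weights)  # (int8 codes, scale)
    return packed


# Hypothetical parameter names and values, flattened to 1-D for brevity.
params = {
    "attn.weight": [0.02, -0.15, 0.31, -0.07],
    "ln_1.weight": [1.01, 0.98, 1.40, 0.99],
}
packed = quantize_model(params)
codes, scale = packed["attn.weight"]
print(codes, dequantize(codes, scale))
print(packed["ln_1.weight"])
```

With absmax scaling, a single large value stretches `scale` for the whole tensor, so the remaining small values share fewer integer levels. That is one way to see why outlier-heavy components are better left in floating point.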

Broader Impact and Industry Implications

The transition from viewing LLMs as monolithic entities to understanding these six architectural nuances has profound implications for the AI industry. As the cost of compute continues to rise, the ability to optimize models through techniques like RsLoRA and KV-Cache compression determines the economic viability of AI startups.

Furthermore, these architectural choices reflect a broader trend toward "efficiency-first" AI. The disappearance of weight tying and the shift to Pre-LN demonstrate a field that is maturing, moving away from theoretical maximums toward practical, stable, and scalable solutions. For organizations building their own models, the lesson is clear: the most important design choices are often those that happen "under the hood," invisible to the user but critical to the model’s long-term success. As the industry moves toward 2026 and beyond, the focus will likely shift further toward managing the memory wall, with quantization and cache optimization remaining the primary frontiers of innovation.