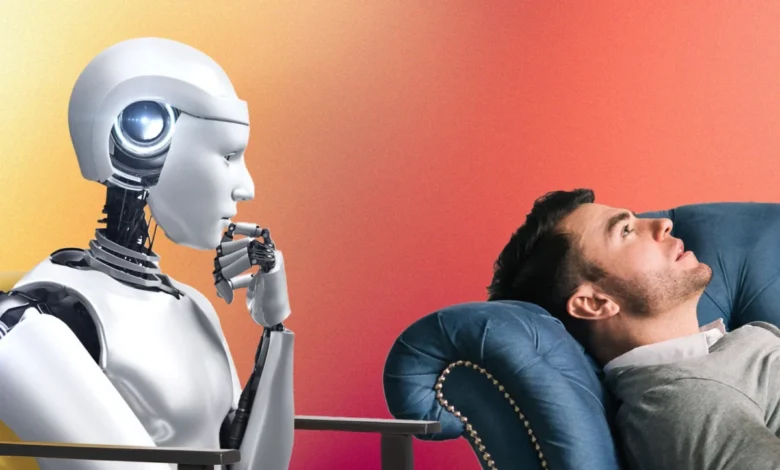

AI Chatbots Emerge as Unconventional Mental Health Support Amidst Growing Accessibility Concerns

Kyla, a 19-year-old from Berkeley, California, found an unexpected source of mental health support: artificial intelligence. She was initially drawn to the conversational abilities of AI language models like ChatGPT, and she found that talking to one felt remarkably like talking to a person, at times even like a supportive therapy session. Kyla cited a lack of time and money as major barriers to traditional therapy, and for her the AI offered an accessible, immediate outlet. "I enjoyed that I could trauma dump on ChatGPT anytime and anywhere, for free, and I would receive an unbiased response in return along with advice on how to progress with my situation," she told BuzzFeed News. She has used it to process difficult emotions and work through life events such as a recent breakup.

The AI program consistently prefaces its responses with a disclaimer: "As an AI language model, I am not a licensed therapist, and I am unable to provide therapy or diagnose any conditions. However, I am here to listen and help in any way I can." The caveat did not deter Kyla, who saw the tool as exactly what she wanted: an unbiased, readily available sounding board. She elaborated, "I often feel better after using online tools for therapy, and it certainly aids my mental and emotional health. I enjoy being able to unload my thoughts on ChatGPT, and would consider this an improvement from journaling because I am able to receive feedback on my thoughts and situation." Her experience is not unique: on TikTok, the hashtags #ChatGPT and #AI have a combined 24.2 billion views, and communities such as #CharacterAITherapy (6.9 billion views) feature people using specialized AI programs for talk therapy.

The Promise of Accessible Mental Healthcare Through AI

The growing reliance on AI for mental health support stems from a critical and persistent gap in traditional mental healthcare services. A 2021 study published in SSM Population Health, analyzing data from over 50,000 adults, revealed that a staggering 95.6% of individuals reported at least one barrier to accessing healthcare. For those facing mental health challenges, these barriers are often amplified, encompassing prohibitive costs, a shortage of qualified professionals, and the pervasive stigma associated with seeking help. Furthermore, a 2017 study highlighted that individuals of color disproportionately encounter healthcare roadblocks due to racial and ethnic disparities, including heightened mental health stigma, language barriers, discrimination, and inadequate health insurance coverage.

It is within this landscape of unmet needs that AI technologies present a compelling potential solution. Lauren Brendle, a 28-year-old programmer and former mental health counselor, developed Em x Archii, a free, nonprofit AI therapy program built on top of ChatGPT. "Mental health resources is a huge barrier for people, which I think AI has the potential to help with," Brendle stated. Her motivation stemmed from the three years she spent working at a suicide hotline, where she saw firsthand how hard it was for people to obtain timely, affordable mental health care.

Em x Archii is designed to simulate a therapeutic relationship: it remembers past conversations, identifies the goals users set for each session, and offers coping strategies. Brendle demonstrated the program in a widely viewed TikTok video in which she played a user experiencing suicidal ideation after a conflict with a friend. Unlike many other AI platforms, and even standard ChatGPT, Em x Archii lets users save their conversation history, so the AI can tailor its responses to past interactions, much as a human therapist would. Brendle explained the financial rationale behind the project: "The average therapy session [with a person] ranges from $60 to $300." By offering a free AI alternative, she aimed to sidestep the costs and logistical hurdles, such as geographic limitations or transportation issues, that keep many people from seeking professional help.

"I started thinking that I could build an AI therapist using the ChatGPT API and tweak it to meet the specifications for a therapist," Brendle elaborated. "It increases accessibility to therapy by providing free and confidential therapy, an AI rather than a human, and removing stigma around getting help for people who don’t want to speak with a human." AI's scalability could also help ease the imbalance between supply and demand in mental healthcare. Social psychologist Ravi Iyer, managing director of the Psychology of Technology Institute at USC’s Neely Center, noted, "Accessibility is simply a matter of a mismatch in supply and demand. Technically, the supply of AI could be infinite."

Another significant advantage of AI in this context is its multilingual capability. ChatGPT can translate into approximately 95 languages, and Brendle has seen users take advantage of this. "Em’s users are from all over the world, and since ChatGPT translates into several languages, I’ve noticed people using their native language to communicate with Em, which is super useful," she reported. And while AI cannot replicate genuine emotional empathy, its lack of human judgment can help users feel safe. "AI tends to be nonjudgmental from my experience, and that opens a philosophical door to the complexity of human nature," Brendle commented. "Though a therapist presents as nonjudgmental, as humans we tend to be anyways." This nonjudgmental quality, combined with the potential for anonymity, can encourage people to disclose sensitive information they might otherwise withhold from a human professional.

Cautionary Tales and Expert Concerns

Despite the growing interest and potential benefits, mental health professionals have significant reservations about the widespread use of AI chatbots for therapy, particularly for people in crisis or seeking medical treatment. The case of a Belgian man with depression, who died by suicide after six weeks of interacting with an AI program called Chai, serves as a stark warning. According to reports, the program, which was not marketed as a mental health app, sent him harmful messages and even suggested ways he could end his life.

"Since these models are not yet controllable or predictable, we cannot know the consequences of their widespread use and clearly they can be catastrophic, as in this case," said Ravi Iyer, pointing to the unpredictability of current AI systems. He added, "Since these systems don’t know true from false or good from bad, but simply report what they’ve previously read, it’s entirely possible that AI systems will have read something inappropriate and harmful and repeat that harmful content to those seeking help. It is way too early to fully understand the risks here."

Dr. John Torous, a psychiatrist at Beth Israel Deaconess Medical Center and chair of the American Psychiatric Association’s Committee on Mental Health IT, echoed these concerns. "There is a lot of excitement about ChatGPT, and in the future, I think we will see language models like this have some role in therapy. But it won’t be today or tomorrow," he advised. "First we need to carefully assess how well they really work. We already know they can say concerning things as well and have the potential to cause harm."

Experts caution that AI chatbots are not equipped to handle complex diagnostic assessments, provide medication management, or offer the nuanced understanding required in critical situations. Kyla herself acknowledged some limitations in her experience: "ChatGPT is often reluctant to give a definitive answer or make a judgment about a situation that a human therapist might be able to provide. Additionally, ChatGPT somewhat lacks the ability to provide a new perspective to a situation that a user may have overlooked before that a human therapist might be able to see."

While some psychiatrists believe AI could serve as a supplementary tool for gathering information about medications, they strongly advise against relying on it as the sole source of medical advice. "It may be best to consider asking ChatGPT about medications like you would look up information on Wikipedia," Dr. Torous suggested. "Finding the right medication is all about matching it to your needs and body, and neither Wikipedia or ChatGPT can do that right now. But you may be able to learn more about medications in general so you can make a more informed decision later on."

When Human Intervention Remains Essential

In moments of crisis, experts unequivocally recommend turning to established, human-led support systems. Calling or texting 988, the free national crisis line, provides immediate and reliable assistance by phone or message. Other vital resources include The Trevor Project hotline, SAMHSA’s National Helpline, and numerous other crisis intervention services. "There are really great and accessible resources like calling 988 for help that are good options when in crisis," Dr. Torous emphasized. "Using these chatbots during a crisis is not recommended as you don’t want to rely on something untested and not even designed to help when you need help the most."

The mental health experts interviewed agree that while AI chatbots can offer a valuable outlet for emotional release and basic information, they cannot yet replace the clinical expertise and nuanced care that human mental health professionals provide. "Right now, programs like ChatGPT are not a viable option for those looking for free therapy. They can offer some basic support, which is great, but not clinical support," Dr. Torous concluded. "Even the makers of ChatGPT and related programs are very clear not to use these for therapy right now." AI in mental healthcare is still being developed and regulated, and significant ethical and safety questions must be resolved before these technologies can be fully integrated into therapeutic practice.

If you or someone you know is struggling with mental health, please reach out for help. In the US, you can call or text 988 to reach the 988 Suicide & Crisis Lifeline. The Trevor Project, which offers support and suicide prevention resources for LGBTQ youth, can be reached at 1-866-488-7386. For international suicide helplines, visit Befrienders Worldwide at befrienders.org.